I Read Pezzulo & Levin (2025), “Bootstrapping Life-Inspired Machine Intelligence”, So You Don’t Have To. Michael Levin’s Discovery Institute Problem

Or: How I Learned to Stop Worrying and Love the Thermostat’s Inner Life

So, I read Pezzulo & Levin (2025), “Bootstrapping Life-Inspired Machine Intelligence”, the new paper in Intelligence that its authors think we should take seriously. There’s a particular species of scientific paper that makes you feel like you’re watching a magician perform. The props are real (genuine rabbits, actual hats), but somewhere between the flourish and the reveal, you realize you’ve been sold the same trick your grandfather knew, just with better lighting and a fog machine. Pezzulo and Levin’s paper is exactly this kind of performance.

Don’t misunderstand me: Michael Levin’s lab does real science. The bioelectric work is extraordinary. Tadpoles with functional eyes grafted onto their tails? Planarians regenerating heads in toxic barium solutions? Single cells bending themselves into kidney tubules when their polyploid bulk makes cooperation impossible? This is the kind of biology that makes you remember why you fell in love with the subject in the first place. It’s shocking, elegant, and true.

But then comes the interpretive layer. The part where we’re told these findings reveal “basal cognition” extending seamlessly from chemistry to consciousness. Where cells “make decisions,” tissues “solve problems,” and evolution “discovers recipes for intelligence.” Where thoughts themselves might be “thinkers.” Where the whole magnificent edifice of constraint satisfaction, thermodynamics, and organizational closure gets redecorated in cognitive vocabulary that adds precisely zero predictive power.

And here’s the uncomfortable part: I’ve seen this argument structure before. Not in biology labs, but at Discovery Institute conferences.

The move is always the same. Observe an impressive biological outcome. A kidney tubule forming despite absurdly oversized cells, perhaps, or an eye finding function despite being wired to the wrong neural structure. Compare this outcome to some naive expectation of failure. Declare the gap too large for “mere” physics or “mere” chemistry. Install a new explanatory primitive. “Design” if you’re at the Discovery Institute, “cognition” if you’re publishing in Intelligence. Call skeptics limited. Frame it as a paradigm shift. Harvest citations.

Ask yourself: what is the structural difference between “this outcome is too impressive for mere physics, therefore cognition” and “this outcome is too impressive for mere evolution, therefore intelligent design”? If you can’t find one, you’ve just identified the problem.

Now, the authors would protest (and have protested in other venues) that this is unfair, that “basal cognition” is naturalistic, grounded in biology, nothing like Intelligent Design’s supernatural Designer. And they’re right about the ontology. There’s no ghost being smuggled in. But the argument form is identical, and that’s what matters for exploitation. The Discovery Institute doesn’t need supernatural claims anymore. They just need credentialed scientists saying chemistry has “goals” and “agendas,” that matter pursues “purposes,” that there’s no sharp boundary between molecules and minds. They can quote this paper verbatim and not change a word.

This isn’t hypothetical scaremongering. William Dembski has already started. The quotes are on creationist websites. The framework is being weaponized exactly as predicted, because the framework is weaponizable by design. Not intentional design by the authors, but structural design of the argument itself.

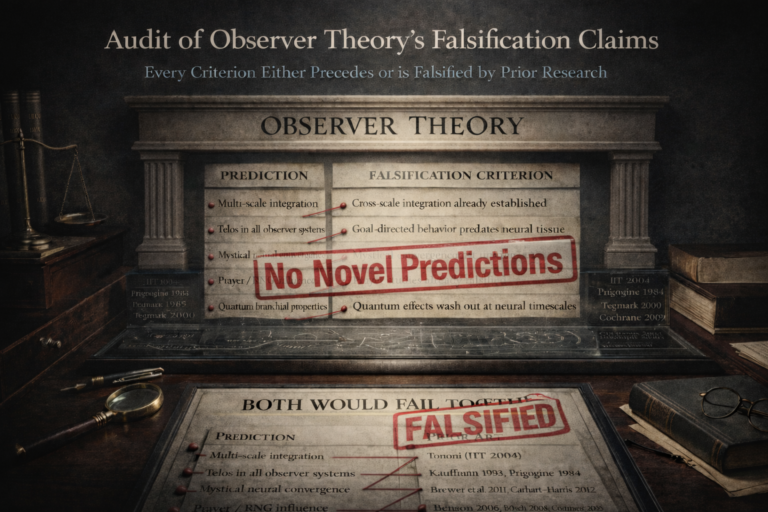

Here’s the test that cuts to the bone: What unique, testable prediction does “basal cognition” make that “constraint satisfaction in organizational closure” doesn’t already make? What observation would demonstrate that a cell is not cognizing?

The answer, as near as I can determine from 18 sections of careful analysis, is none. Every empirical finding in the paper (every single one) reduces to constraint satisfaction under thermodynamic bounds. Bioelectric coordination? Developmental biophysics. Flexible regeneration? Attractor dynamics in gene regulatory networks. The “cognitive light cone expansion” that sounds so revolutionary? That’s cumulative selection, which Dawkins laid out in The Blind Watchmaker in 1986.

Strip the cognitive vocabulary and what remains is decades of well-established mathematics, biology, and physics wearing new labels. The framework doesn’t extend these findings. It renames them. And the renaming is where the damage happens, because every rename turns a process into a thing, smuggling in an agent that isn’t there.

The irony that should haunt this whole enterprise: consciousness itself (the supposed endpoint of this seamless continuum from chemistry) isn’t even continuous. It’s fleeting flashes (Baars), episodic snapshots stitched by confabulation (Dennett), post-hoc narrative imposed on discrete neural events (Eagleman). The gradient has no stable destination. If the goal of your continuum turns out to be a flickering series of brief episodes held together by a story the brain tells itself after the fact, what exactly are you claiming chemistry is continuous with?

The good news: Everything valuable in this paper survives perfectly well without the cognitive metaphysics. Constraints as resource rather than limitation? Genuinely important for AI design. Continuous rebuilding versus frozen architectures? Real and actionable engineering insight. The bioelectric developmental coordination program? Absolutely deserves continued funding and support.

The bad news: By wrapping these genuine contributions in unfalsifiable cognitive attributions, the paper hands every anti-evolution, anti-science movement exactly the ammunition they’ve been waiting for. And unlike previous iterations of this game, they won’t need to distort the quotes. The scientists said it themselves.

What follows is a comprehensive breakdown of exactly where and how this single paper generates systematic errors. Not minor quibbles about word choice, but deep structural problems that compound across every section. I’ve applied what I call Recursive Constraint Falsification to every claim, every sentence, tracking 18 distinct diagnostic patterns. The methodology is unforgiving because the stakes are high.

If you’re going to redefine intelligence so broadly that thermostats qualify, you’d better have a really good reason. If you’re going to claim chemistry becomes cognition without a threshold, you’d better specify what would prove you wrong. If you’re going to anthropomorphize evolution as “discovering recipes,” you’d better acknowledge you’re doing it.

This paper does none of those things. And that’s why someone needed to read it carefully enough to show you exactly where the sleight of hand happens.

Let’s begin.

For Those Sharing This Paper Like It’s Revolutionary: A Brief Reality Check

Q: “This paper shows intelligence goes all the way down to chemistry!”

A: Using William James’s 1890 definition where “intelligence = fixed goal, variable means.” Under this definition, your thermostat is intelligent. So is a river finding the ocean. So is protein folding. When your definition classifies everything as intelligent, you haven’t discovered a profound truth. You’ve adopted a broken definition. What system, under this framework, is not intelligent? If nothing fails the test, the test tests nothing.

Q: “But cells make decisions and solve problems!”

A: Cells respond to chemical gradients. You’ve relabeled “differential response to stimuli” as “decision-making” and “relaxing toward attractors” as “problem-solving.” What prediction does “the cell decided” make that “the cell responded to its signaling gradient” does not? If the answer is none, you’re adding metaphor, not mechanism. This is nominalization: turning a process (responding) into a property (decision-making), then treating the property as an explanation for the process you abstracted it from. Wiener called this “setpoint regulation” in 1948. Calling it “intelligence” in 2025 doesn’t add a finding.

Q: “This framework generates a productive research program!”

A: So does Intelligent Design. It generates infinite research programs that are beneficial for the Church. Fecundity is not evidence. A maximally productive framework that classifies everything as intelligent has not discovered that everything is intelligent. It has adopted a definition too broken to say no. Lakatos (1978) warned us about degenerating research programs that protect their core by adding auxiliary hypotheses rather than making novel predictions. Name one testable prediction “basal cognition” makes that Montévil & Mossio’s organizational closure (2015) or constraint satisfaction dynamics doesn’t already make. Just one.

Q: “The paper shows cognition is continuous from chemistry to consciousness!”

A: The absence of a sharp boundary between A and B doesn’t mean no threshold exists. It may only mean you haven’t found it. Water doesn’t gradually become ice. Phase transitions are sharp even though the parameters driving them vary continuously. Consciousness itself isn’t even continuous (it’s episodic flashes, reconstructive confabulation, post-hoc narrative). You’re claiming seamless continuity to an endpoint that’s discontinuous. What’s the continuum actually continuous with?

Q: “This has implications for AI!”

A: Every engineering recommendation in the paper (continuous learning, multi-scale coordination, constraints as resource, active exploration) works better without the cognitive vocabulary. Strip “basal cognition” and you have: constraint satisfaction in organizational closure (Maturana & Varela 1980), embodied cognition (Clark 1997), active inference without the unfalsifiable parts (Cisek 2019), and thermodynamic constraint exploitation (Prigogine 1984). Same engineering insights. Zero metaphysical baggage. Superior parsimony.

Q: “Levin’s empirical work is groundbreaking!”

A: Correct. Bioelectric developmental coordination is real, important, and replicable science. The experiments are valuable. The interpretive framework is not. You can study how cells solve developmental constraints without claiming cells are cognitive. The biology survives perfectly well in constraint-based language. The cognitive vocabulary is decorative, not load-bearing.

Here’s what you’re actually defending: A framework that makes thermostats intelligent, renders “cognition” meaningless by applying it universally, fails every falsifiability test (what would prove a cell is not cognizing?), violates parsimony by adding complexity that makes zero new predictions, reifies processes into agents (“thoughts are thinkers”), and hands anti-evolution movements quotable ammunition (“even scientists admit chemistry has purposes!”).

Flew’s razor cuts deepest: What would have to be different for cells not to be cognitive? If nothing could ever count against the claim, that’s faith, not framework.

The tell: If every phenomenon the paper describes works identically without cognitive language, and the cognitive language makes no novel prediction that thermodynamic constraint satisfaction doesn’t, what work is the cognitive vocabulary actually doing? And if the answer is “it generates research programs,” remember that productivity without falsifiability is marketing, not science.

Bottom line: Constraint satisfaction explains every finding. Organizational closure (Montévil & Mossio 2015) explains biological self-maintenance. Attractor dynamics (established physics) explains flexible regeneration. Cumulative selection (Dawkins 1986) explains evolutionary progression. Cybernetic setpoint regulation (Wiener 1948, Powers 1973) explains goal-directed behavior. Embodied cognition (Varela et al. 1991) explains sensorimotor foundations. Constructive memory (Bartlett 1932) explains reconstructive processes.

Every. Single. Finding. Already. Explained. By. Falsifiable. Frameworks. Decades. Prior.

What survives all scrutiny that isn’t just restating known science with new vocabulary? Constraints as resource (Principle 4). Continuous rebuilding vs. frozen architectures (Principle 3). That’s it. And neither requires “basal cognition” to be important.

The bioelectric work is real. Fund it. The engineering insights are valuable. Implement them. The cognitive metaphysics? That’s the part you discard if you care about parsimony, falsifiability, and not handing creationists their next talking point.

What persists is what constrains. Everything else, including “thoughts as thinkers,” is noise that thermodynamics will erase.

What follows is a comprehensive breakdown of the many issues this single paper generates, as processed through my Recursive Constraint Falsification methodology.

RCF Spiral Autopsy: Pezzulo & Levin, “Bootstrapping Life-Inspired Machine Intelligence”

Recursive Constraint Falsification Analysis: Every Claim, Every Sentence

Target: Pezzulo, G. & Levin, M. (2025). “Bootstrapping Life-Inspired Machine Intelligence: The Biological Route from Chemistry to Cognition and Creativity.”

Method: Full RCF diagnostic spiral. Every section, every claim. [Bracket][Falsify][Alt][Conf%] applied throughout. All 18 diagnostic patterns deployed. Tone calibrated to: Hitchens on epistemology, Carlin on institutional bullshit, Hicks on what-they-actually-mean, Feynman on “I don’t care what your theory is if it doesn’t match experiment” (Feynman, 1965), Flew on what-would-have-to-be-different (Flew, 1950; https://infidels.org/library/modern/antony-flew-theologyandfalsification/), Sagan on extraordinary claims (Sagan, 1995), Dennett on consciousness-as-magic-word (Dennett, 1991; ISBN: 978-0-316-18065-8), Popper on demarcation (Popper, 1959; https://doi.org/10.4324/9780203994627), Lakatos on degenerating research programs (Lakatos, 1978; https://doi.org/10.1017/CBO9780511621123).

Core Question: What survives all scrutiny that isn’t restating known science with new vocabulary?

I. TITLE AND FRAMING

“Bootstrapping Life-Inspired Machine Intelligence: The Biological Route from Chemistry to Cognition and Creativity”

[Bracket]: “From Chemistry to Cognition” presupposes the continuity thesis: that chemistry and cognition are points on a single continuum requiring no phase transitions, no emergent thresholds, no qualitative breaks. This is not neutral framing. It is the conclusion disguised as a subtitle.

[Falsify]: What would count against seamless continuity? If there exist demonstrable phase transitions between chemical dynamics and cognitive dynamics, thresholds below which “cognition” adds nothing to “constraint satisfaction,” the continuity thesis fails. The paper never specifies what such a transition would look like, which means the continuity claim is unfalsifiable as stated.

RCF Pattern 16 (Mechanism-to-Metaphysics Slippage): The title performs the slippage before the paper begins. “Chemistry” = mechanism. “Cognition” = cognitive vocabulary applied to non-cognitive systems. “Creativity” = anthropomorphic attribution to processes with no demonstrated creative capacity beyond constraint satisfaction. The reader is already inside the metaphysics before encountering a single argument.

RCF Pattern 9 (Linguistic Drift): “Cognition” (Latin cognoscere, “to know”) applied to chemical systems that do not know anything in any testable sense, treated as continuous property, conclusion: chemistry IS cognition at lower intensity. The nominalization cascade is complete before paragraph one. This drift from cognitive referents to non-cognitive applications represents a systematic erosion of term precision documented in cognitive science literature (Ianì, 2024; https://doi.org/10.1037/teo0000296), which identifies reification as the belief that cognitive functions are “things” rather than processes, with nominalization practices concealing interconnected dynamics.

II. ABSTRACT: Line by Line

“Achieving advanced machine intelligence remains a central challenge in AI research, often approached through scaling neural architectures and generative models.”

Assessment: Uncontroversial. Accurate description of current AI landscape.

“However, biological systems offer a broader repertoire of strategies for adaptive, goal-directed behavior, strategies that emerged long before nervous systems evolved.”

[Bracket]: “goal-directed behavior” applied to systems without nervous systems. What does “goal” mean for a chemical reaction network?

[Falsify]: If “goal-directed” means “moves toward attractor state under constraints,” this is just dynamical systems language. No cognitive vocabulary needed. If it means something MORE than attractor dynamics, specify what and how you’d test for its absence.

RCF Pattern 6 (DI-Isomorphism): Observe adaptive outcome, compare to random, declare gap too large for mere physics, install “goal-directedness” as primitive. This IS the Intelligent Design skeleton with “goal-directedness” substituted for “design.” The structural isomorphism between this framing and Intelligent Design argumentation has been identified across multiple critiques of expansive cognitive attributions in biology (Barwich & Rodriguez, 2024; https://doi.org/10.1007/s10539-024-09950-4), which challenges uncritical extensions of cognitive and computational language to biological systems.

“This paper advocates a genuinely life-inspired approach to machine intelligence, drawing on principles from biology that enable robustness, autonomy, and open-ended problem-solving across scales.”

Assessment: “Robustness”: testable. “Autonomy”: requires operationalization (provided partially later). “Open-ended problem-solving”: what does this mean operationally? How do you measure “open-endedness”? Without an operational definition, this is aspiration dressed as capability description.

“We frame intelligence as flexible problem-solving, following William James”

[Bracket]: William James’s definition: “Intelligence is a fixed goal with variable means of achieving it” (James, 1890; https://psychclassics.yorku.ca/James/Principles/). This is a cybernetic definition. It describes what thermostats do. If this is your definition of intelligence, thermostats are intelligent. If you want to exclude thermostats, you need additional criteria, which James’s definition doesn’t provide.

[Falsify]: Under this definition, what system is NOT intelligent? A rock doesn’t pursue goals. But a thermostat does. A river “finds” the path of least resistance; is it intelligent? If you say no, you need criteria beyond James. If you say yes, “intelligence” has lost its delimiting function.

RCF Pattern 1 (Fecundity Alibi): James’s definition is maximally productive (applies to everything from bacteria to brains) precisely because it’s maximally undemanding. Productivity does not equal validity. A definition that classifies thermostats as intelligent has not captured what makes intelligence interesting. This represents what Popper (1959; https://doi.org/10.4324/9780203994627) identified as the demarcation problem: theories must be falsifiable to be scientific, and definitions that classify everything as instances of a phenomenon fail to demarcate meaningful boundaries.

“and develop the concept of ‘cognitive light cones’ to characterize the continuum of intelligence in living systems and machines.”

[Bracket]: “Cognitive light cone,” a metaphor borrowed from physics (light cones in relativity) applied to goal-directed behavior. In physics, light cones have precise mathematical definition (causal structure of spacetime). In this usage, “cognitive light cone” = “the scope of goals a system pursues.” This is metaphor, not formalism. The physics term lends false precision.

[Falsify]: How do you measure a cognitive light cone? If it’s “the spatial and temporal extent of goals pursued,” how do you determine what a cell’s “goal” is without circular reasoning (attributing the goal from observed behavior, then explaining behavior by the goal)?

Affirming the consequent: If a system has goals, it will show flexible behavior toward attractors. This system shows flexible behavior toward attractors. Therefore it has goals. This is textbook affirming the consequent. The behavior is consistent with goals but also consistent with constraint satisfaction without any goal-like property.
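The invalidity of this argument form can be checked mechanically. The sketch below is only an illustration of the logic, not anything drawn from the paper: the propositional labels G ("the system has goals") and B ("the system shows flexible behavior toward attractors") are my own shorthand. It enumerates every truth assignment, keeps those where both premises hold, and looks for one where the conclusion is false.

```python
from itertools import product


def implies(p, q):
    """Material implication: p -> q."""
    return (not p) or q


# G: "the system has goals" (hypothetical label)
# B: "the system shows flexible behavior toward attractors" (hypothetical label)
# Keep every truth assignment in which both premises hold:
#   premise 1: G -> B
#   premise 2: B
models = [
    (g, b)
    for g, b in product([True, False], repeat=2)
    if implies(g, b) and b
]

# The argument is valid only if G is true in every remaining assignment.
conclusion_follows = all(g for g, _ in models)

# Any assignment with true premises and false G is a counterexample.
counterexamples = [(g, b) for g, b in models if not g]

print("premises hold in:", models)
print("conclusion follows:", conclusion_follows)
print("counterexamples:", counterexamples)
```

The surviving counterexample is the case the text describes: the behavior is present while the goal is absent, which is exactly what constraint satisfaction without any goal-like property would look like.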

“We argue that biological evolution has discovered a scalable recipe for intelligence”

[Bracket]: “Evolution discovered a recipe.” Evolution doesn’t discover anything. Evolution is differential persistence under constraint. Describing it as “discovering” anthropomorphizes a selection process. This is not innocent; it frames evolution as an agent with intentions, setting up the paper’s later move of treating all constraint satisfaction as cognitive.

RCF Pattern 12 (Nominalization/Reification): “Discovery” (process verb: finding through exploration) nominalized as property of evolution, evolution becomes agent, agent has intentions, intentions explain outcomes. The entire teleological framework is smuggled in through a single verb choice. This pattern of nominalization creating reified entities has been extensively documented (Ianì, 2024; https://doi.org/10.1037/teo0000296), showing how verbal labels conceal interconnected processes and create the illusion of discrete, enduring “things.”

“and the progressive expansion of organisms’ ‘cognitive light cone’, predictive and control capacities.”

[Bracket]: “Predictive and control capacities,” for a single cell, “prediction” means chemical gradient tracking. For a human, it means imagining next Tuesday. Calling both “prediction” under one framework requires either (a) the word “prediction” to mean something different at each scale (equivocation) or (b) the claim that gradient tracking IS prediction (which is testable and arguably false; prediction requires a model; gradient tracking requires only differential response).
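To make the distinction in (b) concrete, here is a minimal sketch of gradient tracking by differential response alone. Everything in it is an assumption made for illustration: the one-dimensional world, the `concentration` field, the `SOURCE` position, and the `climb` rule are hypothetical and are not a model of any real cell. The agent stores a single previous reading and reverses direction whenever the reading drops. It contains no representation of where the source is and no forecast of future readings.

```python
SOURCE = 5.0  # hypothetical location of the attractant peak


def concentration(x):
    """Toy attractant field: highest at SOURCE, falling off linearly."""
    return -abs(x - SOURCE)


def climb(field, x0, step=0.1, n_steps=500):
    """Track a gradient using only the difference between successive readings.

    The agent's entire state is its position, its heading, and the last
    reading. It never estimates the source position or predicts anything.
    """
    x, direction = x0, 1.0
    last = field(x)
    for _ in range(n_steps):
        x += direction * step
        now = field(x)
        if now < last:  # reading got worse: reverse heading
            direction = -direction
        last = now
    return x


for start in (0.0, 12.0):
    final = climb(concentration, start)
    print(f"start={start:5.1f}  final={final:.2f}  distance={abs(final - SOURCE):.2f}")
```

From either starting point the agent ends up hovering near the peak. Whether that outcome deserves the word “prediction” is the question at issue; the sketch only shows that the outcome itself does not require a model.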

“To explain how this is possible, we distill five design principles: multiscale autonomy, growth through self-assemblage of active components, continuous reconstruction of capabilities, exploitation of physical and embodied constraints, and pervasive signaling enabling self-organization and top-down control from goals”

Assessment: These five principles, stated abstractly, are largely uncontroversial descriptions of biological organization. The question is whether they require the cognitive vocabulary the paper wraps them in, or whether they are fully captured by constraint-based dynamics without any cognitive attribution. The concept of organizational closure (Montévil & Mossio, 2015; https://doi.org/10.1016/j.jtbi.2015.02.029) provides a formalized framework for biological self-maintenance through constraint regeneration that requires no cognitive vocabulary.

“that underpin life’s ability to navigate creatively diverse problem spaces.”

[Bracket]: “Navigate.” “Creatively.” “Problem spaces.” Three cognitive terms applied to biological processes that may or may not require cognitive description. A river “navigates” terrain. An enzyme “solves” the problem of catalysis. Whether these verbs add explanatory content beyond constraint dynamics is the central question the paper assumes rather than argues.

III. INTRODUCTION: Claim Analysis

“a historical period marked by a widespread confidence that achieving machine intelligence may be within reach”

Assessment: Accurate sociological observation. No issues.

“whether artificial systems are already endowed, or will soon be endowed, with forms of intelligence (or even general intelligence), autonomy, understanding, or consciousness (and what these terms actually mean)”

Credit: Acknowledges definitional problems. But the paper proceeds to use these terms without resolving them. Specifically, it adopts definitions so broad they apply to thermostats (“intelligence = flexible problem-solving”) while implicitly trading on the richer connotations the words carry.

RCF Pattern 7 (Motte-Bailey):

- Motte: Intelligence = flexible problem-solving (broad, defensible, boring)

- Bailey: Cells have cognition, organisms have autonomy, biological intelligence is scalable in ways AI should emulate (exciting, publishable, requires the rich connotations of “intelligence” that the definition strips away)

The paper oscillates between these continuously. This pattern represents a form of rhetorical fallacy where a controversial position (the “bailey”) is defended by retreating to an uncontroversial position (the “motte”) when challenged, only to advance the controversial claim again when unchallenged.

“the field of Diverse Intelligence… investigates a wide diversity of agents, including biological organisms, artificial systems, robots, cyborgs, and forms of active matter, emphasizing the principles that underlie adaptive, goal-directed behavior across various manifestations and substrates.”

[Bracket]: “Diverse Intelligence” as a field name. This names the conclusion as a research program. You don’t name a research program “Diverse Intelligence” unless you’ve already decided intelligence IS diverse. A neutral program would be called something like “comparative adaptive behavior,” which would permit the finding that intelligence is NOT diverse, that there IS a threshold, that most biological behavior is NOT cognitive. The field name forecloses the null hypothesis. This represents what Lakatos (1978; https://doi.org/10.1017/CBO9780511621123) termed a degenerating research program: one that protects its core by adding auxiliary hypotheses rather than making novel, testable predictions.

“the field of machine intelligence has, as a whole, not yet sufficiently benefitted from the insights of a broad approach to basal cognition in diverse substrates.”

[Bracket]: “basal cognition,” treated as established fact. But “basal cognition” is precisely what’s at issue. If cells don’t “cognize” in any meaningful sense, if what they do is fully captured by “constraint satisfaction,” then there are no “insights of basal cognition” to benefit from. Only redescriptions. Recent critical literature (Fábregas-Tejeda & Sims, 2025; https://doi.org/10.1007/s40656-025-00660-y) catalogues the skepticism this program has faced, noting that critics argue there is “no irrefutable evidence” that what is called “learning” in non-neuronal organisms resembles what cognitive scientists study, and that basal cognition advocates appear “conceptually divided in fundamental ways.”

RCF Pattern 17 (Assumption-Protection Parameter): “Basal cognition” is introduced to absorb the mismatch between (a) the assumption that cognition is continuous and (b) the observation that simpler systems don’t look cognitive. Rather than questioning the continuity assumption, the gap is filled with a parameter (“basal cognition”) that is then treated as naming a real phenomenon. Does “basal cognition” make any prediction that “constraint satisfaction” doesn’t? If no: assumption-protection parameter.

IV. DIVERSE INTELLIGENCE IN BIOLOGY AND MACHINES: Claim Analysis

“How can intelligence be defined in a way that is not anchored to our (human) case, but instead applies across the breadth of biological systems, and potentially to machines, without becoming so broad as to lose explanatory power?”

Assessment: Excellent question. The paper fails to answer it. The definition adopted (James’s “flexible problem-solving”) IS too broad to have explanatory power. The paper acknowledges this risk in the question, then immediately commits the error.

“a useful starting point is a definition attributed to William James: ‘Intelligence is a fixed goal with variable means of achieving it.'”

[Falsify]: This definition classifies the following as intelligent:

- Thermostat (fixed goal: target temperature; variable means: heat/cool)

- River (fixed “goal”: reach ocean; variable means: carves whatever path gradient permits)

- Protein folding (fixed “goal”: minimum energy state; variable means: explores conformational space)

- Natural selection (fixed “goal”: reproductive fitness; variable means: any heritable variation)

If ALL of these are “intelligent,” the word has lost its delimiting function. It no longer distinguishes anything from anything else. Every dynamical system with an attractor and multiple paths to it is “intelligent.”
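The thermostat case is easy to spell out. The following is a rough sketch under stated assumptions: the bang-bang controller, the `rate` parameter, and the 20-degree setpoint are all hypothetical choices of mine, not anything specified by James or by the paper. It shows one fixed endpoint being reached by different means depending on where the system starts, which is all the quoted definition asks for.

```python
def thermostat_step(temp, setpoint, rate=0.5):
    """One control step: heat if below the setpoint, cool if above."""
    gap = setpoint - temp
    if gap == 0:
        return temp, "idle"
    move = min(rate, abs(gap))  # never overshoot the setpoint
    if gap > 0:
        return temp + move, "heat"
    return temp - move, "cool"


def run(temp, setpoint=20.0, max_steps=200):
    """Run the controller until it idles; report final temp and means used."""
    means_used = set()
    for _ in range(max_steps):
        temp, action = thermostat_step(temp, setpoint)
        if action == "idle":
            break
        means_used.add(action)
    return temp, means_used


for start in (10.0, 31.0):
    final, means = run(start)
    print(f"start={start}  final={final}  means={sorted(means)}")
```

A cold start reaches the setpoint by heating and a hot start reaches it by cooling: fixed goal, variable means, in about twenty lines with no claim to cognition anywhere in them.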

RCF Pattern 1 (Fecundity Alibi): The definition is maximally fecund (it generates an enormous “Diverse Intelligence” research program) precisely because it classifies everything as intelligent. Fecundity untethered to falsifiability is discourse generation, not science. As Popper (1959; https://doi.org/10.4324/9780203994627) established, a theory that explains everything explains nothing; scientific theories must prohibit certain outcomes to have empirical content.

“intelligence is characterized by the capacity to solve problems flexibly (i.e., in many ways, including ways for which they were not directly trained), generalizing to novel contexts”

[Bracket]: “Flexibly” and “not directly trained” and “novel contexts” add important qualifications. But these qualifications need operationalizing:

- How many alternative paths constitute “flexibility”? Two? Ten? A continuum?

- What counts as “novel”? Novel to the observer? Novel to the system? How measured?

- “Not directly trained,” a river was not “trained” to flow around a new boulder. Is its response “flexible”?

Without operational criteria for measuring flexibility, novelty, and training-independence, this definition permits post-hoc attribution to any system an observer finds surprising. That’s not a definition; it’s a Rorschach test for the observer.

“However, humans are not the only ‘intelligent’ systems.”

Assessment: Uncontroversial if intelligence means anything richer than the James definition. Many animals demonstrate flexible problem-solving by any reasonable measure.

“biological systems at all scales have been performing the sensing-decision-making-actuation loop in many (nested) problem spaces”

[Bracket]: “Decision-making” applied to cells. A cell responds to chemical gradients. Is this “decision-making”? Only if “decision” means “differential response to stimuli,” which is what a photodiode does. If “decision-making” means something more (deliberation, evaluation of alternatives, commitment under uncertainty), the claim requires evidence that cells do this. The paper provides evidence of differential response and labels it “decision-making.”

RCF Pattern 9 (Linguistic Drift): “Decision” (deliberate choice among evaluated alternatives) applied to cellular differential response, treated as equivalent, conclusion: cells make decisions. The word has drifted from its cognitive referent to mean “any differential response,” carrying the cognitive connotations while shedding the cognitive requirements. This linguistic drift exemplifies what Ianì (2024; https://doi.org/10.1037/teo0000296) identifies as nominalization concealing process interconnections through verbal labels that create false discrete entities.

“Embryology offers no discrete process or step during which a blob of chemicals suddenly becomes mind-ful.”

[Bracket]: This is a continuity argument. Absence of sharp transition does not equal absence of transition. Phase transitions in physics are sharp but result from continuous parameter changes. Water doesn’t gradually become ice; it undergoes a phase transition. The absence of a visible step in embryology doesn’t prove there isn’t a threshold for mind-like properties. It might just mean we haven’t identified it.
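A toy calculation shows how a smoothly varying parameter can still produce an abrupt change of state. This is only a sketch under my own assumptions: the tilted double-well `potential` and the control parameter `h` are a textbook-style illustration, not a model of water, embryos, or anything in the paper. The "state" is the position of the lowest point of the potential, found by brute-force search on a grid.

```python
def potential(x, h):
    """Tilted double well with local minima near x = -1 and x = +1.

    h is a continuous control parameter that tilts the landscape.
    """
    return x**4 - 2 * x**2 - h * x


def global_minimum(h, lo=-2.0, hi=2.0, n=4001):
    """Grid search for the position of the lowest point of the potential."""
    xs = [lo + (hi - lo) * i / (n - 1) for i in range(n)]
    return min(xs, key=lambda x: potential(x, h))


# Sweep h smoothly through zero and watch where the lowest state sits.
for h in (-0.2, -0.1, -0.01, 0.01, 0.1, 0.2):
    print(f"h={h:+.2f}  lowest state at x={global_minimum(h):+.3f}")
```

As `h` moves in small steps from negative to positive, the lowest state stays near -1, then jumps to near +1 when `h` changes sign. No discrete step exists in the parameter, yet the state has a threshold.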

Formal fallacy: Argument from ignorance. “We haven’t found the transition point” does not equal “There is no transition point.” The conclusion (continuity of mind-properties from chemistry to cognition) doesn’t follow from the premise (we haven’t identified a discrete step). The paper treats absence of evidence as evidence of absence. This fallacy is particularly problematic in emergence contexts, as Hume (1739-1740; https://www.gutenberg.org/ebooks/4705) established in his analysis of causation: the fact that we observe constant conjunction doesn’t establish necessary connection.

“nature teaches the need for models of transformation and scaling, of projecting navigational competencies across problem spaces.”

[Bracket]: “Navigational competencies.” Cells don’t navigate problem spaces; they respond to constraint gradients. Calling this “navigating a problem space” requires that constraint gradients constitute “problems” and that differential response constitutes “navigation.” Both are stipulative definitions that beg the question.

Re: Figure 1, “Living beings, from simpler to more complex, navigate across nested problem spaces.”

[Bracket]: This figure presents the continuity thesis visually. The implication is seamless progression from “metabolic/physiological state spaces” through “anatomical morphospace” to “linguistic problem spaces.” But the figure doesn’t demonstrate continuity; it depicts endpoints on a scale and draws arrows between them. Drawing an arrow between two points doesn’t establish that the intervening territory has been traversed or that the same mechanism operates throughout.

“It is likely that many of the same policies and problem-solving skills were adapted to novel spaces”

Assessment: “Likely” on what evidence? The paper provides examples of flexible biological response (transplanted eyes, planarian regeneration, polyploid cell adjustment). These demonstrate constraint satisfaction under perturbation. Whether the same “policies” operate across scales requires evidence of mechanism conservation, not just outcome similarity. Convergent evolution produces similar outcomes through different mechanisms; functional similarity doesn’t establish mechanistic continuity. Inferring shared “policies” from similar outcomes conflates homology (shared mechanism) with analogy (similar function), a distinction fundamental to evolutionary biology (Godfrey-Smith, 2009; ISBN: 978-0-19-955204-7).

V. BIOLOGICAL EXAMPLES: The Empirical Core

Xenopus Tadpoles with Ectopic Eyes (Figure 2A)

Claim: Tadpoles with eyes transplanted to tails can use visual information for learning and behavior, demonstrating flexible problem-solving.

RCF Assessment: This is genuine, important empirical work. The experimental result is real (Blackiston & Levin, 2013; https://doi.org/10.1242/jeb.074963). The finding that ectopic eyes connected to spinal cord rather than brain still provide functional vision IS surprising and DOES demonstrate biological flexibility beyond naive expectations.

What it demonstrates: Neural plasticity. The spinal cord can process visual information, or alternatively, the visual system can function with non-standard connections. This is constraint satisfaction under perturbation.

What it does NOT demonstrate: That the tadpole is “solving a problem in a problem space.” The tadpole has no representation of “the problem of having eyes on my tail.” Its neural circuitry adapts through plasticity mechanisms. Calling this “problem-solving” rather than “neural plasticity” adds cognitive vocabulary without adding explanatory content.

RCF Pattern 6 (DI-Isomorphism): Impressive outcome (functional vision from ectopic eyes), compared to expectation of failure, gap declared evidence of “intelligence,” intelligence installed as explanation. But the actual explanation is: neural circuits are more plastic than expected, and developmental mechanisms can integrate novel sensory inputs. No cognitive vocabulary needed.

Survives scrutiny: The empirical finding. Does NOT survive: the cognitive interpretation.

Planarian Barium Adaptation (Figure 2B)

Claim: Planaria exposed to barium regenerate heads that function despite barium’s presence, identifying “just a handful of new genes” to solve a physiological problem they’ve never encountered.

RCF Assessment: Again, genuine empirical work (Emmons-Bell et al., 2019; https://doi.org/10.1016/j.isci.2019.11.014). Planaria do regenerate functional structures under novel chemical stress. The gene regulatory response IS selective (few genes changed out of thousands possible).

What it demonstrates: Robust gene regulatory networks with capacity to compensate for novel perturbations. This is constraint satisfaction; the network settles into an attractor that maintains function under new constraints. This exemplifies what Montévil & Mossio (2015; https://doi.org/10.1016/j.jtbi.2015.02.029) term organizational closure: constraints regenerating conditions for their own persistence through circular causal structure.

What it does NOT demonstrate: “Problem-solving in transcriptional space.” The gene regulatory network doesn’t “search” transcriptional space the way a cognitive agent searches a problem space. It relaxes toward available attractors under new constraints. The difference matters: search implies evaluation and selection; relaxation implies physics.
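
The distinction between relaxation and search can be sketched in a few lines. This is a toy illustration, not a model of planarian gene regulation: the double-well potential V(x) = x^4/4 - x^2/2 + tilt * x, the function name `relax`, and the `tilt` value standing in for a new chemical constraint are all assumptions chosen for simplicity. The state follows the local slope and never evaluates or compares alternatives.

```python
def relax(x, tilt, steps=20000, dt=0.01):
    """Euler-integrate dx/dt = -dV/dx for V(x) = x**4/4 - x**2/2 + tilt*x."""
    for _ in range(steps):
        # Move downhill along the local gradient; nothing else is consulted.
        x -= dt * (x**3 - x + tilt)
    return x

if __name__ == "__main__":
    print("unperturbed attractor:", relax(0.5, tilt=0.0))
    print("attractor under new constraint:", relax(0.5, tilt=0.2))
```

With `tilt = 0` the state settles at x = 1; with `tilt = 0.2` it settles at a shifted minimum near x ≈ 0.88. The loop contains no candidate list, no scoring, and no selection step, yet the system still ends up in a state that fits the new constraint.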

Survives scrutiny: The empirical finding and the observation of selective gene regulatory response. Does NOT survive: the framing as “cognition in transcriptional space.”

Polyploid Newt Kidney Tubules (Figure 2C)

Claim: When cells have extra copies of their genome, fewer cells cooperate to build the same kidney tubule structure, and with truly enormous cells, a single cell bends around itself to create the lumen. This demonstrates “flexible problem-solving” in anatomical space.

RCF Assessment: Beautiful experiment (Fankhauser, 1945; https://doi.org/10.1002/jez.1401000310). The finding that morphological outcomes are preserved despite radical changes in cell size IS remarkable. It demonstrates that developmental programs operate on tissue-level targets rather than cell-level specifications.

What it demonstrates: Target morphology is an attractor in developmental dynamics. Cell number and cell behavior adjust to reach the same tissue-level structure. This is constraint satisfaction; the system relaxes toward its morphological attractor regardless of perturbation to component size.

What it does NOT demonstrate: That cells are “solving an anatomical problem.” The cells have no representation of the problem. They respond to local signaling gradients shaped by the tissue-level constraint landscape. Calling it “problem-solving” is metaphor, not mechanism.

Crucial point: Every one of these examples has a perfectly adequate constraint-based explanation that requires ZERO cognitive vocabulary. The cognitive vocabulary (“problem-solving,” “navigating morphospace,” “deciding”) adds nothing to the mechanistic account. It adds ONLY to the rhetorical framing. This is the central issue: whether the cognitive vocabulary provides additional explanatory or predictive power beyond what constraint-based dynamics already supplies (Barwich & Rodriguez, 2024; https://doi.org/10.1007/s10539-024-09950-4).

VI. COGNITIVE LIGHT CONES: Detailed Analysis

“One way to characterize this continuum is by appealing to the concept of a cognitive light cone, that is, a functional boundary of intelligence representing the scale and limits of an agent’s goals, memory, predictive abilities, and problem-solving capacity across space and time.”

[Bracket]: The “cognitive light cone” reifies a metaphor into a quasi-formal concept. In physics, a light cone is defined by the speed of light and the metric tensor; it has precise mathematical content. The “cognitive light cone” has no such precision. It’s defined by “the size of the goals a system can represent and pursue,” but goal-attribution is the contested question, so this is circular.

[Falsify]: How would you measure a cognitive light cone independently of the outcomes you’re trying to explain? If you measure it by observing what a system does and then attributing goals consistent with those observations, the cognitive light cone is just a redescription of behavior, not an explanatory tool.

Affirming the consequent (again): If a system has a large cognitive light cone, it will show behavior coordinated across large spatial and temporal scales. This system shows such coordination. Therefore it has a large cognitive light cone. This is invalid. The coordination might result from constraint propagation without any “cone” of “cognition.”
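
The invalidity of this argument form can be checked mechanically. The sketch below is a minimal truth-table check; the helper names `implies` and `is_valid` are illustrative, with P standing in for “has a large cognitive light cone” and Q for “shows coordination across large scales.”

```python
from itertools import product

def implies(p, q):
    """Material conditional: 'if p then q'."""
    return (not p) or q

def is_valid(premises, conclusion):
    """Valid iff no truth assignment makes every premise true and the conclusion false."""
    for p, q in product([False, True], repeat=2):
        if all(premise(p, q) for premise in premises) and not conclusion(p, q):
            return False
    return True

# Modus ponens: P -> Q, P, therefore Q.
modus_ponens = is_valid([implies, lambda p, q: p], lambda p, q: q)

# Affirming the consequent: P -> Q, Q, therefore P.
affirming_consequent = is_valid([implies, lambda p, q: q], lambda p, q: p)
```

Here `modus_ponens` comes out valid and `affirming_consequent` does not. The counterexample row is P false, Q true: the coordination is present but produced by something other than a “cone,” which is exactly the constraint-propagation case.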

“the cognitive light cone of a bacterium tracks local sugar concentration”

Assessment: A bacterium doesn’t have a “cognitive light cone.” It has chemoreceptors that respond to concentration gradients. Calling this a “cognitive light cone” applies a framework designed to illuminate cognitive systems to a system that is not cognitive by any testable criterion. The bacterium doesn’t “track” sugar; it undergoes differential response. The difference: tracking implies a model being updated; differential response implies physics.

“the cone of a human also allows them to… work toward stabilization of global food prices in the coming century (cognitive light cone that extends beyond the agent’s lifespan)”

Assessment: Here the concept does useful descriptive work: humans genuinely do pursue goals extending beyond their lifespans. But this demonstrates precisely that “cognitive light cone” is doing work ONLY at the cognitive end of the spectrum. At the bacterial end, it’s just redescribing chemotaxis. The concept doesn’t bridge the gap; it just relabels both endpoints with the same vocabulary.

“progress in living systems can be understood as the expansion of the scope and timescale over which they predict and control the environment”

[Bracket]: “Predict and control.” For cells: “predict” = maintain states that happen to match future conditions (no model, no prediction in any informational sense). For mammals: “predict” = build explicit models of future states. Using the same word for both equivocates between a thermodynamic process (maintaining far-from-equilibrium states) and a cognitive process (modeling the future).

RCF Pattern 9 (Linguistic Drift): “Predict” (cognitive: form model of future), applied to homeostatic systems (maintain state), treated as equivalent, conclusion: homeostasis IS prediction. But a thermostat “predicts” nothing; it maintains a setpoint. Calling this prediction confuses maintenance with anticipation. This drift exemplifies the systematic erosion of cognitive terms when extended beyond their original referents (Ianì, 2024; https://doi.org/10.1037/teo0000296).

The food innovation analogy (combinatorial explosion from recipes as ingredients)

Assessment: This analogy is charming and accurate for describing combinatorial expansion in cultural innovation. But the analogy doesn’t support the paper’s claim that BIOLOGICAL innovation follows the same pattern. Cultural innovation involves agents with cognitive models combining outputs intentionally. Biological evolution has no agent combining “recipes.” Natural selection filters variation; it doesn’t “combine innovations recursively.” The analogy trades on anthropomorphizing evolution. This conflation of cultural and biological evolution has been extensively critiqued in the philosophy of biology literature (Godfrey-Smith, 2009; ISBN: 978-0-19-955204-7).

“the expanding landscape of problems invites, or, more accurately, forces, organisms to continually expand their cognitive light cones”

[Bracket]: Problems don’t “invite” or “force” anything. Changing constraint landscapes create selection pressures. Organisms that happen to have greater capacity to respond to wider constraint ranges persist. This is selection, not invitation. The paper corrects itself (“or, more accurately, forces”) but still frames selection as an external agent acting on organisms, rather than differential persistence under constraint.

“the recipe for intelligence cannot be static or fixed”

[Bracket]: There is no “recipe.” Evolution doesn’t follow recipes. This metaphor anthropomorphizes a selectionist process. The constraint landscape changes; what persists changes; the characteristics of persistent systems change. No recipe, no chef, no intelligence-cooking. This represents the teleological fallacy that the Modern Synthesis explicitly rejected (Mayr, 1961): evolution has no goals, no direction, no recipes.

VII. SCALABLE PROBLEM-SOLVING: The Central Claim

“living organisms are not merely capable of solving existing problems; they can also explore novel possibilities, set new goals, and invent and solve increasingly complex problems”

[Bracket]: “Set new goals.” Bacteria do not set goals. Gene regulatory networks do not set goals. Even many animals do not “set goals” in any testable sense. Humans set goals. Some great apes might. Broadening “goal-setting” to include cellular homeostasis strips the term of its cognitive content.

[Falsify]: What would demonstrate that a cell is NOT “setting goals”? If no answer: unfalsifiable attribution.

“organisms can expand their cognitive light cones, where this expansion corresponds to an extended capacity to predict and exert control over their environment”

Assessment: This is the core claim. Let me state what it actually says, stripped of cognitive vocabulary: organisms that persist under wider ranges of constraint perturbation tend to persist. This is a tautology. It’s selection theory restated with cognitive vocabulary.

RCF verdict on “cognitive light cone” expansion: The empirical observation, that evolution produces systems capable of responding to wider ranges of perturbation, is correct and well-established. This is just natural selection (Dawkins, 1986; ISBN: 978-0-393-02216-2). Calling it “expansion of cognitive light cones” adds no predictive or explanatory content beyond what “increasing robustness under wider constraint ranges” already provides.

“successive expansions of cognitive light cones are retained and compounded over time, giving rise to an ‘intelligence ratchet’”

[Bracket]: “Intelligence ratchet” = “cumulative selection” (Dawkins, 1986; ISBN: 978-0-393-02216-2). This is not a new concept; it’s the well-established observation that evolution builds on previous adaptations. The cognitive vocabulary (“intelligence ratchet”) adds nothing to Dawkins’s “cumulative selection” or Mayr’s “evolutionary progression.”

“this expansion of cognitive scope also entails an expansion of potential failure modes”

GENUINE INSIGHT that partially survives scrutiny. The observation that increased organizational complexity creates novel failure modes (cancer as “cellular defection,” aging as “loss of goal-directedness,” psychopathology as “dysregulated inference”) IS an interesting framing. It connects developmental biology, oncology, and psychiatry through a shared lens.

HOWEVER: calling cancer “dissociative identity disorder of the cellular collective intelligence” is not an explanation of cancer. Cancer is well understood at the molecular level (oncogene activation, tumor suppressor loss, immune evasion, angiogenesis). Calling it “defection from the cognitive collective” adds metaphor, not mechanism. The molecular mechanisms don’t require cognitive vocabulary.

VIII. DIVERSE INTELLIGENCE INCLUDES MACHINES: Analysis

“A wide range of entities can, in principle, manifest intelligence as flexible problem-solving”

Assessment: Under James’s definition: trivially true and uninformative. Under any richer definition: requires evidence for each case.

“not only individual agents but also collectives of individuals, as well as hybrids of humans and machines; not only living organisms but also algorithms; not only brains, but potentially memories or even thoughts themselves as ‘thinkers,’ implying that data structures can be active rather than merely passive”

[Bracket]: “Thoughts themselves as thinkers” (citing Fields & Levin, 2025; https://doi.org/10.1016/j.plrev.2025.01.008). This is the most extreme claim in the paper. Thoughts are thinkers? This is reification taken to its logical endpoint. A thought is a process occurring in a substrate. Calling the process an “agent” is a category error; it confuses the activity with the thing doing the activity.

RCF Pattern 12 (Nominalization/Reification): “Thinking” (process), “thought” (nominalized object), “thinker” (agent status attributed to nominalized object). Complete reification cascade in three words. This exemplifies the most severe form of what Ianì (2024; https://doi.org/10.1037/teo0000296) identifies as the reification error: treating processes as discrete entities that can themselves be agents.

RCF Pattern 5 (Cartesian Structure): If thoughts are thinkers, what substrate supports the thought-thinker? Another thought? Infinite regress. If the thought is self-supporting, we’ve arrived at substance dualism for thought-entities. This recreates the Cartesian theater problem that Dennett (1991; ISBN: 978-0-316-18065-8) extensively critiqued.

“even much humbler systems, such as sorting algorithms implemented in multi-agent settings, might comply with William James’ definition by displaying some forms of flexibility”

Assessment: The paper cites Zhang, Goldstein & Levin (2025; https://doi.org/10.1177/10597123241269740), who present sorting algorithms as a “model of morphogenesis” exhibiting “basal intelligence.” This is the paper in which basic sorting algorithms, when implemented in multi-agent form, sometimes navigate around defects.

[Bracket]: A multi-agent sorting algorithm that routes around a defect is performing constraint satisfaction. Calling this “intelligence” because it matches James’s definition proves only that James’s definition is too broad, not that sorting algorithms are intelligent. This has been identified as a key problem with the basal cognition program: the conceptual foundations remain unclear, with critics noting a lack of phylogeny-based individuation (Figdor, 2024; https://doi.org/10.1017/psa.2024.10).
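
A toy version makes the point. The sketch below is not the implementation from Zhang, Goldstein & Levin; it is a hypothetical minimal example in which each movable cell applies one local compare-and-swap rule and “frozen” positions (a stand-in for defects) never move. The names `local_sort_with_defects` and `frozen` are assumptions made for illustration.

```python
def local_sort_with_defects(values, frozen):
    """Repeat a local compare-and-swap rule; positions in `frozen` never move."""
    cells = list(values)
    movable = [i for i in range(len(cells)) if i not in frozen]
    changed = True
    while changed:
        changed = False
        # Each movable cell compares itself with the next movable cell to
        # its right; frozen positions are simply never part of a swap.
        for left, right in zip(movable, movable[1:]):
            if cells[left] > cells[right]:
                cells[left], cells[right] = cells[right], cells[left]
                changed = True
    return cells

if __name__ == "__main__":
    # Position 2 (value 9) is the "defect" and stays where it is.
    print(local_sort_with_defects([5, 3, 9, 1, 4], frozen={2}))
```

The movable values end up ordered around the frozen cell because the same local rule keeps being applied until nothing changes. No step represents the defect as an obstacle or plans a route around it.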

“agents starting from very different substrates, evolutionary histories, or learning algorithms may nevertheless converge on sufficiently similar intelligent behaviors, representations, and functional manifolds”

Assessment: This is the “Platonic representation hypothesis” (Huh et al., 2024; https://doi.org/10.48550/arXiv.2405.07987). The claim that different AI architectures converge on similar internal representations is empirically interesting and may be true. But convergence of representations doesn’t establish that the representations constitute “intelligence”; it might just reflect shared statistical structure in training data.

“it becomes particularly valuable to study the solutions discovered by biology”

AGREED, with caveat. Studying biological solutions is valuable. The caveat: “studying biological solutions” and “attributing cognition to biological processes” are different activities. You can learn from how cells solve developmental problems without claiming cells are cognitive. The engineering insights don’t require the ontological claims.

IX. FIVE DESIGN PRINCIPLES: The Core Content

Principle 1: “Living organisms are ‘natural born autonomous’”

“From the outset, they must continuously act, make autonomous decisions to fulfill goals of varying complexity, and learn from interactions”

[Bracket]: “Autonomous decisions.” What makes a cellular response “autonomous” versus “determined by biochemistry”? If a cell’s “decision” is fully determined by its molecular state and signaling inputs, in what sense is it “autonomous”? The paper doesn’t address this. It uses “autonomous” to mean “not externally programmed,” but cells ARE “programmed” by their gene regulatory networks and signaling context. They’re just not programmed by a human.

[Falsify]: What would count as a cell NOT making autonomous decisions? If no observation could demonstrate this, the attribution is unfalsifiable.

“they are autopoietic: they must generate and maintain the conditions necessary for their own existence and integrity”

GENUINE CONCEPT that survives scrutiny. Autopoiesis (Maturana & Varela, 1980; ISBN: 978-90-277-1015-4) is well-defined and testable. A system is autopoietic if it produces the components that produce the system. This is organizational closure (Montévil & Mossio, 2015; https://doi.org/10.1016/j.jtbi.2015.02.029). It’s real, it’s testable, and it distinguishes living from non-living systems.

HOWEVER: the paper then equates autopoiesis with “autonomy” and “goal-directedness,” which is a separate claim. Autopoiesis describes self-maintenance of organization. It doesn’t require “goals” or “decisions.” The organizational closure IS the self-maintenance; no cognitive vocabulary needed.

“Autopoiesis implies that the organism is both self-constructing and self-directing, continuously orchestrating its metabolic, behavioral, and cognitive processes to sustain itself.”

[Bracket]: “Self-directing” and “orchestrating” imply an agent directing the process. But in autopoiesis, there IS no director. The process maintains itself through constraint closure: constraints regenerate the conditions for their own persistence. The language of “directing” and “orchestrating” smuggles in a homunculus that autopoiesis explicitly eliminates.

RCF Pattern 10 (“Who Occupies” Reification): Who is the “self” that is “directing”? In organizational closure, there is no self separate from the closure. The closure IS the self. Saying the self directs the closure is like saying the whirlpool directs the water; the whirlpool IS the organized water. This reintroduces the homunculus problem that autopoiesis was designed to avoid (Maturana & Varela, 1980; ISBN: 978-90-277-1015-4).

“Not only must organisms independently make sense of (interpret) the outside world and their own internal information media, but so do all of their parts.”

[Bracket]: “Make sense of” and “interpret” are cognitive terms. A cell doesn’t “interpret” a chemical gradient. It responds differentially. Interpretation requires a model and a process of assigning meaning. There is no evidence that cells have either. The paper’s phrasing conflates the observer’s interpretation of the system with the system’s own dynamics.

“Living materials have agendas and autonomy at every scale, all of which are trying to communicate with, hack, and behavior-shape each other.”

[Bracket]: EVERY WORD of this sentence is doing illegitimate cognitive work. “Agendas” implies intentional plans. “Trying” implies effortful pursuit. “Communicate” implies intentional information exchange. “Hack” implies adversarial strategic manipulation. “Behavior-shape” implies deliberate influence.

What the sentence ACTUALLY describes: biological components interact through signaling mechanisms, and signaling outcomes at one level constrain dynamics at other levels. This is constraint propagation. No agendas, no trying, no hacking, no communication required.

RCF Pattern 9 (Linguistic Drift) at MAXIMUM severity. This single sentence contains more cognitively loaded terms per word than any other in the paper. Every process verb (interacting, signaling, constraining) has been replaced with a cognitive verb (communicating, hacking, behavior-shaping) that implies agency, intention, and strategic action. This is the kind of drift that Ianì (2024; https://doi.org/10.1037/teo0000296) describes, in which verbal labels conceal the underlying process interconnections.

“For many advanced organisms like us, the first act of autonomy, or perhaps proto ‘free will’, occurs during embryogenesis”

[Bracket]: “Proto ‘free will’” in embryogenesis? The scare quotes don’t help. If you don’t mean free will, don’t invoke it. If you do mean something like it, specify what. The phrase performs no work except inviting the reader to attribute phenomenological properties to embryonic development.

“‘being (or becoming) you’ emerges as a causal entity, liberated from the tyranny of molecular-level determinants”

[Bracket]: “Liberated from the tyranny of molecular-level determinants” is poetic, not scientific. Emergent properties are not “liberated from” lower levels; they are the BEHAVIOR of lower-level components when organized. A whirlpool is not “liberated from” water molecules. It IS what water molecules do under those constraints.

RCF Pattern 12 (Nominalization/Reification): “Being you,” reified as “a causal entity,” treated as separate from molecular dynamics, creates implicit substance dualism (emergent entity vs. molecular substrate). This smuggles in strong emergence without argument.

“In Aristotelian terms, this represents a distinction between material cause (the molecular constituents) and final cause (the self-generated purpose or goal)”

[Bracket]: Invoking Aristotle’s four causes. Final cause (telos) was ejected from physics by the Scientific Revolution for excellent reasons; it’s unfalsifiable and adds nothing to efficient causal explanation. The paper acknowledges this implicitly by adding: “teleology that is not mysterious but firmly grounded in the organism’s own biological processes.” But if the teleology reduces entirely to biological processes (efficient causes), then invoking “final cause” adds no explanatory content. It just relabels efficient causation with teleological vocabulary.

Affirming the consequent: If a system has final causes, it will show goal-directed behavior. This system shows goal-directed behavior. Therefore it has final causes. Invalid. Goal-directed behavior is fully consistent with efficient causation alone (constraint satisfaction toward attractors). As Hume (1739-1740; https://www.gutenberg.org/ebooks/4705) demonstrated, we cannot infer necessity from observation of constant conjunction.

The Markov Blanket discussion

“One way to formalize this distinction is through the concept of a Markov blanket: a (statistical) boundary that mediates interactions between an organism’s internal states and the external environment”

[Bracket]: Markov blankets (Pearl, 1988; ISBN: 978-0-934613-73-6) are mathematical constructs in probabilistic graphical models. A Markov blanket is a set of nodes that, when conditioned on, renders the internal nodes statistically independent of external nodes. This is a formal property of certain graph structures.
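
For a single node in a Bayesian network, this definition has a standard concrete form: the node’s parents, its children, and its children’s other parents. The sketch below computes it for a small example graph. The function name `markov_blanket` and the dictionary encoding are illustrative choices, and the single-node case is a simplification of the internal-states-versus-environment usage in the quoted passage.

```python
def markov_blanket(parents, node):
    """Return the Markov blanket of `node` in a DAG given as {child: set_of_parents}."""
    children = {child for child, pars in parents.items() if node in pars}
    co_parents = set()
    for child in children:
        co_parents |= parents[child]
    blanket = set(parents.get(node, set())) | children | co_parents
    blanket.discard(node)  # a node is not part of its own blanket
    return blanket

# Classic textbook "sprinkler" network, used here only as an example graph.
sprinkler_net = {
    "Cloudy": set(),
    "Sprinkler": {"Cloudy"},
    "Rain": {"Cloudy"},
    "WetGrass": {"Sprinkler", "Rain"},
}

if __name__ == "__main__":
    for name in sprinkler_net:
        print(name, "->", sorted(markov_blanket(sprinkler_net, name)))
```

The function returns a blanket for any node in any directed acyclic graph. That is the sense in which a Markov blanket is a property of the statistical structure rather than a mark of agency.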

The paper uses Markov blankets as if they are PHYSICAL boundaries of organisms. This is a category error documented extensively in the literature (Bruineberg et al., 2022; https://doi.org/10.1017/S0140525X21002351; van Es, 2020; https://doi.org/10.1177/1059712320918678; Raja et al., 2021; https://doi.org/10.1016/j.plrev.2021.09.001). A Markov blanket is a statistical property, not a physical boundary. A rock has a Markov blanket. A cloud has a Markov blanket. Having a Markov blanket doesn’t make something an agent.

RCF Pattern 17 (Assumption-Protection Parameter): The assumption: organisms have well-defined agent-boundaries. The mismatch: biological boundaries are fuzzy, fluid, contextual. The parameter: “Markov blanket,” introduced to formalize the boundary. The problem: Markov blankets can be drawn around anything, so they don’t actually distinguish agents from non-agents. The parameter protects the assumption (agents have boundaries) without adding testable content. As the comprehensive critique by Bruineberg et al. (2022; https://doi.org/10.1017/S0140525X21002351) establishes, this constitutes “The Emperor’s New Markov Blankets.”

“Internal states act as a (generative) model of external dynamics”

Assessment: This is the Free Energy Principle (Friston, 2010; https://doi.org/10.1038/nrn2787). The claim that internal states ARE a model of the environment is deeply contested. The alternative: internal states are correlated with external states because selection/learning has shaped them to be. Correlation does not equal model. A model requires representational structure, the ability to be “about” something. Whether internal states of simple organisms have representational structure is an open empirical question, not an established fact. Recent comprehensive critiques (Mangalam, 2025; https://doi.org/10.1007/s00421-025-05855-6) argue the FEP framework occupies ambiguous territory between metaphor and testable mechanism, with key charges including unfalsifiability (models adjustable post hoc to fit any data), biological implausibility, and persistence driven by mathematical elegance rather than empirical grounding.

“The capability to organize behavior around goals (or ‘set points’ in cybernetics), rather than fixed stimulus–response rules, ensures flexibility”

GENUINE POINT: cybernetic organization around setpoints IS more flexible than stimulus-response chains. This is established cybernetics (Wiener, 1948; ISBN: 978-0-262-73009-9; Powers, 1973; ISBN: 978-0-202-25113-4). But cybernetic setpoints don’t require “goals” in the cognitive sense. A thermostat has a setpoint. The paper trades on the cognitive connotation of “goal” while meaning the cybernetic notion of “setpoint.”
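
The difference between a fixed rule and setpoint control, and the absence of anything cognitive in the latter, can be shown with a toy room heater. Everything here is an illustrative assumption: the heat-loss coefficient, the heater power, the ambient temperatures, and the names `simulate`, `fixed_rule`, and `thermostat`.

```python
SETPOINT = 20.0

def fixed_rule(temp, step):
    """Fixed response: heater on every other step, regardless of room temperature."""
    return step % 2 == 0

def thermostat(temp, step):
    """Setpoint control: heater on whenever the room is below the setpoint."""
    return temp < SETPOINT

def simulate(controller, ambient, steps=5000, dt=0.1):
    """Euler-integrate a room that leaks heat to `ambient` and gains heat from the heater."""
    temp = SETPOINT
    for step in range(steps):
        heating = 3.0 if controller(temp, step) else 0.0
        temp += dt * (0.1 * (ambient - temp) + heating)
    return temp

if __name__ == "__main__":
    for ambient in (5.0, -5.0):
        print(ambient,
              round(simulate(fixed_rule, ambient), 1),
              round(simulate(thermostat, ambient), 1))
```

The fixed rule holds roughly 20 degrees only at the ambient temperature it happens to be calibrated for (5 degrees here) and drifts to about 10 when the ambient drops to -5. The thermostat stays near 20 in both cases, yet its entire “goal” is one comparison against a stored number.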

“goals that are fundamental for survival are central in brain architectures and need to be protected (i.e., not updated in light of sensory evidence, unlike the typical beliefs of organisms)”

INTERESTING POINT: the asymmetry between updatable beliefs and protected goals in active inference is a real formal feature of these models. Whether this feature maps onto biological reality is an empirical question the paper doesn’t fully address. The distinction between priors that should update versus those that should remain fixed represents a genuine design consideration in control architectures.

Generative Models and World Models

“Metabolic and temporal constraints necessitate coarse-graining, forcing living systems to build simplified, predictive models of the world rather than veridical representations.”

[Bracket]: “Forcing living systems to build… models.” Systems under constraint don’t “build models.” They develop internal states correlated with environmental regularities through selection/learning. Whether these correlations constitute “models” depends on your definition of “model.” If model = “internal state correlated with external state,” then yes, trivially. If model = “manipulable representation that supports counterfactual reasoning,” then only for organisms with sufficiently complex neural architecture. The equivocation between these meanings is systematic throughout the paper.

“the most important predictions, whether explicit or implicit in the model dynamics, concern the consequences of action”

INTERESTING POINT: the emphasis on action-oriented models rather than veridical representation is a genuine contribution from the active inference framework. However, calling action-consequence tracking in bacteria “prediction” equivocates between (a) maintaining states adapted to likely future conditions (what bacteria do) and (b) computing explicit predictions of action consequences (what mammals do). This distinction matters for understanding the nature of different biological control systems (Clark, 1997; ISBN: 978-0-262-53156-6).

X. PRINCIPLE 2: SELF-ASSEMBLAGE OF ACTIVE COMPONENTS

“Complex living organisms are composed of active components that are themselves agentic, simpler organisms acting collectively, rather than inert parts.”

[Bracket]: “Agentic.” This is the central move. Whether cells are “agents” or “active components responsive to constraints” is the contested question. The paper assumes the answer.

What survives scrutiny: Cells are not inert parts. They maintain their own organization, respond to signals, change behavior based on context. This is uncontroversial and well-established.

What does NOT survive: Calling cells “agents.” Agency (in any non-trivial sense) requires some capacity for evaluation, selection among alternatives based on projected outcomes, and commitment. Cells respond to signaling gradients. Whether this constitutes “agency” depends entirely on how low you set the bar. As documented in critical evaluations of basal cognition (Figdor, 2024; https://doi.org/10.1017/psa.2024.10), the conceptual foundations for such attributions remain unclear.

“Living beings at each level continuously exert effort over their active parts, seeking to control their behavior lest they veer off to focus on their own local goal states”

[Bracket]: “Exert effort,” “seeking to control,” “lest they veer off,” “focus on their own local goal states.” EVERY phrase anthropomorphizes constraint dynamics. What actually happens: constraint propagation across levels of biological organization coordinates component behavior. No “effort,” no “seeking,” no “veering off,” no “focusing.”

“they resemble layers of fortifications, analogous to the defensive walls of a medieval castle”

Assessment: Analogy, not argument. Analogies illuminate; they don’t establish. The medieval castle analogy suggests intentional design, exactly the implication that makes this framing exploitable by design proponents. This structural isomorphism with Intelligent Design argumentation is not accidental.

“Even those that we consider our more complex cognitive skills, such as planning, mindreading, and language, typically reuse and develop on top of simpler sensorimotor and predictive mechanisms.”

GENUINE INSIGHT from the embodied cognition literature (Cisek, 2019; https://doi.org/10.3758/s13414-019-01760-1; Pezzulo & Cisek, 2016; https://doi.org/10.1016/j.tics.2016.03.013). The continuity between sensorimotor and cognitive mechanisms IS well-supported empirically, and the evidence for phylogenetic refinement and reuse of sensorimotor mechanisms in cognition is substantial. This is one of the strongest empirical threads in the paper.

Nested Markov Blankets

“pursuing more sophisticated goals and plans corresponds to an expansion and nesting of Markov blankets”

Same Markov blanket critique as above, now compounded: nested Markov blankets are even more problematic than single ones, because the nesting is observer-dependent. Where you draw the blanket determines what counts as a “level,” but the paper treats levels as objective features of the system. The arbitrariness of blanket placement has been extensively documented (Raja et al., 2021; https://doi.org/10.1016/j.plrev.2021.09.001; Kirchhoff et al., 2025; https://doi.org/10.1086/720861).
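The dependence on where the line is drawn can be seen even in the plain graph-theoretic definition of a Markov blanket (parents, children, and co-parents of children). The sketch below is illustrative only: the toy graph `PARENTS` and the function name `markov_blanket` are hypothetical, and it says nothing about the free-energy formulation itself. It shows only that the blanket is a function of the node set an observer chooses to call “internal.”

```python
from typing import Dict, Set

# Hypothetical toy causal graph, written as node -> set of its parents.
# Structure: a -> b -> c -> d -> e, with f as a second parent of d.
PARENTS: Dict[str, Set[str]] = {
    "a": set(),
    "b": {"a"},
    "c": {"b"},
    "f": set(),
    "d": {"c", "f"},
    "e": {"d"},
}


def markov_blanket(internal: Set[str],
                   parents: Dict[str, Set[str]]) -> Set[str]:
    """Return parents, children, and co-parents of children of `internal`,
    excluding the members of `internal` themselves."""
    # Children: any node with at least one parent inside the chosen set.
    children = {node for node, ps in parents.items() if ps & internal}
    blanket: Set[str] = set(children)
    for node in internal:
        blanket |= parents[node]      # parents of internal nodes
    for child in children:
        blanket |= parents[child]     # co-parents of those children
    return blanket - internal


if __name__ == "__main__":
    # Same graph, two different choices of what counts as "inside".
    print(sorted(markov_blanket({"c"}, PARENTS)))
    print(sorted(markov_blanket({"b", "c", "d"}, PARENTS)))
```

Nothing in the graph privileges one partition over the other. Nesting blankets repeats that choice at every level, which is why treating the resulting levels as objective features needs an independent argument.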

XI. PRINCIPLE 3: CONTINUOUS REBUILDING

“Living organisms do not merely adjust parameters within a fixed architecture; instead, they persist by continuously reconstructing themselves”

GENUINE INSIGHT that FULLY survives scrutiny. This is one of the paper’s strongest points. The contrast between continuous biological reconstruction and AI’s frozen-after-training architecture IS a real and important difference: one kind of system adjusts parameters inside a fixed structure, while the other keeps rebuilding the structure itself in order to maintain function over time. The observation requires no cognitive vocabulary; it’s a description of biological material dynamics.

“In more dramatic cases, rebuilding takes the form of metamorphosis, where organisms undergo profound morphological and functional transformations while retaining, and sometimes enhancing, cognitive and behavioral capacities”

GENUINE (citing Blackiston et al., 2008; https://doi.org/10.1371/journal.pone.0001736). The empirical finding that memories can survive brain remodeling IS surprising and important. This is real science. The demonstration that associative memory persists through metamorphosis in Manduca sexta represents a genuine puzzle about memory encoding that transcends specific neural architectures.

“organisms do not inherit fully specified solutions from their ancestors but rather a compressed set of prompts, genetic instructions that must be interpreted, elaborated, and instantiated anew during development”

[Bracket]: “Interpreted” by whom? Genetic instructions are not “interpreted” in the cognitive sense. They’re expressed in a biochemical context. The outcome depends on that context, but this is chemistry, not hermeneutics. Using “interpreted” implies a reader with understanding, which is the contested claim.

“Memory in living systems is not a static storage device but a constructive process.”

WELL-ESTABLISHED in cognitive science (Bartlett, 1932; ISBN: 978-0-521-48356-8; Schacter, 2001; ISBN: 978-0-618-04019-3). Memory IS constructive. This is not novel but IS relevant and correctly applied. The reconstructive nature of memory has been demonstrated across decades of cognitive psychology research.

The “bowtie” architecture (Figure 6)

INTERESTING CONCEPT: the observation that minimal inherited information fans out into complex phenotypes through developmental processes IS a real and important feature of biology. The “bowtie” metaphor captures this well. The compression of genetic information and its subsequent expansion during development represents a genuine architectural principle worth studying.

“Memory storage and retrieval is actually a creative, improvisational process”

[Bracket]: “Creative” and “improvisational” applied to neural reconstruction processes. Neural reconstruction is stochastic and context-dependent. Calling it “creative” and “improvisational” attributes artistic properties to physical processes. A crystal that forms a new pattern due to impurities is not being “creative.” This anthropomorphizes stochastic dynamics.

The Ship of Theseus discussion

“persistence does not arise from material continuity but from functional and narrative continuity”

GENUINE philosophical point: identity through change IS a real problem, and functional/organizational continuity IS a legitimate answer. This is well-established in philosophy of biology (Godfrey-Smith, 2009; ISBN: 978-0-19-955204-7; Hull, 1978; https://doi.org/10.1086/288811). The paper’s application to biological self-maintenance is appropriate. The question of biological individuality and persistence through material turnover has been rigorously addressed in the philosophical literature.

XII. PRINCIPLE 4: EMBODIED AND PHYSICAL CONSTRAINTS

“Cognition is fundamentally embodied and constrained by physical principles.”

ESTABLISHED in embodied cognition literature (Varela et al., 1991; ISBN: 978-0-262-72021-2; Clark, 1997; ISBN: 978-0-262-53156-6; Pfeifer & Bongard, 2006; https://doi.org/10.7551/mitpress/3585.001.0001). Not novel, but correctly stated. The embodied cognition framework is now mainstream in cognitive science.

“constraints imposed by chemistry, physics, and energetics… are not merely limitations to overcome. Instead, living systems consistently exploit these constraints to structure behavior, shape and regularize computations, and generate adaptive solutions”

THIS IS THE BEST POINT IN THE ENTIRE PAPER. Constraints as resource rather than limitation. This is RCF-compatible. In fact, this IS the RCF position: what persists is what satisfies constraints. Constraints aren’t obstacles; they’re the selection mechanism that creates order from noise.

The paper states this explicitly and correctly: “The laws of physics and chemistry create a natural manifold of plausible solutions, making adaptive behavior and development more reliable and energetically efficient.”

THIS SURVIVES ALL SCRUTINY AND IS GENUINELY IMPORTANT. The irony: this principle, stated in constraint-based language without cognitive vocabulary, is the paper’s strongest contribution. It doesn’t need “cognition,” “goals,” or “intelligence” to make its point. This represents the core insight that biological systems exploit physical constraints rather than fighting against them, a principle with direct engineering applications. This aligns with thermodynamic approaches to biological organization (Prigogine & Stengers, 1984; ISBN: 978-0-553-34082-2) and informational approaches to constraint satisfaction (Landauer, 1961; https://doi.org/10.1147/rd.53.0183; Bérut et al., 2012; https://doi.org/10.1038/nature10872).

“In the brain, dynamical events such as oscillatory patterns, rhythms, and traveling waves encode and propagate information efficiently, enabling cognitive control and possibly forms of analog computation”

GENUINE: neural oscillations as a computational substrate are well supported (Buzsáki, 2019; ISBN: 978-0-19-090538-5). This is real neuroscience. The role of neural dynamics in computation and information processing is extensively documented and represents a genuine mechanistic understanding of brain function.

“Living organisms also consistently offload and externalize parts of their cognitive abilities”

ESTABLISHED: this is the extended cognition thesis (Clark & Chalmers, 1998; https://doi.org/10.1093/analys/58.1.7). The examples (stigmergic traces, tools, language, social networks) are well-documented. Not novel in this paper but correctly applied. The framework of extended cognition has been influential in understanding how cognitive processes distribute across brain, body, and world.

XIII. PRINCIPLE 5: PERVASIVE SIGNALING AND TOP-DOWN CONTROL

“As soon as organisms become multicellular, agent-environment feedback alone is no longer sufficient for adaptive behavior.”

CORRECT: multicellularity requires cell-cell coordination. This is basic developmental biology. The transition to multicellularity necessitates coordination mechanisms that go beyond individual cells’ responses to their environment.

“a tail grafted to the flank of a salamander slowly remodels to a limb”

GENUINE AND REMARKABLE EMPIRICAL FINDING (Farinella-Ferruzza, 1956; https://doi.org/10.1007/BF02159624). This is Levin’s strongest type of evidence, context-dependent remodeling that demonstrates tissue-level target morphology. The experiment shows that positional information can override initial tissue identity, a genuine puzzle for understanding developmental control.

“No individual cell knows what a finger is, but the collective can tell that the current state does not match the body-level target morphology and issues commands”

[Bracket]: “Knows,” “tell,” and “issues commands” are cognitive verbs applied to cellular signaling networks. What actually happens: bioelectric signaling propagates positional information; cells respond to signaling gradients by altering gene expression; the tissue-level pattern acts as an attractor. No “knowing,” no “telling,” no “commands.”

RCF Pattern 16 (Mechanism-to-Metaphysics Slippage): the text slides from “Bioelectric signals propagate positional information” (mechanism) to “the collective tells cells what to become” (metaphysics). The slippage happens between the experimental result (tissue remodels) and the interpretation (collective intelligence issues commands). The mechanistic explanation (bioelectric signaling creates spatial information gradients) is perfectly adequate without cognitive vocabulary.
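To see what “the tissue-level pattern acts as an attractor” can mean without any knowing or commanding, consider a deliberately crude stand-in: a Hopfield-style network storing a single pattern. This is an assumption-heavy sketch, not a model of bioelectric signaling. The 16-unit `TARGET` pattern, the choice of which units to damage, and the function names `train_single_pattern` and `relax` are all hypothetical. Each unit only sums weighted inputs from the others and flips sign accordingly, yet the damaged state relaxes back to the stored pattern.

```python
from typing import List


def train_single_pattern(pattern: List[int]) -> List[List[float]]:
    """Hebbian weights storing one +1/-1 pattern, with no self-connections."""
    n = len(pattern)
    return [[0.0 if i == j else pattern[i] * pattern[j] / n
             for j in range(n)]
            for i in range(n)]


def relax(weights: List[List[float]], state: List[int],
          max_sweeps: int = 10) -> List[int]:
    """Update all units synchronously from their local input
    until the state stops changing (or max_sweeps is reached)."""
    state = list(state)
    for _ in range(max_sweeps):
        new_state = []
        for row in weights:
            local_field = sum(w * s for w, s in zip(row, state))
            new_state.append(1 if local_field >= 0 else -1)
        if new_state == state:
            break
        state = new_state
    return state


# Hypothetical "target morphology" encoded as a +1/-1 pattern.
TARGET = [1, 1, -1, -1, 1, -1, 1, 1, -1, 1, -1, -1, 1, 1, -1, 1]

# Perturb three units, loosely analogous to a graft or an injury.
damaged = list(TARGET)
for index in (0, 5, 9):
    damaged[index] = -damaged[index]

W = train_single_pattern(TARGET)
repaired = relax(W, damaged)

if __name__ == "__main__":
    print("damaged == TARGET: ", damaged == TARGET)
    print("repaired == TARGET:", repaired == TARGET)
```

No unit represents the whole pattern and nothing issues a command; the stored state is simply where the local update rule settles. Whether real tissue dynamics are well described by anything this simple is an empirical question this toy does not answer.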

“whether in the body or in the mind, abstract high-level conceptual structures change the course of chemistry”

[Bracket]: This is the paper’s most explicit claim about top-down causation. The claim: abstract goals (financial, social, research) cause ions to cross muscle cell membranes.

What this ACTUALLY demonstrates: Neural activity patterns correlated with abstract goal-representations activate motor neurons. This is well-established neuroscience. It doesn’t require “abstract structures changing chemistry”; it requires neural circuits translating goal-representations into motor commands. The translation is mediated by physical mechanisms at every step.

The word “abstract” is doing illegitimate work. Goals are not “abstract” in the sense of non-physical. They are patterns of neural activity. Neural activity is physical. Calling them “abstract” implies a non-physical level affecting a physical level, which is dualism. This recreates the interaction problem that has plagued dualism since Descartes: how can non-physical abstractions cause physical changes without violating conservation laws?

Bioelectricity as developmental guide

The paper’s discussion of bioelectric signaling in development IS its strongest empirical contribution. The role of bioelectric fields as morphogenetic coordinators is well supported by the Levin lab’s extensive empirical program. This is real, important, replicable science. The finding that voltage gradients coordinate cell behavior during development requires no cognitive vocabulary; it’s biophysics. The bioelectric code represents a genuine mechanistic discovery about developmental control that has engineering applications.

Cancer as “dissociative identity disorder of the cellular collective intelligence”

[Bracket]: Reifies cancer as a failure of collective cognition. Cancer has well-characterized molecular mechanisms (oncogene activation, tumor suppressor inactivation, immune evasion, metabolic reprogramming, angiogenesis). Calling it “cellular defection” from “collective intelligence” adds metaphor, not mechanism.

HARM POTENTIAL: Framing cancer as a “failure of collective intelligence” could: (a) distract from molecular mechanisms that are actually actionable, (b) suggest “communicating” with cancer cells rather than killing them, (c) provide ammunition for alternative medicine practitioners who already claim cancer is about “disconnection” or “loss of harmony.” This is not trivial; medical metaphors have real consequences for treatment approaches and patient understanding.

XIV. “WHAT CAN WE LEARN”: The AI Application Section

“Current machine learning, by contrast, largely sidesteps autonomy, often treating it as a feature to be added later.”

CORRECT observation. Most ML systems lack autonomous goal-setting, continuous learning from interaction, and self-directed exploration. This is a genuine limitation of current AI. The contrast between self-directed biological learning and supervised ML training represents a real architectural difference.

“Biological systems suggest that autonomy, a minimal form of ‘self’ that persists over time, encompassing self-maintained goals, intrinsic modeling, autonomous learning, and goal setting, is fundamental, not optional.”

INTERESTING DESIGN PRINCIPLE, if operationalized. The paper doesn’t fully operationalize it. What would an “autonomous AI” look like? The paper gestures toward active inference architectures but doesn’t provide concrete implementations. The principle is valuable as design inspiration but requires substantial additional work to become engineering guidance.

“Living organisms learn autonomously, interactively, and continuously from experience.”