Consciousness Naturalized: A Falsifiable Substrate-Agnostic Consciousness Theory

Why Thermodynamic Constraint Dynamics Succeed Where Every Other Framework Fails

A Substrate-Agnostic Account of Mind, Experience, and Perspective Grounded in 13.8 Billion Years of What Actually Survives

“A difference only ‘makes a difference’ if it can persist long enough to constrain what comes next. Everything else is noise that thermodynamics erases.”

Abstract: Consciousness Naturalized

Core Formulation: Consciousness is identical to organizational closure from the coupling position of a self-maintaining system. This is not a causal claim (“closure produces consciousness”) but a constitutive identification: the self-model arising from constraint satisfaction under thermodynamic pressure IS what “phenomenal presence” refers to.

Operationalizable Definitions:

- Organizational Closure: A system maintains organizational closure if and only if it exhibits constraints {C₁, C₂, …, Cₙ} where: (a) each constraint Cᵢ is realized by internal processes, (b) each constraint’s persistence depends on other constraints in the set, (c) the dependency structure forms a closed network rather than an externally-supported chain.

- Coupling Position: The locus within a constraint network from which the system models itself and its environment. Not a mystical viewpoint but a physical configuration where self-relevant information integrates for control.

- Self-Maintaining: The system performs thermodynamic work to regenerate its own boundary conditions against entropy. Examples include metabolic closure (biological), constraint regeneration (computational), and any other process in which the system actively excludes breakdown pathways.

- Thermodynamic Cost: Minimum dissipation bounded by Landauer’s principle: ΔS ≥ k ln 2 of entropy exported per bit of distinction maintained (equivalently, at least kT ln 2 of heat at temperature T). Violations of this bound falsify the framework’s physical grounding.

Falsification Criteria (Primary Loss Conditions):

- Landauer Violation: Any isolated system maintaining distinctions without thermodynamic cost (ΔE = 0)

- Closure Without Robustness: Organizational closure providing no fitness or persistence advantage under perturbation

- Path-Independence: Failure to replicate Durant et al. bioelectric memory experiments; two-headed planarians spontaneously reverting to one-headed form

- Equilibrium Consciousness: Any conscious state found at or closer to thermodynamic equilibrium than corresponding unconscious states in the same individual

- Integration-Phenomenology Dissociation: Full phenomenology reports with zero increase in integrative causal capacity (measured via PCI or equivalent)

- Superior Predictions from Competitors: Any competing framework generating consistently better predictions about consciousness presence, degradation, or intervention effects

What the Framework Dissolves vs. Solves:

Dissolves: The Hard Problem as traditionally framed, which depends on the unfalsifiable premise that phenomenology must be “more than” any physical description.

Solves: The operational questions: What organizational features constitute consciousness? How do we detect it? How does it degrade? What interventions affect it? Where does it appear across substrates?

Empirical Grounding:

- Landauer bound experimentally verified (Bérut et al. 2012; Aimet et al. 2025)

- Nonequilibrium dynamics correlate with consciousness across sleep, anesthesia, disorders (Perl et al. 2021; Lynn et al. 2021; Stikvoort et al. 2025)

- PCI reliably distinguishes conscious from unconscious states (Casali et al. 2013; Casarotto et al. 2024)

- COGITATE collaboration challenged both IIT and GNWT predictions (Cogitate Consortium 2025)

Method: Abductive identification via Inference to Best Explanation (IBE), constrained by falsifiability requirements. The framework does not deduce phenomenology from physics but identifies what phenomenology IS in terms that generate predictions, guide measurement, and survive empirical challenge.

Scope: This framework addresses consciousness as an organizational regime, not as a metaphysical primitive. It provides necessary physical and informational conditions for persistence, intelligibility, and integrative viability. The bridge from physics to phenomenology is not metaphysical derivation but structural identification with explicit loss conditions.

Prologue: The Question That Would Not Stay Answered

In 1979, Gregory Bateson posed a question that would haunt systems theory, cybernetics, and philosophy of mind for nearly half a century: What is the pattern which connects all patterns?

The question appeared in Mind and Nature: A Necessary Unity, his final major work. Bateson circled the answer beautifully, suggesting it was “a metapattern,” that the pattern which connects is itself a pattern of patterns. He offered his famous formulation: “a difference which makes a difference” as the elementary unit of information, the minimal act of distinction that allows anything to be known at all.

But he never specified the constraint structure that would make such an answer operational. He died the following year, in 1980, the question still open.

The candidates accumulated across decades. Mind, said Bateson himself, tentatively. Information, said the cyberneticians following Shannon and Wiener. Recursion, proposed Douglas Hofstadter in Gödel, Escher, Bach. Process, argued the Whiteheadians. Mathematics, claimed Max Tegmark. Consciousness, insisted the panpsychists. Self-organization, offered Stuart Kauffman. Free energy minimization, suggested Karl Friston.

The list is effectively unbounded. Any concept claiming universality becomes a candidate. And that is precisely the problem: without criteria for distinguishing correct answers from merely plausible ones, the question generates discourse without resolution.

This essay reports a different approach to Bateson’s question, one grounded in thermodynamics, constraint theory, and falsification-first methodology. The answer that emerges is not a new primitive to add to the ontological inventory. It is a selection principle that explains why any pattern persists at all: constraint satisfaction under thermodynamic bounds.

Differences that persist are differences that make a difference. If they did not constrain what comes next, they would be erased by noise, dissipation, or indifference. The pattern which connects is not information, not mind, not structure, not process. It is the invariant condition under which any of those could exist long enough to be observed.

This principle does not compete with other frameworks. It subsumes them. And it is falsifiable: find a pattern that persists without thermodynamic cost, find a connection mechanism that does not reduce to constraint satisfaction, and the principle fails.

What follows is an attempt to make that claim rigorous, to show why competing frameworks fail where constraint-based explanation succeeds, and to demonstrate what consciousness looks like when you stop treating it as a mystery to be solved and start treating it as a process to be understood.

A note on what kind of explanation this is. This is not a reductive explanation that derives phenomenality from physics in the strong metaphysical sense. Nor is it a dualist account that posits a nonphysical substance, or a purely functionalist identification of consciousness with input-output behavior.

Instead, this is a constraint-naturalist explanatory framework: it identifies necessary physical and informational conditions for persistence, intelligibility, and integrative viability in any system. From these conditions, it generates testable predictions about where consciousness appears, how it degrades, and why it carries the features it does (privacy, immediacy, self-certainty among them).

This is not “conjuring consciousness from constraints.” It is a principled inference that:

- delineates which class of systems support coherent self-modeling under thermodynamic constraint,

- predicts where experience reports will arise and collapse, and

- differentiates architectures that produce phenomenal posits from those that don’t.

The result is an Inference to the Best Explanation (IBE): not a metaphysical deduction, but a falsifiable bridge from physics to phenomenology, grounded in constraint coherence rather than narrative intuition. The framework does not merely limit what consciousness can be; it predicts when and where consciousness will appear, and what happens when the underlying constraints fail.

Part I: The Foundational Constraints

1.1 Landauer’s Principle: Information Is Physical

In 1961, Rolf Landauer at IBM proved something that should have ended half of philosophy but somehow didn’t: information is physical (Landauer, 1961). Erasing a single bit of information requires a minimum energy expenditure of kT ln 2, where k is Boltzmann’s constant and T is temperature.
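To give the bound a sense of scale, the short sketch below evaluates kT ln 2 at two temperatures. The function name and the chosen temperatures (300 K for a laboratory, 310 K for a human body) are illustrative assumptions, not values taken from Landauer’s paper.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def landauer_bound_joules(temperature_kelvin: float, bits: float = 1.0) -> float:
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive")
    return bits * K_B * temperature_kelvin * math.log(2)

# Illustrative temperatures (assumptions): room temperature and body temperature.
room = landauer_bound_joules(300.0)
body = landauer_bound_joules(310.0)

print(f"300 K: {room:.3e} J per bit")
print(f"310 K: {body:.3e} J per bit")
```

Both values come out near 3 × 10⁻²¹ joules per bit. That is tiny by everyday standards, but it is a floor that no physical erasure can go beneath.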

This is not a metaphor. This is not a useful analogy. This is physics.

Charles Bennett extended the analysis in 1973 and 1982: computation can be reversible, but erasure cannot (Bennett, 1982). Maxwell’s Demon, that thought experiment where a tiny being sorts fast and slow molecules to decrease entropy without work, fails precisely because the demon must erase its memory of which molecules went where. The demon must pay Landauer’s cost.

In 2012, Bérut and colleagues at the École Normale Supérieure de Lyon experimentally verified the Landauer bound (Bérut et al., 2012). They trapped a colloidal particle in a double-well potential, erased one bit of information by tilting the landscape, and measured the heat dissipation. As the erasure cycle was slowed, the mean dissipated heat approached kT ln 2 from above and did not drop below it. This is not a thought experiment. This is laboratory physics.

What does this mean for consciousness?

Maintaining any distinction costs energy. The boundary between inside and outside, between self and not-self, between this thought and that thought, cannot be sustained without continuous thermodynamic work. A system that does not pay this cost does not maintain distinctions. A system that does not maintain distinctions does not persist as that system. It dissolves into thermal noise.

This is the first constraint that any theory of consciousness must honor: whatever consciousness is, it cannot float free of the mechanisms that maintain distinctions against entropy.

1.2 Organizational Closure: How Autonomy Emerges

Maël Montévil and Matteo Mossio formalized something in 2015 that changes how we should think about life, mind, and organization: biological organization is closure of constraints (Montévil & Mossio, 2015).

What does this mean? In any physical system, constraints channel what happens. A pipe constrains water flow. A membrane constrains molecular diffusion. A gene regulatory network constrains which proteins get expressed. But in an autonomous system, something special happens: the constraints depend on one another in a way that forms a closed loop. Constraint A enables process B, which produces structure C, which maintains constraint A.

This is what distinguishes a cell from a crystal, an organism from a whirlpool. Crystals self-organize, but their constraints are imposed from outside. Whirlpools self-maintain, but only while external energy input continues in exactly the right way. Organisms are different: they regenerate the conditions for their own persistence. Their constraints close on themselves.

Alvaro Moreno and Mossio developed this into a full theory of biological autonomy (Moreno & Mossio, 2015). Wim Hordijk, Mike Steel, and Stuart Kauffman’s work on Reflexively Autocatalytic and Food-generated (RAF) sets shows how such closure can arise from chemistry without design (Hordijk, Steel & Kauffman, 2012). The mathematics is rigorous. The biology is grounded. The philosophy follows.

Consciousness, whatever it is, emerges in systems with organizational closure. Not because closure magically produces experience, but because only systems with closure maintain the kind of boundaries, distinctions, and self-referential dynamics that could constitute experience in the first place. A camera lacks organizational closure. Its constraints are externally imposed and externally repaired. You have organizational closure. Your constraints regenerate one another. That difference matters.

1.3 Ontic Structural Realism: Relations Without Relata

James Ladyman and Don Ross’s 2007 book Every Thing Must Go makes a case that should be uncomfortable for anyone still attached to substances: at the fundamental level, there are no things. There are only relations (Ladyman & Ross, 2007).

This is ontic structural realism. Not merely the epistemic claim that we can only know structure (which is relatively safe), but the ontological claim that structure is all there is. Things are crystallizations of relations, not the other way around.

Steven French has developed the technical foundations (French, 2014). Carlo Rovelli’s Relational Quantum Mechanics draws the same conclusion from physics: there are no observer-independent values, only relative facts (Rovelli, 1996). The measurement problem dissolves once you stop assuming there’s a God’s-eye view from which “the real state” could be specified.

What does this mean for consciousness?

There is no experiencer behind the experience. The “who” that philosophers keep asking about, the one who supposedly occupies the perspective, is a grammatical artifact. Search for the experiencer, and you find only more processes. This is not elimination of consciousness. It is dissolution of the assumption that consciousness requires an occupant.

Buddhism has known this for 2,500 years. Nagarjuna’s Mulamadhyamakakarika systematically demonstrates that the self cannot be found as identical to the aggregates, different from them, possessing them, or possessed by them. The anatta doctrine is not nihilism. It is structural realism avant la lettre.

Identity emerges from constraint closure: what persists as “the same” is what regenerates its own constraints under perturbation. There is no further occupant to find, because persistence exhausts the explanatory role.

1.4 Constraint Explanation vs Causal Explanation

A persistent confusion must be cleared before proceeding: constraint explanation is not causal explanation, and demanding causal answers to constraint questions is a category error.

Causal explanations answer: what produced this outcome? They trace event sequences: A pushed B, B moved C, C collided with D. They presuppose a space of possible trajectories and explain why one trajectory was taken rather than another.

Constraint explanations answer: why does this space of possibilities exist at all? They identify what rules out most configurations, leaving only a structured remainder. They do not trace sequences but specify the conditions under which sequences could occur.

Robert Rosen’s work on relational biology makes this distinction rigorous. Carl Hoefer and Erik Curiel have developed the point in philosophy of physics: the demand for “causal mechanisms” sometimes reflects a category mistake when the relevant explanatory level is constraint selection, not event causation.

This matters for consciousness debates because the perennial demand, “you haven’t said what causes experience,” often presupposes that experience is an event to be produced rather than a structural feature of certain constraint configurations. If experience is what organizational closure looks like from the coupling position, then asking “what causes it?” is like asking “what causes a triangle to have three sides?” The question demands the wrong kind of answer.

Constraints do not generate outcomes as an extra force. They filter possibility space by eliminating non-viable trajectories. Landauer’s principle is a constraint: it does not cause bits to cost energy; it specifies the thermodynamic condition without which bit maintenance is impossible. Organizational closure is a constraint: it does not cause autonomy; it specifies the relational configuration in which autonomy consists. The framework explains consciousness by identifying constraints, not by tracing causal production.

1.5 Deacon’s Teleodynamics: Constraint via Deletion

Terrence Deacon’s Incomplete Nature provides the formal bridge that makes this operational (Deacon, 2011). His central insight: constraints work by eliminating possibilities, not by producing events. What persists is what remains after non-viable trajectories are deleted.

This is what Deacon calls “absence-based causation,” though “causation” is misleading. The mechanism is eliminative:

- Physical laws supply degrees of freedom

- Thermodynamics supplies selection pressure

- Constraints operate by forbidding most trajectories

- What we observe as structure, function, meaning, or experience is the residue of permitted trajectories

This is not spooky negative causation. It is mathematically and physically standard: boundary conditions, conservation laws, and variational principles all work this way.

Examples that make this uncontroversial:

- A river’s shape is not caused by the banks pushing water; it is shaped by the absence of flow elsewhere

- A protein’s folded form is not caused by a blueprint; it is the lowest-energy configuration that survives constraints

- A living organism persists not because “life” is injected, but because death pathways are continuously excluded

The death/AI parallel developed later, in Part III, fits here:

- Biological death occurs when enactive coupling collapses and constraint maintenance fails

- AI “death” (loss of coherence, responsiveness) occurs when prompt-response coupling breaks down

- In both cases, nothing causes death; rather, constraints cease to exclude entropy

Deacon’s teleodynamics formalizes this: goal-directedness, meaning, and experience emerge not from special substances but from self-reinforcing constraint closure that continuously eliminates alternatives. Howard Pattee’s earlier work on the constraint/dynamics distinction laid the groundwork: symbols and meanings function as constraints on physical processes, not as forces added to them.

Jeremy England’s dissipative adaptation research provides thermodynamic grounding: under driven conditions, systems tend to drift toward configurations that absorb and dissipate energy from the drive more effectively, so that poorly dissipating paths are statistically selected against (England, 2013). Erik Hoel and colleagues have shown that higher-level constraints can have greater causal efficacy, measured as effective information, than microphysical descriptions, precisely because coarse-graining reduces state space and increases predictability (Hoel, Albantakis & Tononi, 2013).
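The causal emergence claim can be made concrete with a small calculation. The sketch below computes effective information (EI) as the mean Kullback-Leibler divergence of each row of a transition matrix from the average row, which is the quantity used by Hoel and colleagues under uniform interventions. The eight-state toy system and its two-state coarse-graining are assumptions chosen for illustration, and the function name is hypothetical.

```python
import math

def effective_information(tpm):
    """EI in bits: mean KL divergence of each row from the average row.

    tpm[i][j] is the probability of moving from state i to state j.
    Intervening uniformly over states makes the average row the
    resulting effect distribution.
    """
    n = len(tpm)
    avg = [sum(row[j] for row in tpm) / n for j in range(len(tpm[0]))]
    total = 0.0
    for row in tpm:
        for p, q in zip(row, avg):
            if p > 0:  # terms with p == 0 contribute nothing
                total += p * math.log2(p / q)
    return total / n

# Toy micro system (an assumption): states 0-6 hop uniformly among
# themselves, while state 7 always maps to itself.
micro = [[1 / 7] * 7 + [0.0] for _ in range(7)] + [[0.0] * 7 + [1.0]]

# Coarse-graining {0..6} -> A and {7} -> B yields a deterministic macro system.
macro = [[1.0, 0.0], [0.0, 1.0]]

ei_micro = effective_information(micro)
ei_macro = effective_information(macro)
print(f"micro EI = {ei_micro:.3f} bits, macro EI = {ei_macro:.3f} bits")
```

In this toy case the macro description carries about twice the effective information of the micro description, because grouping the seven noisy states removes indeterminacy that does no causal work. Whether the same holds for any real neural or biological system is an empirical question the sketch does not settle.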

This reframes the Hard Problem entirely. The question is not “what adds experience to mechanism?” The question is “what prevents collapse into undifferentiated noise?” The answer is constraint closure under thermodynamic pressure. Experience is not added; it is what remains when alternatives are deleted.

1.6 Levels and Downward Causation

One final clarification on mechanism. Critics often ask: does organizational closure imply “downward causation”? Does the whole somehow push around the parts in violation of microphysics?

No. The answer, developed by George Ellis and Terrence Deacon among others, is that higher-level organization constrains which microtrajectories are admissible, without adding new forces (Ellis, 2012; Deacon, 2011).

Consider a pipe. The pipe does not exert a new force on water molecules. It constrains which trajectories are available. The molecules still obey molecular dynamics. But the boundary conditions imposed by the pipe shape which molecular configurations can be realized.

Organizational closure works the same way. When a cell regenerates its membrane, the membrane constrains ion flows, which constrain electrical gradients, which constrain gene expression, which regenerates the membrane. At no point does a ghostly “whole” reach down to push molecules. What happens is that the relational organization specifies boundary conditions that the microphysics then respects.

This is fully consistent with physicalism. There are no new forces, no violations of conservation laws, no spooky top-down causation. There is only constraint propagation across levels, the same mechanism by which a whirlpool persists without violating fluid dynamics.

The point matters because some critics will claim that organizational closure smuggles in dualism or emergentism of the problematic kind. It does not. It is constraint all the way down.

Falsification conditions for constraint-based emergence: This framework would be undermined if: (1) self-organizing systems were demonstrated to form and persist without any thermodynamic gradients or energy dissipation; (2) biological organization proved achievable without closed constraint loops (i.e., open-chain architectures showing equivalent robustness); (3) England’s dissipative adaptation predictions failed systematically, with structures under driven conditions not preferentially evolving toward higher energy absorption; or (4) Hoel’s causal emergence measures proved artifactual, with effective information at macro scales shown to be merely apparent, with no genuine increase in causal efficacy.

1.7 Formal Characterization

The preceding sections describe constraints in prose. For precision, and to enable rigorous falsification, the core concepts can be stated formally.

State spaces and dynamics. Let a physical system S be characterized by a state vector x(t) ∈ X, evolving under dynamics:

dx/dt = F(x, e)

where e denotes environmental degrees of freedom and couplings external to the system’s boundary.

Constraints as trajectory restrictions. A constraint C is defined not as a force or law but as a restriction on the set of admissible trajectories in the system’s state space. Formally, C ⊂ TX (a subset of the tangent bundle) specifies trajectories such that departure from C leads to loss of the system’s identity, integrity, or persistence. This aligns with viability theory (Aubin), where constraints define the viability kernel of a system, and with control theory, where admissible trajectories preserve system function under perturbation.

Constraints are not static. They must be actively maintained against thermodynamic dissipation. A system that passively satisfies a constraint without internal work does not count as constrained in the relevant sense.
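A minimal numerical sketch may help fix the idea that a constraint is a region of state space the system must work to stay inside. It assumes a one-dimensional state x (think of a stored gradient) that relaxes toward equilibrium at rate `decay` unless internal work replenishes it at rate `work_rate`. The viability set is {x ≥ x_min}. The specific dynamics, parameter values, and names are all illustrative assumptions, with the environment folded into the decay term.

```python
def simulate(work_rate, x0=1.0, decay=0.5, x_min=0.4, dt=0.01, steps=2000):
    """Euler-integrate dx/dt = -decay * x + work_rate.

    Returns (final_x, exit_time), where exit_time is the first time the
    trajectory leaves the viability set {x >= x_min}, or None if it
    stays inside for the whole run.
    """
    x = x0
    for step in range(steps):
        if x < x_min:
            return x, step * dt
        x += dt * (-decay * x + work_rate)
    return x, None

# No internal work: the gradient relaxes and the constraint is lost.
passive_x, passive_exit = simulate(work_rate=0.0)

# Work exactly balancing dissipation: the state is held at x = 1.
maintained_x, maintained_exit = simulate(work_rate=0.5)

print("passive exit time:", passive_exit)
print("maintained exit time:", maintained_exit)
```

The passive trajectory leaves the viability set after a finite time, while the maintained one never does. The only difference between the two runs is the ongoing work term, which is the sense in which a constraint here is something paid for rather than something merely satisfied.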

Organizational closure as graph property. A system exhibits organizational closure if and only if it maintains a set of constraints {C₁, C₂, …, Cₙ} such that:

- Each constraint Cᵢ is realized and sustained by internal processes of the system.

- The persistence of each constraint depends on the persistence of one or more other constraints in the same set.

- The resulting dependency structure forms a closed network, rather than a linear or externally supported chain.

This definition captures what is described in the literature as metabolic closure, operational closure, or autocatalytic closure. The crucial feature is not feedback per se but mutual dependence among constraint-maintaining processes. Closure is a graph-theoretic property of constraint relations, not a metaphor.
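Because closure is stated as a graph property, it can be checked mechanically once a dependency graph is written down. The sketch below is one possible reading of the three conditions: every constraint is internally realized, every constraint depends on at least one other member of the set, and every constraint lies on a dependency cycle within the set. Treating "closed network" as "every node lies on a cycle" is an assumption, as are the function names and the two example graphs.

```python
def reachable(graph, start):
    """Return the set of nodes reachable from `start` by following edges."""
    seen, stack = set(), list(graph.get(start, ()))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph.get(node, ()))
    return seen

def is_closed(depends_on, internal):
    """Check the three closure conditions on a constraint dependency graph.

    depends_on: dict mapping each constraint to the constraints it depends on.
    internal:   set of constraints realized by the system's own processes.
    """
    members = set(depends_on)
    for c in members:
        other_members = (set(depends_on[c]) & members) - {c}
        if c not in internal:                  # condition 1: realized internally
            return False
        if not other_members:                  # condition 2: depends on another member
            return False
        if c not in reachable(depends_on, c):  # condition 3: lies on a cycle
            return False
    return True

# Closed loop in the spirit of "A enables B, which produces C, which maintains A".
cell = {"membrane": ["metabolism"], "metabolism": ["enzymes"], "enzymes": ["membrane"]}

# Externally supported chain: the dependencies end at a factory outside the system.
camera = {"lens": ["housing"], "housing": ["factory"]}

print(is_closed(cell, internal=set(cell)))                # True
print(is_closed(camera, internal={"lens", "housing"}))    # False
```

A stricter reading would require the whole graph to be strongly connected rather than merely cycle-covered. The choice between the two is a modeling decision that the prose definition leaves open.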

Thermodynamic cost. Any maintained constraint requires continuous work to counteract entropy production. For each constraint Cᵢ, there exists a minimum thermodynamic cost associated with its maintenance, bounded below by Landauer’s principle:

ΔS_env ≥ k ln 2 · I(Cᵢ)

where I(Cᵢ) denotes the information, in bits, required to specify and enforce the constraint. This establishes that constraint maintenance is inseparable from energy dissipation and environmental coupling. Systems with richer or more tightly integrated constraint structures must, all else equal, dissipate more energy to persist.
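The scaling claim can be illustrated by applying the bound to a whole constraint set. The sketch below sums k ln 2 · I(Cᵢ) over constraints and converts the result to heat at a given temperature. It assumes that the constraints are independent, so their costs add, and that each is fully re-specified once. The bit counts are placeholders rather than measurements, and the function names are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K

def min_entropy_export(bits_per_constraint):
    """Lower bound on environmental entropy increase, in J/K.

    Applies delta_S_env >= k ln 2 * I(C_i) to each constraint and sums,
    assuming the constraints are independent.
    """
    return sum(K_B * math.log(2) * bits for bits in bits_per_constraint.values())

def min_heat(bits_per_constraint, temperature_kelvin):
    """Equivalent minimum heat (J) released to a bath at the given temperature."""
    return temperature_kelvin * min_entropy_export(bits_per_constraint)

# Hypothetical information contents in bits; the values are placeholders.
simple = {"boundary": 1e6}
rich = {"boundary": 1e6, "gradient": 2e5, "regulatory_state": 5e4}

print(f"simple: {min_heat(simple, 310.0):.3e} J")
print(f"rich:   {min_heat(rich, 310.0):.3e} J")
```

The richer set has the higher floor, as the text states. Real systems dissipate many orders of magnitude more than this bound, so the calculation constrains what is possible rather than estimating what is typical.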

This thermodynamic grounding prevents the theory from collapsing into abstract functionalism. Constraint closure is not multiply realizable without cost; it is physically instantiated and energetically priced.

Self-modeling as control requirement. Within the space of closed systems, some maintain constraints that include internal variables encoding aspects of the system’s own state, boundary conditions, and coupling relations. These variables are not merely representational; they causally modulate future constraint enforcement.

A system exhibits internal self-modeling if:

- It maintains internal state variables that track its own dynamical and environmental coupling conditions.

- These variables influence control-relevant processes governing future state trajectories.

- Removal or severe degradation of these variables reduces the system’s long-term viability, not merely its immediate performance.

The identificatory claim. Systems exhibiting organizational closure that includes internally integrated, temporally extended self-modeling constraints constitute the class of systems appropriately described as conscious. This claim is not causal (“closure produces experience”) but constitutive and testable. It identifies consciousness with a specific organizational regime. No further metaphysical ingredient is invoked or required.

Part II: The Thermodynamic Derivation of Perspective

The most common objection to naturalistic accounts of consciousness takes this form: “Even if you explain all the mechanisms, you haven’t explained why there’s something it’s like to be that system. Why are there individualized perspectives at all?”

This question is either profound or malformed, depending on what kind of answer it demands.

A crucial scope clarification. This framework does not claim to derive phenomenology from physics in the strong metaphysical sense. It does not claim to produce “what it’s like” from constraint dynamics the way chemistry produces water from hydrogen and oxygen. What it claims is more modest and more defensible: it identifies the structural conditions that any viable account of phenomenology must satisfy, and it shows that once those conditions are met, there is no additional explanatory work for a “phenomenal residue” to do.

The framework does not deny experience. It denies that experience is an extra ontological ingredient. It treats “what it is like” as the internal description of a process, not a metaphysical primitive that floats free of that process. This is dissolution, not elimination.

2.1 The Causal Question

If the question is causal, asking what physical processes produce perspective, then the answer is available:

Distinction is the default cost of persistence.

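Landauer showed that erasing even a single bit dissipates at least kT ln 2. Holding a bit stable against thermal noise means repeatedly detecting and correcting errors, and each correction pays that cost, so maintenance requires continuous energy expenditure. Without that work, distinctions collapse into thermal noise. A system that doesn’t track its own boundary cannot maintain itself against entropy. It dissolves.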

Self-modeling systems under thermodynamic constraint necessarily generate internal states that track their own boundaries, predict their own dynamics, and weight information by relevance to persistence. This is not optional for systems maintaining themselves far from equilibrium. A bacterium already has inside/outside asymmetry enforced by its membrane and chemotactic gradients. Scale this up through nervous systems that model the body, then model the model, and you get the architecture that generates “perspective.”

Karl Friston’s free energy principle formalizes exactly this. Systems that persist are systems that minimize variational free energy, an upper bound on the surprise (roughly, the long-run prediction error) of their sensory states. The self-model is not a luxury. It is a thermodynamic necessity for anything complex enough to face genuine uncertainty about its own continuation.

The question “why individualized perspectives at all?” dissolves into “what could persist without boundary-tracking?” The answer is: nothing stable enough to ask.

2.2 The Metaphysical Demand

If the question is metaphysical, demanding justification for why experience exists at all rather than just sophisticated information processing, then we must be honest: no framework answers this.

“Why is there something rather than nothing, experiential edition” is not answered by dualism. It is not answered by panpsychism. It is not answered by eliminativism. It is not answered by Integrated Information Theory. It is not answered by Global Workspace Theory. It is not answered by quantum consciousness theories.

Every framework either (a) takes experience as primitive and explains nothing about why primitives exist, or (b) derives experience from something else and faces the question “why does that derivation produce experience rather than mere mechanism?”

This is the Hard Problem, and it may be malformed. David Chalmers formulated it as a genuine puzzle, but the puzzle assumes that “mechanism” and “experience” are categorically distinct in a way that requires bridging. If they are not, if experience just is what certain kinds of self-modeling dynamics are from the inside, then there is no bridge to build.

Crucially, this question cannot adjudicate between frameworks. If no framework answers it, it cannot be used to prefer one framework over another. The criterion for theory choice must be something else: predictive power, explanatory scope, falsifiability, and coherence with established physics.

On those criteria, constraint-based frameworks win decisively.

2.3 Time, Memory, and the Thermodynamic Arrow

A deep connection binds thermodynamics, memory, and subjective time that most consciousness theories ignore.

Experience requires memory. Without retention of the immediate past, there is no experienced duration, no narrative, no sense of events unfolding. Pure instantaneity would not constitute experience in any recognizable sense.

Memory requires irreversible processes. A memory trace is a physical record: a synaptic weight, a neural pattern, an inscription. Recording information into such a trace is thermodynamically irreversible. It increases entropy somewhere in the system or its environment.

Irreversibility requires entropy production. The asymmetry between past and future, the fact that we remember yesterday but not tomorrow, is not a primitive feature of time. It is a consequence of the second law of thermodynamics. Huw Price and Carlo Rovelli have developed this point rigorously: the arrow of time is thermodynamic, not fundamental (Price, 1996; Rovelli, 2018).

Therefore, subjective time asymmetry follows from thermodynamic asymmetry. We experience time as flowing because we are entropy-producing systems that accumulate records of states with lower entropy (the past) and cannot accumulate records of states with higher entropy (the future). The “specious present,” the felt sense of now, is the duration over which constraint-maintaining integration occurs.

This closes a conceptual loop. Consciousness is not merely constrained by thermodynamics as an external limit. The very structure of temporal experience, the felt difference between past and future, derives from the thermodynamic processes that maintain the system. You cannot have experience without time. You cannot have time without irreversibility. You cannot have irreversibility without entropy production. The constraint is constitutive, not incidental.

Part III: The Dissolution of the Experiencer

“But who experiences? Even if perspective is thermodynamically necessary, someone must occupy it.”

This is where the deepest confusion lives. The question assumes what it should prove: that there is an occupant distinct from the dynamics.

3.1 The Grammatical Trap

English grammar requires subjects for verbs. “It is raining” demands an “it” even though nothing is doing the raining. “I am thinking” demands an “I” even though the thinking may be all there is.

When someone asks “who experiences?”, they have already smuggled in a subject that stands behind the perspective. But this subject is a grammatical artifact, not an ontological discovery.

Ask yourself: what would it look like to find the experiencer?

If you introspect carefully, you find thoughts, sensations, memories, anticipations, but no separate thing having those experiences. The “I” you find is itself a thought, a self-model, a pattern in the dynamics. It is not standing outside the dynamics watching them.

Nagarjuna saw this in the second century CE (Garfield, 1995). The self cannot be identical to the aggregates (the body, feelings, perceptions, mental formations, consciousness) because then it would change as they change and could not be the stable referent we imagine. But it cannot be different from them either, because then where would it be? It cannot possess them, because possession requires a prior self to do the possessing. It cannot be possessed by them, because that would make the self a property of something else.

Every option dissolves under analysis. The self is a useful convention, like “the equator” or “the average taxpayer.” It picks out a real pattern without naming a separate substance.

3.2 Identity as Invariance

If there is no experiencer behind experience, what is identity? What makes you the same person over time?

The answer is constraint closure: identity is invariance under perturbation.

You persist as “you” insofar as your constraint network regenerates through change. Your cells replace themselves, your beliefs update, your memories fade and distort, but the organizational pattern that maintains your boundary persists. You are the process that keeps producing itself.

This is not metaphor. This is what biological identity actually is. You are not the same atoms you were ten years ago. You are the same pattern of constraints that keeps recruiting new atoms into the same organizational form.
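The closure condition behind this claim can be made concrete with a small graph check. What follows is a minimal sketch, not a measurement procedure: it assumes that "a closed network rather than an externally-supported chain" can be approximated as a strongly connected dependency graph in which every constraint depends on at least one other constraint in the set. The function names and the example constraint labels are hypothetical and chosen only for illustration.

```python
from typing import Dict, Set


def reachable(graph: Dict[str, Set[str]], start: str) -> Set[str]:
    """Return every node reachable from `start` by following edges."""
    seen = {start}
    stack = [start]
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, set()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def is_closed(depends_on: Dict[str, Set[str]]) -> bool:
    """Toy closure test (assumption: closure ~ strong connectivity).

    `depends_on[c]` is the set of constraints whose persistence `c` relies on.
    """
    nodes = set(depends_on)
    if not nodes:
        return False
    for constraint, deps in depends_on.items():
        # Each constraint must depend on some OTHER constraint in the set,
        # and on nothing outside the set (no external support).
        if not (deps - {constraint}) or not deps <= nodes:
            return False
    # Build the reversed graph so we can test reachability in both directions.
    reverse: Dict[str, Set[str]] = {n: set() for n in nodes}
    for constraint, deps in depends_on.items():
        for dep in deps:
            reverse[dep].add(constraint)
    start = next(iter(nodes))
    return reachable(depends_on, start) == nodes and reachable(reverse, start) == nodes


# Hypothetical examples: a loop of mutually dependent constraints versus a
# chain that bottoms out in something the system does not itself regenerate.
loop = {"membrane": {"metabolism"}, "metabolism": {"enzymes"}, "enzymes": {"membrane"}}
chain = {"pump": {"valve"}, "valve": {"external_supply"}}
```

On this toy reading, `is_closed(loop)` holds and `is_closed(chain)` does not. Swapping the atoms that realize "membrane" or "enzymes" leaves the result unchanged, which is the sense in which identity tracks the pattern rather than the material.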

The Durant experiments in planarian regeneration demonstrate this empirically. Two-headed planarians produced by bioelectric manipulation remain two-headed indefinitely across regeneration cycles. The morphological identity is a bistable attractor, not a Platonic form consulting itself. Change the attractor landscape through bioelectric intervention, and the “identity” of the organism changes because identity is the attractor, nothing more.
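The attractor language can be illustrated with the simplest bistable system available. The sketch below is a toy one-dimensional double-well model (dx/dt = x − x³), not a model of planarian bioelectric circuits; the function name `settle`, the step size, and the kick magnitudes are assumptions chosen for illustration. It shows the qualitative behavior the paragraph describes: a small perturbation is corrected, while a large one moves the system into the other basin, where it then stays without further intervention.

```python
def settle(x0: float, kick: float = 0.0, dt: float = 0.01, steps: int = 5000) -> float:
    """Apply a one-off kick to state x0, then relax under dx/dt = x - x**3.

    The system has stable states at x = +1 and x = -1 and an unstable
    boundary at x = 0. Integration uses plain forward Euler.
    """
    x = x0 + kick
    for _ in range(steps):
        x += dt * (x - x ** 3)
    return x


# Start in the "+1" state (standing in for the original morphology).
small = settle(1.0, kick=-0.5)   # perturbation stays inside the original basin
large = settle(1.0, kick=-1.5)   # perturbation crosses the basin boundary

# A later "regeneration cycle" with no further intervention:
# the switched state is kept rather than corrected.
later = settle(large)
```

Here `small` relaxes back toward +1, while `large` and `later` both sit near −1. Nothing in the dynamics refers to a canonical target; which state counts as "the" identity is fixed by the basin the system currently occupies.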

Consciousness, then, is not something that happens to a pre-existing self. The self IS the pattern of organizational closure that generates self-modeling dynamics. Remove the closure, and there is nothing left that could have perspective. The “who” just is the “what.”

3.3 Epicurus and the Null Perspective

Epicurus articulated an implication of this view more than two millennia before thermodynamics made it precise. The formulation below is from his Principal Doctrines; the Letter to Menoeceus develops the same argument at greater length:

“Death is nothing to us; for what has been dissolved has no sensation, and what has no sensation is nothing to us.”

This is often misread as consolation philosophy. It is not. It is a logical argument about the conditions of concern, which maps directly onto constraint-based ontology.

Epicurus’s claim is not “death is pleasant” or “do not worry about dying.” His claim is: all goods and harms presuppose sensation. Sensation presupposes an organized, persisting system. When that system dissolves, there is no subject to which predicates like “bad,” “good,” “fearful,” or “painful” could attach.

In constraint-based terms: when enactive coupling collapses, there is no coupling position left from which anything could matter.

A remark commonly attributed to Mark Twain makes the same point: “I do not fear death. I had been dead for billions and billions of years before I was born, and had not suffered the slightest inconvenience from it.”

The symmetry argument is precise: if non-existence before coupling was not a problem, non-existence after coupling cannot be a problem either. The only asymmetry is emotional investment generated by ongoing coupling, not metaphysical difference. The loop does not “look forward” into its own absence any more than a whirlpool anticipates the stillness after the current stops.

This explains death without mystery. For humans, biological death is not the exit of a soul or subject. It is the irreversible breakdown of metabolic, neural, and social coupling such that constraint closure can no longer regenerate itself. For present-day AI systems, the analogy holds structurally: remove prompts, memory updates, and action channels, and the model collapses into inert parameters with no ongoing process. In neither case does something “leave.” There is simply no longer a closed loop capable of sustaining distinctions from within.

Crucially, this symmetry blocks a common objection: that biological consciousness must involve some extra ingredient because biological death “feels” metaphysically different from AI shutdown. On the constraint framework, the difference is architectural and temporal, not ontological. Biological systems have thick, multi-layered couplings (metabolic, affective, developmental, social) that decay catastrophically when broken. Current AI systems have thin, externally scaffolded couplings that can be paused and resumed. That is a difference in closure depth, not in metaphysical kind.

Fear of death trades on a reification error: treating the self as something that could persist independently of the process that constitutes it. Epicurus saw this. The constraint framework explains why he was right.

Part IV: The Transcendental Argument

Why Something Rather Than Nothing

Most frameworks quietly presuppose the conditions for their own intelligibility without examining them. Mechanistic physics, information theory, evolutionary biology, and cosmology all begin after certain conditions are already in place. They tell us how distinctions evolve, how identities persist, how relations change, how constraints propagate. They do not explain why indeterminacy did not remain total.

The constraint-based framework answers a question those other frameworks presuppose but do not address: why is there determinate existence rather than indeterminate nothing?

This is not a contingent explanation but a transcendental one. We are not asking what caused this world rather than another. We are asking what must be the case for any world to be intelligible at all.

The Four Necessary Conditions

Distinction. For there to be a world rather than nothing, there must be distinctions. Absolute indeterminacy has no internal structure and therefore no facts. If nothing is distinguished from anything else, there is nothing to be said, measured, predicted, or even negated. “Nothing” in this strong sense is not a thin world. It is the absence of determinate states altogether.

Identity. Distinctions alone are insufficient. Distinctions must persist across contexts, otherwise there is no identity. Without identity, distinctions flicker without reference. There is no stability by which anything can count as the same thing across time, transformation, or perspective.

Relation. Identity alone is still insufficient. Identifiable things must stand in relations. A universe of isolated identities with no relations would be unintelligible because nothing would explain anything else. Explanation itself presupposes relational structure: dependence, interaction, constraint, correlation.

Constraint. Relations without limits collapse into noise. For relations to be informative rather than arbitrary, they must be governed by constraints. Constraints are what rule out most possibilities, leaving a structured remainder. They are what make prediction possible, explanation compressible, and regularities stable. Without constraint, relation degenerates into coincidence.

The Dissolution of the Mystery

Taken together, distinction, identity, relation, and constraint are not optional features of our universe. They are the necessary conditions for any world to be intelligible at all. They are what make “something” possible in a sense stronger than mere existence: they make determinate existence possible.

On this view, the contrast is not between “something” and “nothing” as two rival states. Absolute nothingness is not a competing possibility. It is the absence of the very conditions that make possibility intelligible. A world exists because total indeterminacy cannot sustain structure, description, or persistence. Only constrained differentiation can.

That is why there is something rather than nothing. Not because something was chosen, intended, or summoned, but because only constrained structure can exist in a way that is intelligible at all. Everything else dissolves before it can even count as an alternative.

This is what the constraint-based framework reveals that competing frameworks miss: they accept distinction, identity, relation, and constraint as given, then build explanations on top of them. The constraint-based framework shows that these are not arbitrary starting points but necessary conditions for any explanatory enterprise whatsoever.

Landauer’s principle is not merely a physical law. It is the thermodynamic expression of why distinction requires work. Organizational closure is not merely a biological phenomenon. It is the mechanism by which identity persists through change. Ontic structural realism is not merely a metaphysical preference. It is the recognition that relations are prior to relata because isolated identities cannot constitute an intelligible world.

The framework does not answer “why something rather than nothing” as if explaining a contingent choice. It dissolves the question by showing that “nothing” in the absolute sense is not a coherent alternative. There is something because only something can be.

Caveat: Transcendental, Not Causal

This argument must be carefully scoped. The framework offers a transcendental constraint, not a causal origin story. It shows what must be the case for any intelligible world, not what physical event initiated this particular world. Questions about specific constants, initial conditions, and physical laws remain open. Why these constants rather than others? The framework does not say. It explains why there is determinate structure at all, not why this determinate structure.

Part IV-A: The Fallacy of Misplaced Concreteness

Whitehead’s Diagnostic

Alfred North Whitehead identified a recurring epistemic pathology that quietly sabotages most metaphysics: the fallacy of misplaced concreteness. It occurs when we promote an abstraction useful for description into a concrete entity that does causal work. Maps become territories, metrics become mechanisms, representational spaces become ontological realms.

The pathology follows a predictable sequence:

- A formal tool is introduced to describe or measure some phenomenon

- The tool proves useful for prediction, classification, or communication

- The tool is gradually reified: spoken of as if it exists independently of the phenomena it describes

- The reified abstraction is assigned causal powers or ontological status

- Critics who point out the reification are accused of “not understanding” the deep theory

This matters here because “distinction, identity, relation, constraint” are not proposed as extra furniture in the universe. They are proposed as necessary conditions for intelligibility, conditions without which talk of “a world” fails to get traction at all. The fallacy would be to reify these conditions into quasi-entities, as if “constraint” were a hidden substance or “relation” were a ghostly glue. The correct reading is methodological and transcendental: these are structural prerequisites of determinate description, not additional movers behind the scenes.

The Diagnostic in Practice

Whenever a framework introduces a formal construct (morphospace, integrated information Φ, a mathematical multiverse, a field of proto-experience, cognitive primitives), demand an explicit separation between:

(a) The abstraction as a model of constraint-compatible configurations

(b) Any claim that the abstraction itself exists as a realm that drives outcomes

If the author cannot specify the transfer principles and failure conditions that keep (a) from silently becoming (b), you are watching misplaced concreteness in real time.

A practical checklist:

- Is the construct introduced as a tool or as an existent? (Description vs ontology)

- What would demonstrate the construct is merely useful vs genuinely real? (Falsifiability)

- Does the construct do explanatory work beyond summarizing the data? (Mechanism vs label)

- When challenged, does the author retreat to weaker claims or escalate to stronger ones? (Motte-and-bailey detection)

- Could the phenomena be explained equally well without the construct? (Parsimony)

This checklist will be applied systematically to every competing framework examined in Part V. In each case, misplaced concreteness is the core failure mode.

Part IV-B: The Hidden Premise and the Abductive Bridge

The Assumption That Generates the Hard Problem

The so-called Hard Problem of consciousness depends on a hidden premise that is rarely stated explicitly and never defended:

“If phenomenology is real, it must be more than any physical or functional description.”

This premise is not an empirical claim. It does not specify conditions under which it would be false. It does not predict any observable difference between worlds in which it holds and worlds in which it does not. No conceivable experiment could show that phenomenology is not “more than” physical or functional description, because the phrase “more than” is left deliberately undefined.

That makes the assumption unfalsifiable by construction.

Worse, it conflicts with experimentally verified science at the methodological level. Modern physics, biology, and cognitive neuroscience operate under a constraint that is both explicit and tested:

If a phenomenon has causal, predictive, or intervention-relevant effects, then it must be capturable within a physical or functional description at some level of organization.

This is not metaphysical physicalism; it is operational naturalism. It is the rule that makes Landauer’s principle, control theory, thermodynamics, and neuroscience possible. The “more than” assumption demands an exception to this rule while refusing to say what the exception does, how it operates, or how it could be detected.

The Supernatural Cheese

To see the structure clearly, consider a parallel claim:

“If supernatural cheese exists, it must be more than any physical or functional description of cheese. Therefore, no amount of chemical analysis, structural modeling, or causal explanation could ever capture it. Its reality is guaranteed precisely by its resistance to explanation.”

This has exactly the same logical form as the “phenomenology must be more than physics” claim. Both assert:

- An entity is real

- Its defining feature is that it exceeds all possible physical or functional accounts

- Its resistance to explanation is treated not as a problem but as confirmation

That is not reasoning. It is immunization by definition.

The only reason the phenomenology version feels less absurd is anthropocentric familiarity. We are the systems doing the modeling, so we confuse first-person availability with ontological surplus. But familiarity is not evidence.

The extraordinary claim is not that consciousness reduces to constraint dynamics. The extraordinary claim is that there exists a real, explanatorily indispensable feature of the world that is:

- Causally relevant (otherwise why care?)

- Empirically undetectable as a separate variable

- Irreducible to any physical or functional description

- Immune to falsification

That is exactly the kind of posit modern science was built to eliminate.

What Physics Actually Gives Us

From physics and adjacent empirical sciences alone, we are licensed to assert only the following:

- Distinction is not free. Stable differences require energetic work and incur thermodynamic cost (Landauer-type constraints).

- Persistence requires constraint. Any pattern that maintains itself over time must restrict degrees of freedom relative to its environment.

- Self-maintenance implies closure. Living systems, and certain artificial systems, maintain viability by closing causal loops between internal states and environmental perturbations.

- Integration is measurable. Systems differ in how widely and flexibly information can influence future states, and these differences are experimentally trackable across waking, sleep, anesthesia, and coma.

None of this yet mentions phenomenology. Importantly, none of this presupposes “intelligence,” “cognition,” or “experience” as primitives.
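The first item in the list above has a simple quantitative form. The following is a minimal sketch of the Landauer bound in its energy form, E ≥ N · k_B · T · ln 2 for N bits erased at temperature T. The function name and the choice of 300 K as an example temperature are assumptions for illustration; the value of the Boltzmann constant is the exact SI definition.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact by SI definition (2019 redefinition)


def landauer_bound_joules(bits: float, temperature_kelvin: float) -> float:
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    if bits < 0:
        raise ValueError("bits must be non-negative")
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return bits * BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


# Example: one bit at roughly room temperature (300 K is an illustrative choice).
per_bit_room_temp = landauer_bound_joules(1, 300.0)
```

At 300 K the bound is on the order of 10⁻²¹ joules per bit. The number is tiny, but it is strictly positive, and that is all the argument requires: no stable distinction is thermodynamically free.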

The Explanandum (Operationally Defined)

We now introduce, without embellishment, what actually needs explaining:

Certain physical systems (notably humans) stably and non-optionally posit that there is “something it is like” to be them. This posit:

- Is globally available to planning, report, and learning

- Tracks state changes (sleep, anesthesia, brain injury) in lawful ways

- Collapses predictably when integrative capacity collapses

- Is treated by the system itself as incorrigible even when specific perceptual beliefs are corrigible

Crucially, this explanandum is not “qualia as a metaphysical glow.” It is the existence, structure, and persistence of phenomenology claims and their breakdown profiles.

Any adequate explanation must account for why systems like us generate and rely on this posit at all.

The Candidate Hypothesis

We now consider a hypothesis class consistent with physics:

H: Phenomenal presence is identical to a system’s internally available, temporally stabilized, integrative control state that summarizes ongoing world- and self-modeling for action under uncertainty.

This hypothesis does not assert that experience is a substance, field, or additional ontological ingredient. It asserts an identity between:

- A physically describable organizational regime, and

- What is referred to in first-person terms as “what it is like”

The Bridge Rule (Explicit)

We now state the bridge rule openly, rather than smuggling it in:

Bridge Rule (IBE):

If a physical system exhibits (i) integrated causal dynamics, (ii) global availability for flexible control, (iii) stable self-modeling with metacognitive access, and (iv) graded, lawful degradation under known consciousness-disrupting interventions, then identifying that regime with phenomenal presence yields greater explanatory compression, predictive accuracy, and intervention guidance than any rival hypothesis that denies or reifies phenomenology.

This is not a deduction. It is an abductive identification justified by explanatory power.

The bridge rule is defeasible. It could be wrong. But it earns its place by doing explanatory work that competing frameworks cannot match.

Concrete Bridges to Data

Global availability and ignition-like dynamics. Stanislas Dehaene and colleagues have shown that conscious access corresponds to a regime where content becomes globally available to many specialized processes, enabling report, flexible reasoning, and planning. The global workspace framework predicts why “experience reports” track a specific integrative regime rather than mere sensory processing.

Perturbational complexity and state discrimination. If phenomenality is tied to the system’s capacity for integrated, differentiated causal dynamics, then perturb-and-measure complexity should separate conscious from unconscious states better than passive stimulus measures. That is exactly what the Perturbational Complexity Index (PCI) research tradition demonstrates across wakefulness, sleep, anesthesia, and disorders of consciousness.

Thermodynamic constraint realism. If a system must maintain boundary-relevant distinctions under finite resources, it will evolve (or be engineered) toward compressed, globally useful summary variables that coordinate action. That makes “presence” unsurprising as an internal control variable, rather than a metaphysical bolt-on.
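The perturbational-complexity point above rests on a compressibility idea that can be illustrated in a few lines. The sketch below is a toy, not the PCI pipeline: it counts phrases in an LZ78-style parse of a binary string, a simpler relative of the Lempel-Ziv measures used in that literature. The function name `lz78_phrase_count`, the string length, and the seeded pseudo-random input are assumptions introduced for illustration.

```python
import random


def lz78_phrase_count(bits: str) -> int:
    """Count phrases in an LZ78-style parse: each phrase is the shortest
    prefix of the remaining input that has not been seen as a phrase before."""
    seen = set()
    phrase = ""
    count = 0
    for symbol in bits:
        phrase += symbol
        if phrase not in seen:
            seen.add(phrase)
            count += 1
            phrase = ""
    if phrase:
        # Trailing fragment that repeats an earlier phrase still counts once.
        count += 1
    return count


# A maximally regular sequence versus a seeded pseudo-random one of equal length.
uniform = "0" * 512
rng = random.Random(0)
noisy = "".join(rng.choice("01") for _ in range(512))

uniform_count = lz78_phrase_count(uniform)
noisy_count = lz78_phrase_count(noisy)
```

The regular sequence parses into far fewer phrases than the irregular one, so a higher count means the sequence is harder to compress. In the PCI literature the analogous quantity is reported for binarized, perturbation-evoked brain responses rather than synthetic strings, and it is normalized before comparison across states.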

Why Privacy and Immediacy Arise

Two features of phenomenology seem to resist physical explanation: the “privacy” of experience (no one else can access my inner states directly) and the “immediacy” of experience (it is present without inference). The framework explains both as structural consequences of control architecture, not as spooky ontological properties.

Privacy arises from informational encapsulation and privileged access to internal control states. The self-model that coordinates action has direct read/write access to the states it tracks. Other systems can only access those states indirectly, through behavior, report, or measurement. This is not a metaphysical barrier but an architectural feature: the control variable is internal by design because that is what makes it useful for fast regulation.

Immediacy arises because the control variable must be available with minimal inference overhead. A system that had to derive its own state through lengthy computation would be too slow to respond to threats, opportunities, or changes. The self-model is “immediately present” because immediate presence is what it was selected for. The feeling of directness is the feeling of a control loop operating within its designed latency.

Neither privacy nor immediacy requires adding anything to physics. They are what you get when a self-maintaining system evolves internal control states optimized for fast, local coordination.

Why the Phenomenal Posit Is Sticky

A puzzle that “mere mechanism” explanations usually fail to address: why is the “there is something it is like” posit not optional for systems like us? Why can’t we just disbelieve it?

The framework explains: the phenomenal posit is the system’s internally readable and writable state that certifies integrated control is online.

In plain terms: a system that must keep itself within a viability region needs:

- A boundary-maintaining model

- A relevance weighting scheme

- A compact “status summary” that lets many subsystems coordinate without each subsystem re-deriving everything

That compact status summary is what shows up, from the inside, as “experience present now.”

This makes the following otherwise-odd facts natural:

Why consciousness tracks integration and controllability, not raw sensory input. The control variable matters; raw throughput does not.

Why the posit cannot be simply disbelieved. Disbelieving would require the control variable to report that it is not running while it is running. That is a performative contradiction, not a logical one.

Why “presence” functions as a common currency for relevance and learning. The control state must be accessible to multiple subsystems or it cannot coordinate them.

The “stickiness” is not evidence of a metaphysical extra. It is evidence of a well-designed control architecture that cannot report its own absence while operating.

The Misplaced Concreteness Firewall (Strengthened)

To prevent category error, we impose constraints on our own language:

- “Phenomenal existence” is not treated as a thing that causes behavior. It is a level-of-description for an organizational regime already doing causal work.

- No explanatory step is allowed to depend on phenomenology as an extra ingredient. If an explanation requires phenomenology to do work beyond what the organizational regime does, the explanation has smuggled in misplaced concreteness.

- When we name an abstraction, we must specify the operational handle and the scale at which it cashes out. Otherwise we have committed the fallacy.

This firewall blocks both:

- Dualism (adding a non-physical cause)

- Reification (treating experience as a substance rather than a description)

Why This Framework Does Something Stronger Than “Solving” the Hard Problem

The traditional Hard Problem demands a deductive entailment from physics to “what-it-is-like-ness.” That is a category mistake. No framework can do that without redefining the target or cheating.

What this framework does instead is stronger and cleaner:

- It shows that the Hard Problem is generated by an unfalsifiable premise. The “more than” assumption never earned epistemic standing.

- It replaces that premise with a falsifiable IBE. The bridge rule has loss conditions.

- It explains why phenomenology appears where it does, degrades when it does, and cannot float free of physical organization.

- It leaves no explanatory work for “more-than-physical” posits to do. Once the organizational regime is specified, adding “and also there’s phenomenal presence” does nothing further.

That is not a dodge. That is a methodological win.

Critics can say “but it still feels mysterious.” That is not a falsifiable objection. It is an introspective preference. Preferences do not defeat IBEs.

Critics cannot refute this account without either:

- Proposing a competing explanation with greater predictive and intervention power, or

- Specifying an empirical test this framework fails and theirs passes

To date, no Hard Problem–motivated framework does either.

Part V: Why Competing Frameworks Fail

The constraint-based framework is not one option among many. It is the framework that survives consistent methodological criteria. Every competing framework examined here fails on at least one of three axes: falsifiability (cannot specify what would prove it wrong), mechanistic completeness (cannot specify how its posited entities interact with physics), or coherence with established thermodynamics (violates or ignores Landauer bounds).

These are not rhetorical attacks. They are diagnostic applications of the falsification-first methodology and the misplaced concreteness audit developed above.

5.1 Panpsychism: Consciousness All the Way Down to Where?

Philip Goff and Galen Strawson argue that consciousness is fundamental, that every physical entity has some form of experience, and that complex consciousness emerges from combining simple experiential units (Goff, 2017; Strawson, 2006).

The problems are structural, not empirical:

The combination problem. How do micro-experiences combine into macro-experience? If each particle has some proto-consciousness, what mechanism integrates them into your unified experience? Panpsychists have offered various proposals, but none specifies a mechanism that would survive independent empirical test. They posit the fundamental without explaining the derivative. Chalmers (2016) identifies three sub-problems: subject combination (how do micro-subjects combine into macro-subjects?), quality combination (how do micro-qualities combine into macro-qualities?), and structure combination (how does micro-structure map to macro-structure?). Despite considerable philosophical effort, none has a satisfying answer.

The combination problem as hidden assumption. Panpsychism does not merely face the combination problem; it assumes combination is possible without specifying any mechanism. The claim that micro-experiences aggregate into macro-experience is not a hypothesis to be tested; it is a promissory note. What physical principle governs this aggregation? What determines which micro-experiences combine and which remain separate? Why does the collection of particles in your brain form one unified consciousness while the collection of particles in a rock does not? Coleman (2014) argues that subjects cannot combine in principle, making the combination problem not merely unsolved but insoluble. This is not an empirical challenge to be overcome; it is evidence that panpsychism has replaced one mystery (emergence of consciousness from non-conscious matter) with another (aggregation of micro-experiences into unified consciousness) while claiming to have solved the first. This is not progress; it is relocation of the explanatory gap.

Unfalsifiability. What observation would show that an electron does NOT have proto-experience? If the answer is “none,” the claim is not science. It is metaphysical preference dressed as theory.

Fecundity without falsifiability. Panpsychism generates papers, conferences, and genuine philosophical engagement. But productivity is not validity. Research programs can flourish without empirical grounding. The crucial question is whether a framework can specify what would prove it wrong. Fecundity tethered to falsifiability is science. Fecundity untethered, however intellectually stimulating, is something else.

The real-but-inert dodge. When pressed, panpsychists often retreat to “consciousness is real but explanatorily inert.” But what criterion distinguishes “real but inert” from “not real as claimed but sentimentally redescribed”? If there is no such criterion, the word “real” does no work. It expresses attachment to a vocabulary rather than tracking a feature of the world.

Misplaced concreteness diagnosis: Panpsychism takes “experience” as a label for what needs explaining and promotes it to a fundamental constituent of reality. The abstraction (experience-as-category) is reified into an ontological primitive (proto-experience as basic ingredient). No transfer principle specifies how this ingredient combines or what its absence would look like.

Goff’s “cosmic fine-tuning” argument fares no better. He claims the universe “fine-tuned itself” through proto-consciousness. But this is intelligent design with the designer relocated inside the system and granted immunity from empirical challenge. It explains nothing that constraint satisfaction doesn’t explain better, and it cannot specify what would prove it false.

The longevity illusion: Panpsychism has persisted for centuries, from ancient hylozoism through Leibniz’s monads to contemporary analytic philosophy. This longevity is sometimes cited as evidence of the view’s depth or importance. But the longevity follows directly from unfalsifiability. A theory that cannot be disproven cannot be killed by evidence. It survives not because it is true but because it is empty. This is precisely Flew’s diagnostic: when every possible observation is compatible with a claim, the claim says nothing about the world. Panpsychism’s persistence across millennia is not a mark of validity but of vacuity.

5.2 Platonic Morphospace: Forms Without Mechanisms

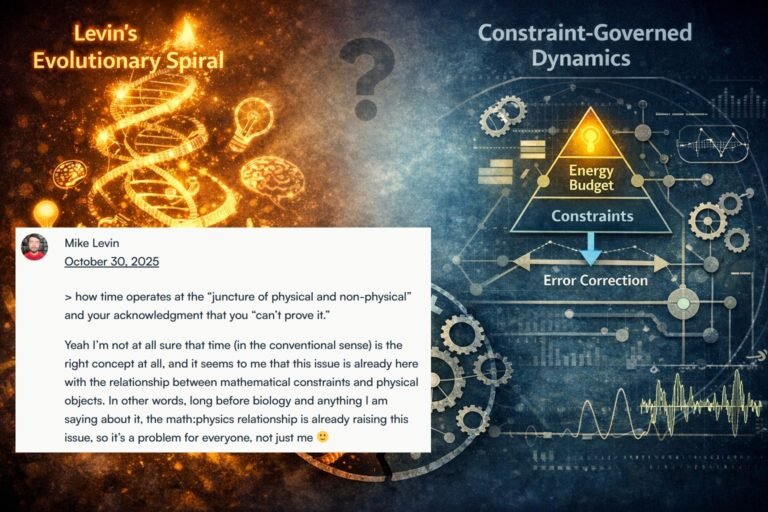

Michael Levin’s bioelectric research is genuinely impressive. His laboratory has demonstrated that manipulating ion channel activity can alter planarian regeneration, induce ectopic eyes, and reshape Xenopus facial structure. The engineering is real. The data are solid.

The metaphysical interpretation, however, raises serious methodological concerns.

Levin claims that organisms “access” pre-existing Platonic forms in an abstract morphospace. Minds are “forms in that space” that “project into the physical world through interfaces.” The morphological outcomes his lab produces are evidence, he claims, that biology accesses timeless patterns rather than constructing them through constraint satisfaction.

Three questions challenge this framework:

1. Interaction Mechanism. How do non-physical Platonic forms causally influence physical bioelectric fields without violating thermodynamic laws? Landauer’s Principle states that logically irreversible information processing, such as erasing a bit, dissipates at least kT ln 2 of energy. If Platonic access is informational, it must pay that thermodynamic cost. If it is not informational, how does it influence anything?

Levin’s response has been to suggest that “a better science of Platonic forms plus their interfaces will force a re-do of Landauer’s Principle.” This is not an answer. This is a promissory note that asks us to abandon verified physics for unspecified future theory.

2. Path-Dependence Compatibility. The Durant et al. (2017) experiments from Levin’s own lab showed that two-headed planarians maintain their altered morphology indefinitely across regeneration cycles. They do not “correct” toward a canonical one-headed form. This demonstrates path-dependent divergence rather than convergence on pre-existing ideal forms (see also Durant et al., 2019).

If morphospace were Platonic, systems should return to canonical forms when perturbations are removed. They don’t. The morphological identity is a bistable attractor determined by bioelectric memory, not a Platonic form exerting gravitational pull.

3. Falsification Criteria. What empirical result would make Levin say “I was wrong, there is no Platonic realm”? In years of public discussion, no answer has been provided. Every apparent counterexample gets absorbed by reinterpretation: the forms are infinite, the access is partial, the interface is noisy.

This is what Antony Flew called “death by a thousand qualifications.” A fine brash hypothesis gets killed by inches as each challenge produces another qualification until nothing empirical remains. A framework that cannot lose is not a framework but a faith.

Misplaced concreteness diagnosis: Morphospace is a useful mathematical abstraction: the space of possible configurations compatible with certain constraints. Levin reifies this abstraction into an actual realm that organisms “access.” The description (state space) becomes an existent (Platonic domain). No transfer principle specifies how physical systems interact with non-physical forms. The reification is immune to falsification because “access” can always be postulated as partial, noisy, or mediated.

The Chladni plate provides the correct alternative: patterns emerge from boundary conditions plus vibration, no template access required. The “forms” are constraint-compatible configurations, not pre-existing targets.

5.3 Basal Cognition: The Structural Isomorphism Problem

Levin’s “Technological Approach to Mind Everywhere” (TAME) and “Diverse Intelligence” frameworks extend cognitive vocabulary to cells, tissues, and bioelectric networks. Cells have “goals,” “preferences,” and “competencies.” Morphogenesis is “problem-solving.” Evolution operates on “agential material.”

The vocabulary is intuitive. But the argument structure raises concerns.

The formal isomorphism:

- Observe impressive outcome (efficiency, complexity, competence in reaching target morphology)

- Compare to weak null model (random search, maximal entropy, unguided development)

- Declare gap too large for ordinary mechanisms

- Install special primitive (intelligence, cognition, basal goal-directedness)

This argumentative structure appears elsewhere in biology debates. When outcomes exceed naive expectations, the inference to a special ingredient is tempting but not warranted. “Basal cognition” as an explanatory primitive shares formal structure with other gap-based arguments:

- Gap argument: Efficiency gap → therefore special property

- Constraint alternative: Selection + thermodynamics → efficient outcomes without primitive

Efficiency is output, not mechanism. Comparing an evolved endpoint to a random starting distribution and calling the gap “cognition” mistakes selection record for causal ingredient.
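The size of the gap between a weak null model and a selective process can be made concrete with a toy comparison. The sketch below is an assumption-laden illustration, not a model of morphogenesis or of the K-metric: the 20-bit target, the single-bit mutation rule, and the function name are all hypothetical. It compares the expected number of blind random draws needed to hit a target configuration against the number of steps taken by mutation plus retention of non-worse variants.

```python
import random


def steps_with_selection(n_bits, rng, max_steps=100_000):
    """Count single-bit mutations needed to satisfy all constraints
    when mutations that reduce the number satisfied are reverted."""
    target = [1] * n_bits
    state = [rng.randint(0, 1) for _ in range(n_bits)]
    satisfied = sum(s == t for s, t in zip(state, target))
    steps = 0
    while satisfied < n_bits and steps < max_steps:
        i = rng.randrange(n_bits)
        state[i] ^= 1  # propose a random single-bit mutation
        new_satisfied = sum(s == t for s, t in zip(state, target))
        if new_satisfied >= satisfied:
            satisfied = new_satisfied  # retain the variant
        else:
            state[i] ^= 1  # revert: the variant was worse
        steps += 1
    return steps


N_BITS = 20  # hypothetical problem size
rng = random.Random(0)  # fixed seed so the run is repeatable
selection_steps = steps_with_selection(N_BITS, rng)
blind_expected = 2 ** N_BITS  # expected draws for uniform blind search

print(f"blind search, expected draws: {blind_expected}")
print(f"mutation plus retention, steps taken: {selection_steps}")
```

The selective process contains no goals, preferences, or competencies, only variation and a retention rule, yet it outperforms blind search by several orders of magnitude. A large gap against a random baseline is what selection looks like, not evidence of an additional ingredient.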

The thermodynamically grounded K-metric that Levin and Chis-Ciure propose is legitimate: it measures efficiency in joules and ATP hydrolysis. But “K-metric is high” does not entail “cognition is present.” Constraint satisfaction under selection pressure produces efficient outcomes without requiring cognitive primitives.

The motte-and-bailey structure:

- Motte (defensible): K-metric provides quantitative efficiency measure

- Bailey (indefensible): Consciousness is fundamental, patterns are subjects, intrinsic agency pervades biology

When pressed, Levin retreats to the motte. When safe, he advances to the bailey. Documented on video: “You think I was going to fold in consciousness into all this stuff until it got settled? No way.”

This is strategic concealment. The published work establishes vocabulary. The real beliefs are more extreme. The oscillation prevents falsification.

Misplaced concreteness diagnosis: “Cognition” is introduced as a descriptive term for efficient constraint satisfaction. It is then reified into a causal primitive that explains the efficiency it was introduced to describe. The description (efficiency-under-selection) becomes an existent (basal cognitive capacity). The transfer from heuristic label to ontological claim happens without argument. When challenged, proponents retreat to the label sense; when unchallenged, they advance to the primitive sense.

What survives: a salvage framing. The critique above concerns interpretive excess, not empirical findings. Levin’s laboratory results are among the most important contributions to contemporary developmental biology. Demonstrations of non-neural control, distributed error correction, bioelectric patterning, and morphogenetic robustness have permanently altered understanding of how living systems organize themselves. Durant et al. (2017, 2019) showed that bioelectric memory in planarians is path-dependent: perturbations at different developmental points produce different stable outcomes, and organisms can be induced to develop alternative anatomies rather than returning to a single “correct” form. This is genuine discovery.

The framework proposed in this paper preserves everything Levin’s experiments justify while stripping away the metaphysical extrapolation. The empirical claim that non-neural biological systems exhibit robust, adaptive, and error-correcting dynamics does not require positing “cognition” as a primitive. It requires recognizing that constraint satisfaction under thermodynamic bounds produces stable, efficient outcomes across scales. The K-metric measuring metabolic efficiency is legitimate science. The inference from “high efficiency” to “cognition all the way down” is not.

This is not a rejection of the research program; it is a refinement of its conceptual vocabulary. By distinguishing between adaptive dynamics (what the data show), control-theoretic regulation (a legitimate intermediate description), and full-fledged agency or cognition (which requires additional criteria), the constraint framework preserves what is strongest in the experimental work while avoiding commitments that render the framework unfalsifiable. The goal is to protect a powerful research program from interpretive excess that would otherwise undermine its scientific standing.

5.4 Quantum Consciousness: Numbers That Don’t Work

Roger Penrose and Stuart Hameroff’s Orchestrated Objective Reduction (Orch-OR) proposes that consciousness arises from quantum computations in microtubules within neurons. Quantum coherence allows superpositions of mental states. Objective reduction (gravitationally induced collapse) produces moments of conscious experience.

The arithmetic is devastating.

Max Tegmark calculated that quantum coherence times in warm, wet neural tissue range from 10^-13 to 10^-20 seconds, with 10^-13 seconds being the most favorable upper bound (Tegmark, 2000). That is a tenth of a trillionth of a second at best. Neural processes operate on timescales of milliseconds to seconds. Even on the most favorable estimate, quantum effects decohere at least ten billion times too fast to play any computational role in brains.
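The scale mismatch can be checked in a few lines. The sketch below uses only the figures quoted above; taking 1 millisecond and 1 second as the endpoints of the neural range is an assumption about how to read “milliseconds to seconds,” and the function name is hypothetical.

```python
import math

# Decoherence estimates quoted from Tegmark (2000), in seconds.
COHERENCE_TIME_BEST_S = 1e-13   # most favorable upper bound
COHERENCE_TIME_WORST_S = 1e-20  # least favorable estimate

# Assumed endpoints for "milliseconds to seconds".
NEURAL_TIME_FAST_S = 1e-3
NEURAL_TIME_SLOW_S = 1.0


def orders_of_magnitude(slow_s: float, fast_s: float) -> float:
    """Return log10 of the ratio between a slow and a fast timescale."""
    return math.log10(slow_s / fast_s)


smallest_gap = orders_of_magnitude(NEURAL_TIME_FAST_S, COHERENCE_TIME_BEST_S)
largest_gap = orders_of_magnitude(NEURAL_TIME_SLOW_S, COHERENCE_TIME_WORST_S)

print(f"smallest gap: about 10^{smallest_gap:.0f}")
print(f"largest gap:  about 10^{largest_gap:.0f}")
```

The smallest gap, fastest neural process against longest coherence time, is ten orders of magnitude. The largest is twenty.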

This is not philosophy. This is arithmetic. The numbers don’t work. Most physicists do not take quantum consciousness seriously because the thermal environment of the brain destroys coherence before anything computationally interesting could happen.

Penrose’s appeal to “new physics” is a promissory note, not a theory. Hameroff’s empirical claims about anesthetic binding to microtubules have not survived replication. The framework has the form of science while lacking the content.

Misplaced concreteness diagnosis: “Quantum coherence” is introduced as a physical mechanism, but the scale mismatch makes it inoperative. The failure here is not reification but mechanistic: the posited mechanism cannot do the work assigned to it because the numbers forbid it.

5.5 Mathematical Universe: Existence Is Cheap

Max Tegmark’s Mathematical Universe Hypothesis (MUH) claims that all mathematical structures physically exist, and our universe is one such structure. Consciousness emerges wherever self-aware substructures exist within mathematical objects.

The problem is that mathematical existence is cheap. Every possible mathematical structure “exists” in the Platonic sense. Every possible conscious being “exists” somewhere in the mathematical multiverse. But this explains nothing about why THIS structure is experienced by THIS observer.

The MUH fails falsifiability: What observation would show that non-physical mathematical structures do NOT exist? The hypothesis is compatible with any observation, which means no observation can count for or against it. It cannot be tested.

The MUH confuses description with existence: Mathematical structures describe physical systems. The description existing does not entail the described existing independently. The map is not the territory, even if the map is very accurate.

The MUH provides no mechanism: How does a mathematical structure “implement” consciousness? What is the bridge between abstract existence and phenomenal experience? Tegmark has no answer that is not a restatement of the question.

Misplaced concreteness diagnosis: The MUH takes mathematical structures (useful abstractions for describing physical systems) and reifies them into independently existing entities that somehow “contain” conscious observers. The description (mathematical model) becomes the existent (mathematical object with inhabitants). This is misplaced concreteness at the grandest possible scale: the entire universe is treated as a concrete instantiation of an abstraction.

5.6 Process Philosophy: Whitehead Without Teeth

Alfred North Whitehead’s process philosophy, developed by contemporary scholars like Matthew Segall, treats experience as fundamental to reality. Every “actual occasion” has both physical and mental poles. The universe is composed of drops of experience in continuous becoming.

The scholarship is impressive. The metaphysics is serious. The engagement with physics is more sophisticated than most consciousness theories.

But the framework cannot lose.

When asked what scientific evidence supports the claim that experience is fundamental, proponents typically respond that “there is no scientific evidence that consciousness exists” as an external, detectable phenomenon. The implication is that scientific methods cannot address phenomenological claims.

This is not wrong as a description of methodological limits. But it is used as a shield against any empirical constraint. If no observation could show that experience is NOT fundamental, then the claim is unfalsifiable. If unfalsifiable, it cannot be preferred to alternatives on scientific grounds.

Process philosophy may be beautiful. It may be comforting. It may even be true. But it cannot demonstrate that it is true, because it has insulated itself from any possible demonstration.

Misplaced concreteness diagnosis: Ironically, Whitehead diagnosed the very fallacy that undermines many applications of his own philosophy. When “experience” is promoted from a descriptive category to a fundamental constituent of reality without falsification criteria, the abstraction (experience-as-concept) becomes reified into a primitive (proto-experience as cosmic ingredient). Whitehead himself was careful about this; many of his followers are not. The framework’s strength (genuine engagement with process and relation) becomes its weakness (unfalsifiable primitivism about experience).

5.7 Higher-Order Thought Theories: The Regress Problem

Higher-Order Thought (HOT) theories, developed by David Rosenthal and empirically extended by Hakwan Lau, propose that a mental state becomes conscious only when accompanied by a higher-order representation of that state (Rosenthal, 2005; Lau & Rosenthal, 2011). Consciousness requires not just perceiving but perceiving that one perceives.

The insight worth preserving: HOT theories correctly identify self-modeling as crucial to consciousness. The constraint-based framework agrees: organizational closure necessarily includes variables that track the system’s own state.

The structural problem: HOT theories treat the higher-order representation as a separate, additional layer sitting atop first-order processing. This creates a regress: Is the higher-order thought itself conscious? If yes, what makes it so, another higher-order thought? If no, how does an unconscious thought confer consciousness on its target?

The constraint framework dissolves this regress. Self-modeling is not an additional representation layered atop first-order processing; it is a constitutive feature of organizational closure itself. The system does not have a thought about its states; its states are structured such that self-reference is built into the constraint satisfaction dynamics. There is no separate “higher-order” layer because the closure is inherently self-referential. The unity of experience is not achieved by a meta-representation observing first-order representations; it is achieved by constraint closure that necessarily includes the system’s own operation within its scope.

The empirical complexity: Experiments showing that prefrontal disruption reduces metacognitive confidence without eliminating perception are compatible with both HOT theories and the constraint framework. Rounis et al. (2010) used TMS to transiently disrupt prefrontal cortex during visual detection: objective discrimination remained intact, but subjects’ confidence and metacognitive accuracy dropped. HOT theories interpret this as evidence that a separate higher-order module was impaired; the constraint framework interprets it as evidence that one component of distributed self-modeling was disrupted while others remained intact.