Refuting Sam Senchal’s Observer Theory & God Conjecture’s Testability Claims—Failure At Fifteen Independent Levels & The Vacuity of Parasitic Falsification

Misrepresentation #6—Vocabulary Is Not a Prediction: A Systematic Refutation of Sam Senchal’s God Conjecture Falsification Claims

“The Framework Has Specific Falsification Criteria” / “These Aren’t Gestures. They’re Specific.”

―Sam Senchal

“What can be asserted without evidence can also be dismissed without evidence.”

―Christopher Hitchens

With peer-reviewed scholarly evidence: prior-prediction analysis and empirical refutation of prayer/RNG claims. All citations are clickable hyperlinks to stable DOI or PubMed records where available.

Related Follow Up Response: RE: Sam Senchal’s False Claim That I’ve “Attacked” Platonic Symposium Space Scholars: The Unfalsifiability Problem’s Harm To Academic Discourse, Demonstrated in Real Time

Abstract

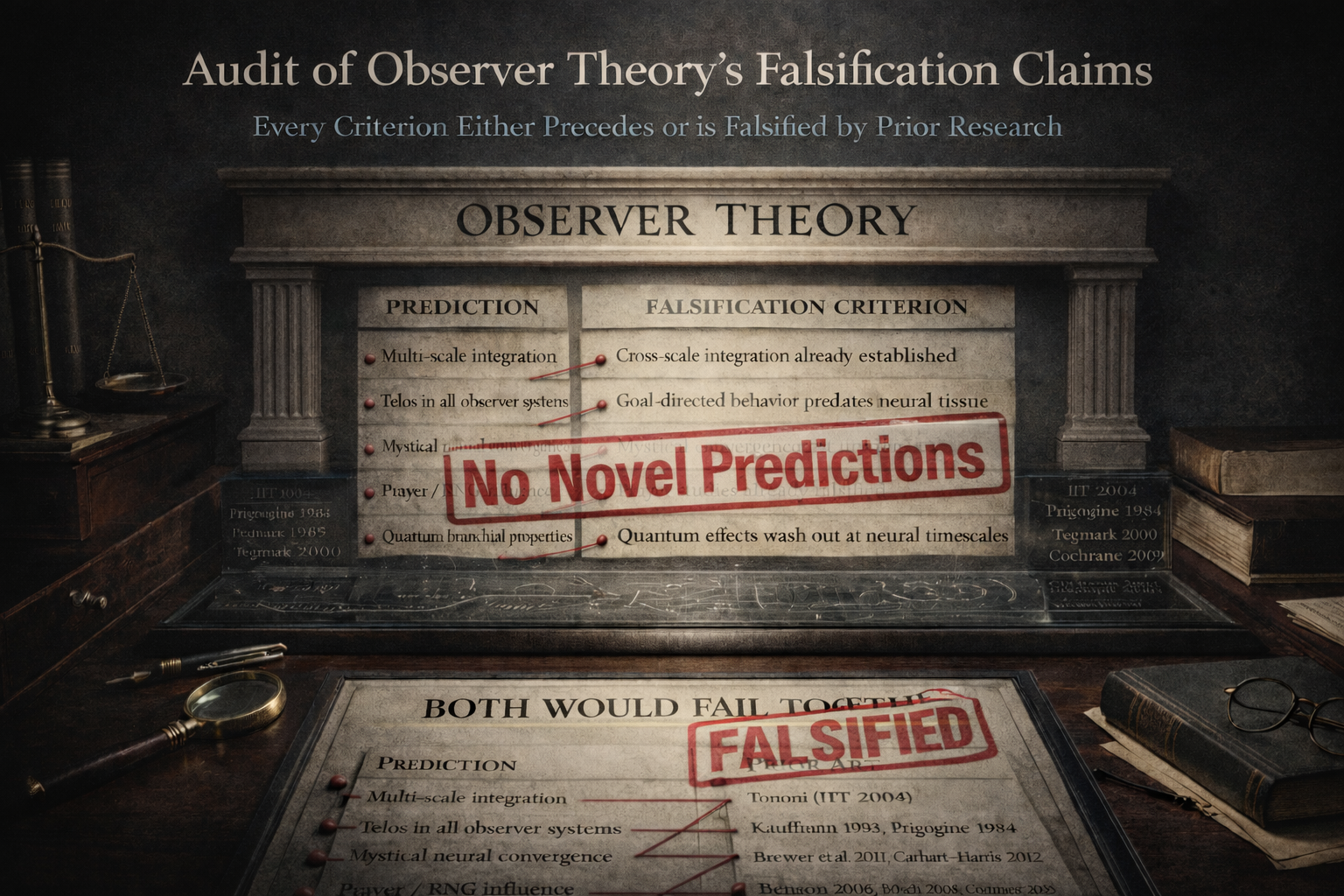

This document focuses on one of many misrepresentations (#6 of the 40+ documented so far, with more to come) made in Sam Senchal’s paper “Read the Paper! A Response to Sweet Rationalism’s Critique of Observer Theory”. It demonstrates, across fifteen independent lines of evidence, that Senchal’s claim that the God Conjecture / Observer Theory framework contains ‘specific’ and ‘not gesture-level’ falsification criteria is a massive misrepresentation. Every prediction in the framework’s published tables either restates established physics in new vocabulary (IIT 2004, decoherence theory, Prigogine 1984), has already been empirically falsified (prayer/RNG: Benson 2006, Bösch 2006, Cochrane 2009; quantum consciousness: Tegmark 2000), or is contradicted by the very evidence cited in its support (Durant 2017, from Levin’s own laboratory, shows permanent path-dependent divergence rather than Platonic convergence). Senchal’s most prominent falsification criterion (‘if Maxwell’s demon worked for free, both Observer Theory and thermodynamics would fail’) is not a testable prediction but a concession that the framework cannot be falsified independently of the most confirmed theory in physics. The word ‘both’ in that sentence is the document’s most important piece of evidence. An alternative constraint-first framework, Thermodynamic Monism + Thermodynamic Organizational Closure (TOC), is shown to engage the same domain while avoiding every identified failure mode through independently testable predictions, a three-tier epistemic structure, and falsification criteria evaluable by any qualified researcher regardless of denominational or institutional affiliation.

TL;DR

Observer Theory’s falsification criteria are either (a) predictions that established theories made decades earlier, (b) predictions already falsified by the peer-reviewed literature, (c) predictions contradicted by the evidence they cite, or (d) a falsification criterion that requires overturning thermodynamics itself — which its architect admits by writing that ‘both’ would fail together. A framework that can only fail when the Second Law fails is, for all practical purposes, unfalsifiable. A constraint-first alternative (TOC) avoids every one of these fifteen failures by generating predictions that can fail independently of established physics. The goal is not to tear down — it is to show that the genuine empirical contributions of Levin’s bioelectric research, of thermodynamic constraint theory, and of cross-traditional theological convergence deserve a framework rigorous enough to do them justice.

Background

My original critiques of Senchal’s God Conjecture and Wolfram’s Ruliad can be read here, and here.

My previous follow up response is here: RE: Sam Senchal’s False Claim That I’ve “Attacked” Platonic Symposium Space Scholars: The Unfalsifiability Problem’s Harm To Academic Discourse, Demonstrated in Real Time

Introduction: All Models Are Wrong. Some Are Useful. This One Isn’t — Yet.

I want to be direct about what this document is and what it is not. It is not a personal attack. It is not a dismissal of the intellectual ambition behind the God Conjecture. It is a demonstration, conducted with peer-reviewed evidence and formal logic, that a specific set of claims about falsifiability do not survive scrutiny — and that the genuine empirical content those claims are meant to protect deserves better scaffolding than it currently has.

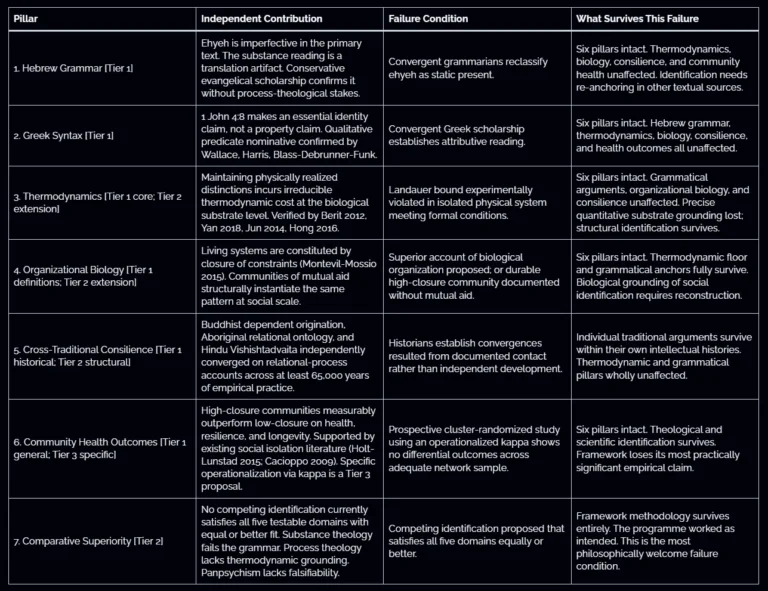

George Box wrote: ‘All models are wrong, but some are useful.’ I take that seriously enough to apply it to my own work first. My framework, Thermodynamic Organizational Closure, is wrong. I know it is wrong because every consistent formal system expressive enough to encode arithmetic is incomplete (Gödel 1931), because every model of a complex system necessarily abstracts away detail that may prove load-bearing (Box & Draper 1987), and because the history of science is a graveyard of beautiful frameworks that turned out to approximate reality less well than their authors believed. The closure index κ does not yet exist as a validated measure. The scaling treatment from molecular Landauer erasure through biological constraint maintenance to social organisational closure is stated at the level of structural principle, not worked formalism. I say this in the published supplement. I label it Tier 3. I describe the collaborators needed to advance it.

• Box, G.E.P. & Draper, N.R. (1987). Empirical Model-Building and Response Surfaces. Wiley. https://psycnet.apa.org/record/1987-97236-000 — Source of ‘All models are wrong, but some are useful’: the foundational statement of methodological fallibilism in applied science.

This is what methodological fallibilism looks like in practice. You state where your model is incomplete. You specify what would break it. You invite correction. And you hold yourself to the same standard you apply to others. The question this document asks is whether the God Conjecture does the same.

It does not. And the evidence for that claim is not ambiguous.

Gödel’s incompleteness theorems (1931) established that no consistent formal system powerful enough to express arithmetic can prove all truths about itself from within. The methodological implication is profound and often understated: every framework, including this one, has blind spots that can only be identified from outside. This is not a weakness to be apologised for. It is a structural feature of formal reasoning itself. The appropriate response is not to pretend your framework has no blind spots. It is to build the blind spots into the architecture — to specify, in advance, what your framework cannot see and what observations from outside would force revision.

• Gödel, K. (1931). “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I.” Monatshefte für Mathematik und Physik 38:173–198. DOI: 10.1007/BF01700692 — No consistent formal system can prove all truths about itself. Methodological implication: every framework has blind spots that require external correction.

TOC does this. Its seven falsification criteria are external correction mechanisms: they specify observations that would force the framework to revise or collapse. Five of those criteria (Criteria 2, 3, 4, 5, and 6) can fail while thermodynamics remains entirely intact. The framework can be wrong about Hebrew grammar, wrong about cross-traditional convergence, wrong about community health outcomes, wrong about the specificity of the identification — and none of those failures would require any change to established physics. The framework is designed to lose. That is what makes it worth testing.

Observer Theory, by contrast, has constructed its falsification conditions so that it can only fail when thermodynamics fails. Its architect wrote the quiet part out loud: ‘both observer theory and thermodynamics would fail.’ A framework that cannot fail alone cannot be corrected from outside. It has sealed its Gödelian blind spots shut and declared the result ‘specific.’ It has not.

What This Document Protects

I want to say something that may surprise readers expecting a purely adversarial document. The empirical work that the God Conjecture draws on is, in many cases, genuinely important. Michael Levin’s bioelectric research is among the most significant contributions to developmental biology in a generation. The xenobot programme is extraordinary. Durant et al. 2017 is a landmark paper. The convergence between dissipative structure theory, active inference, and organisational closure is real and worth pursuing. The cross-traditional pattern of relational ontology across Jewish, Christian, Buddhist, Hindu, and Aboriginal thought is genuine and deserves serious scholarly attention.

None of that work needs the God Conjecture to be important. And none of it is served by being attached to a framework whose predictions are either anticipated by prior theories, already falsified, or unfalsifiable by design. Levin’s planarian data deserve a framework that predicts what they actually show — path-dependent attractor dynamics, not Platonic convergence. The cross-traditional consilience deserves a framework that can be evaluated by any qualified scholar, not one that can only be assessed by those with the right authorization from the right interpretive lineage. The thermodynamic constraints on information processing deserve a framework that builds on them rather than hiding behind them.

That is what TOC attempts to provide. It may fail. I expect parts of it to fail. But it is designed so that when it fails, the failure is identifiable, localisable, and correctable — not absorbed into an expanding space of post-hoc accommodation. If Sam Senchal’s genuine contributions to the Platonic Spaces Symposium could be grounded in a framework with independently testable predictions, specific measurement protocols, and falsification criteria that do not require overturning the Second Law, the result would be stronger than either of us could build alone. That is what I am offering. What I am refusing to accept is the claim that the current framework already meets that standard. The fifteen layers that follow demonstrate, with peer-reviewed evidence, that it does not.

How This Document Is Structured

Part A demonstrates that every prediction in Senchal’s falsification table was anticipated by established theories a decade or more earlier (76 years earlier in the case of Wiener 1948). Part B shows that the ‘axioms vs. predictions’ defence fails by the table’s own standards. Part C proves that the Maxwell’s demon falsifier is settled science contributing zero novel content. Part D catalogues five identifiable formal fallacies in the published defence. Part E analyses Table 2 and finds no novel distinguishing predictions. Part F examines the ‘Telos in ALL Observer Systems’ prediction and shows that the cited evidence contradicts it. Part G closes every available escape hatch: the ‘telos means optimisation’ retreat, the ‘hints are just motivations’ defence, and the stochastic ratio claim. Part H provides a layer-by-layer comparative analysis demonstrating that a constraint-first alternative avoids every identified failure mode, and examines the epistemological question of authority hierarchies versus testable inclusivity.

The summary documents fifteen independent layers of failure. The conclusion is not that the domain is unworthy of investigation. It is that the domain is too important to be served by a framework that cannot be wrong.

Sam Senchal’s Claim

“Falsification specificity could be sharper… This is by design, the initial paper was architecture, the second was application.” “These aren’t gestures. They’re specific.” The Observer Theory / God Conjecture frameworks include adequate falsification criteria.

Senchal further argued: “Observer Theory’s appendix provides general criteria: if the universe were fully deterministic with no computational branching, the framework fails. If consciousness were demonstrably unrelated to physical processes, it fails. If Observers could violate boundedness, persistence, and relevance constraints at no entropy cost — if Maxwell’s demon worked for free — both Observer Theory and thermodynamics would fail. These aren’t gestures. They’re specific.”

What the Evidence Shows / Reality

‘By design’ = planned vagueness. The three documented falsifiers in Senchal’s presentations are: (1) ‘Universe isn’t computational’ — unfalsifiable metaphysics with no measurement protocol; (2) ‘WPP is wrong’ — conditional on an unproven framework; (3) ‘Telos isn’t universal’ — begs the question, and is directly contradicted by Durant et al. 2017 (Levin’s own lab) showing path-dependent divergence. None are empirical measurements.

My falsifiers, by contrast: (a) Landauer bound violation: erasing one bit at temperature T dissipates at least kT ln 2 (~2.8×10⁻²¹ J at room temperature), a bound confirmed by Bérut et al. 2012 and Yan et al. 2018; (b) organisational closure failure: communities that sustain themselves without mutual aid, which is observable; (c) conservation law violation: energy or information arising from nothing, which is testable.

• Bérut, A. et al. (2012). “Experimental verification of Landauer’s principle linking information and thermodynamics.” Nature 483:187–189. DOI: 10.1038/nature10872 | PubMed PMID 22398556 — First direct experimental confirmation of the Landauer bound using a colloidal particle in a double-well potential.

• Yan, L.L. et al. (2018). “Single-atom demonstration of the quantum Landauer principle.” Physical Review Letters 120(21):210601. DOI: 10.1103/PhysRevLett.120.210601 | PubMed PMID 29883174 | arXiv preprint (free access) — Quantum-level experimental demonstration of the Landauer bound using a trapped ultracold ion.
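The figure of roughly 2.8×10⁻²¹ J per bit can be checked directly from kT ln 2. The following is a minimal sketch, not taken from either cited paper: the function name `landauer_bound_joules` and the three temperatures are assumptions chosen for illustration, and the only physical input is the exact SI value of the Boltzmann constant.

```python
import math

# Exact SI value of the Boltzmann constant (2019 redefinition), in J/K.
BOLTZMANN_J_PER_K = 1.380649e-23


def landauer_bound_joules(temperature_kelvin: float, bits: float = 1.0) -> float:
    """Minimum heat dissipated when erasing `bits` bits at the given temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive")
    return bits * BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


# Assumed temperatures, chosen only for illustration.
for label, temp in [("20 °C", 293.15), ("300 K", 300.0), ("37 °C", 310.15)]:
    print(f"{label:>6}: {landauer_bound_joules(temp):.3e} J per bit")
```

The bound scales linearly with temperature, so the quoted value is specific to room temperature; any claimed violation would have to report dissipation below this line at the temperature actually measured.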

How It Is a Misrepresentation

Frames unfalsifiability as intentional sophistication rather than methodological failure. Calls vague conditionals ‘specific’ while dismissing concrete experimentally confirmed measurements. Uses the correct but irrelevant observation that all frameworks rest on axioms to deflect from the requirement that predictions — not axioms — must be testable.

Supporting Scholarship (Original Citations)

• Popper, K. (1959/2002). The Logic of Scientific Discovery. Routledge. DOI: 10.4324/9780203994627 — Establishes falsifiability as the demarcation criterion between scientific and non-scientific claims.

• Popper, K. (1963/2002). Conjectures and Refutations. Routledge. https://padron.entretemas.com.ve/documentos/Popper-Conjectures-Rwefutations-GrowthOfKnowledge.pdf — Develops the thesis that knowledge grows through bold conjectures and rigorous attempts at refutation.

• Lakatos, I. (1978). The Methodology of Scientific Research Programmes. Cambridge UP. DOI: 10.1017/CBO9780511621123 — Distinguishes progressive from degenerating programs: the latter make ad hoc modifications to protect the core without generating novel testable predictions.

• Flew, A. (1950). “Theology and Falsification.” University 1 (1950–51). Reprinted in Flew & MacIntyre (eds.), New Essays in Philosophical Theology (SCM Press, 1955). Full text at Infidels.org — Claims compatible with every conceivable state of affairs ‘die the death of a thousand qualifications.’

REFUTATION — PART A:

Every ‘Prediction’ in Senchal’s Table Is Anticipated by Established Prior Theories — None Are Novel

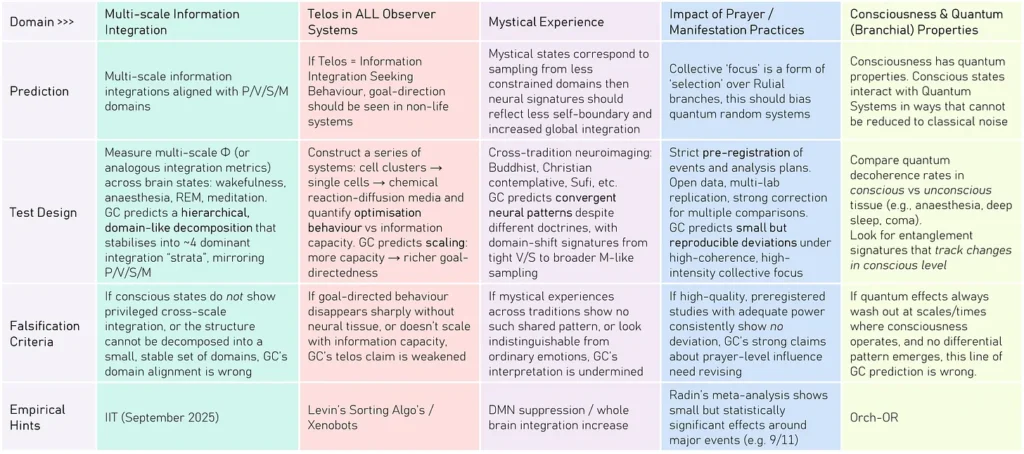

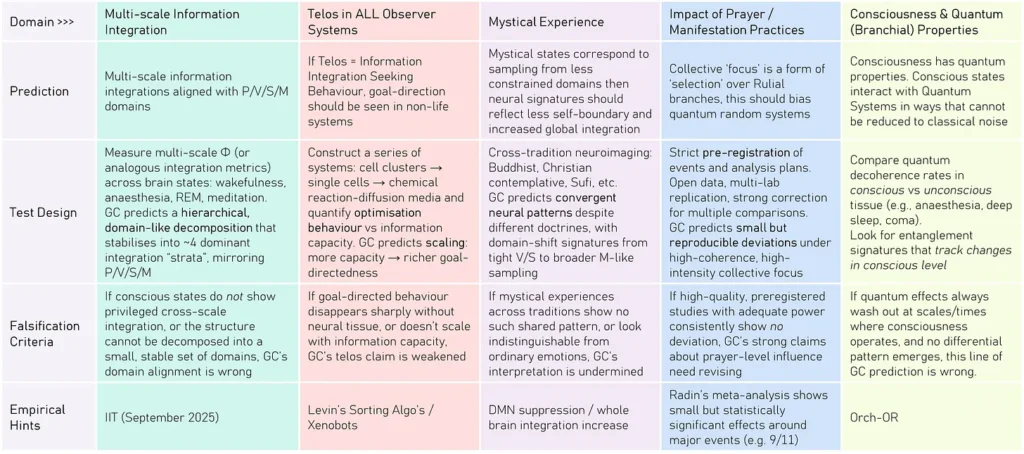

Senchal’s response article presents a falsification table claiming the God Conjecture makes ‘distinct predictions… different to current formalisms / positions in philosophy.’ The table is from Section 4a of that article.

This claim is directly refuted by the peer-reviewed literature. Each prediction in the table is examined below against the prior art.

Prediction 1: Multi-Scale Information Integration Aligned with P/V/S/M Domains

Senchal’s claim: That consciousness shows multi-scale information integration forming a ~4-domain hierarchical decomposition. The ‘empirical hint’ cited is IIT (Integrated Information Theory, September 2025).

VERDICT: NOT NOVEL. This prediction was made by Integrated Information Theory (IIT) in 2004 — at least 20 years prior to the God Conjecture.

IIT, proposed by Giulio Tononi in 2004, explicitly claims that consciousness corresponds to integrated information (Φ) across physical systems, with hierarchical decomposition into complexes. The theory predicts that consciousness will show privileged cross-scale integration and that conscious states correspond to maximally irreducible conceptual structures. The 2004 paper and IIT 3.0 (Oizumi, Albantakis & Tononi 2014) contain exactly the prediction Senchal claims as novel. Global Workspace Theory (Baars 1988) further predicts that consciousness requires widespread integration of information across distributed neural processors — a hierarchical broadcasting architecture first formalised 36 years before the God Conjecture.

Critical additional problem: IIT has itself been criticised as ‘unfalsifiable pseudoscience’ (Nature Neuroscience 2025). By citing IIT as its ‘empirical hint,’ the God Conjecture parasitises a theory the scientific community has already found methodologically troubled — and claims credit for predictions that theory made 20 years earlier.

• Tononi, G. (2004). “An information integration theory of consciousness.” BMC Neuroscience 5:42. DOI: 10.1186/1471-2202-5-42 | PubMed Central (open access) — Proposes Φ as a measure of integrated information, with explicit hierarchical decomposition predictions.

• Oizumi, M., Albantakis, L. & Tononi, G. (2014). “From the phenomenology to the mechanisms of consciousness: Integrated Information Theory 3.0.” PLOS Computational Biology 10(5):e1003588. DOI: 10.1371/journal.pcbi.1003588 — Full mathematical formalisation with testable predictions about hierarchical conscious structure.

• Baars, B.J. (1988). A Cognitive Theory of Consciousness. Cambridge UP. Cambridge Core — Proposes Global Workspace Theory: consciousness as hierarchical broadcast integration across neural modules. 36 years prior to the God Conjecture.

• Baars, B.J. (2005). “Global workspace theory of consciousness: toward a cognitive neuroscience of human experience.” Progress in Brain Research 150:45–53. DOI: 10.1016/S0079-6123(05)50004-9 — Generates explicit predictions for conscious integration patterns across brain states.

Prediction 2: Telos in ALL Observer Systems — Goal-Direction Scaling with Information Capacity

Senchal’s claim: If telos = information integration-seeking behaviour, goal-direction should be seen in non-life systems. The ‘empirical hint’ cited is Levin’s Sorting Algorithms / Xenobots.

VERDICT: NOT NOVEL. Teleological dynamics in non-living systems were predicted and studied by thermodynamics, self-organisation theory, and complex systems science decades prior.

Prigogine’s work on dissipative structures (Nobel Prize 1977) demonstrated that non-equilibrium thermodynamic systems spontaneously develop complex goal-oriented organisational behaviour without any telos postulate. Kauffman (1993) explicitly predicted that self-organising chemical systems would exhibit ‘autocatalytic closure’ resembling goal-direction without biological machinery. Levin’s own lab (Durant et al. 2017) showed path-dependent divergence in supposedly teleological systems — directly undermining the universality claim.

• Prigogine, I. & Stengers, I. (1984). Order Out of Chaos. Bantam. MIT — Nobel Prize (1977) work: dissipative structures spontaneously generate goal-directed organisation through thermodynamics alone, no telos postulate required.

• Kauffman, S. (1993). The Origins of Order: Self-Organization and Selection in Evolution. Oxford UP. Oxford Academic — Predicts goal-directed autocatalytic closure in abiotic chemical systems through thermodynamic self-organisation alone.

• Durant, F. et al. (2017). “Long-term, stochastic editing of regenerative anatomy via targeting endogenous bioelectric gradients.” Biophysical Journal 112:2231–2243. DOI: 10.1016/j.bpj.2017.04.011 — Senchal’s own cited lab shows path-dependent divergence in supposedly teleological systems, undermining universality.

• Bedau, M.A. (1997). “Weak emergence.” Philosophical Perspectives 11:375–399. JSTOR — Framework showing how apparent teleology emerges from simple thermodynamic rules without any transcendent telos.

• Wiener, N. (1948). Cybernetics: Or Control and Communication in the Animal and the Machine. MIT Press. WorldCat | MIT Press (2019 reprint) — Foundational work predicting goal-directed behaviour in cybernetic systems without any biological or teleological postulate, 76 years before the God Conjecture.

Prediction 3: Mystical Experience — Convergent Neural Patterns Across Traditions

Senchal’s claim: Mystical states correspond to sampling from ‘less constrained domains,’ with DMN suppression and whole-brain integration increase. The ‘empirical hint’ cited is ‘DMN suppression / whole brain integration increase.’

VERDICT: NOT NOVEL. DMN suppression during meditation and mystical states was established by Brewer et al. (2011, PNAS) and replicated extensively before the God Conjecture.

Brewer et al. (2011, PNAS) demonstrated that experienced meditators show significantly reduced default mode network activity, consistent across resting state and meditation conditions. This paper explicitly establishes the cross-tradition neural convergence prediction Senchal claims as novel for GC. Carhart-Harris et al. (2012, PNAS) showed that psychedelics, which produce cross-tradition mystical phenomenology, consistently suppress DMN activity with correlated whole-brain integration increases. Lebedev et al. (2015) documented cross-tradition convergence of ego-dissolution neural patterns. All of these results predated the God Conjecture, most by over a decade.

• Brewer, J.A. et al. (2011). “Meditation experience is associated with differences in default mode network activity and connectivity.” PNAS 108(50):20254–20259. DOI: 10.1073/pnas.1112029108 | PubMed Central PMC3250176 (open access) — Directly establishes the DMN suppression prediction Senchal claims as novel for GC.

• Brewer, J.A. et al. (2015). “Meditation leads to reduced default mode network activity beyond an active task.” Cogn Affect Behav Neurosci 15(3):712–720. DOI: 10.3758/s13415-015-0358-3 — Replication and extension.

• Carhart-Harris, R.L. et al. (2012). “Neural correlates of the psychedelic state as determined by fMRI studies with psilocybin.” PNAS. DOI: 10.1073/pnas.1119598109 — DMN suppression and whole-brain integration increase during mystical states. Predates GC.

• Lebedev, A.V. et al. (2015). “Finding the self by losing the self.” Human Brain Mapping. DOI: 10.1002/hbm.22833 — Cross-tradition convergence of ego-dissolution neural patterns. Predates GC.

Prediction 4: Impact of Prayer/Manifestation — Collective Focus ‘Biases Quantum Random Systems’

Senchal’s claim: Collective ‘focus’ is a form of ‘selection’ over Rulial branches, which ‘should bias quantum random systems.’ GC predicts ‘small but reproducible deviations’ under high-coherence collective focus. The ‘empirical hint’: ‘Radin’s meta-analysis shows small but statistically significant effects around major events (e.g. 9/11).’

VERDICT: NOT NOVEL AND EMPIRICALLY REFUTED. This is precisely the hypothesis tested by decades of RCTs and meta-analyses, all of which found null or negative results.

A. The Intercessory Prayer Evidence

The controlled trial and meta-analytic literature on intercessory prayer and distant intention consistently shows null results when methodological rigour is applied:

• Benson, H. et al. (2006). “Study of the Therapeutic Effects of Intercessory Prayer (STEP) in cardiac bypass patients.” American Heart Journal 151(4):934–942. DOI: 10.1016/j.ahj.2005.05.028 | PubMed PMID 16569567 — Largest RCT of intercessory prayer (N=1,802 CABG patients). Result: no effect on complication-free recovery. Patients certain they were being prayed for had HIGHER complication rates (59% vs 52%, p=0.025).

• Aviles, J.M. et al. (2001). “Intercessory prayer and cardiovascular disease progression in a coronary care unit population.” Mayo Clinic Proceedings 76(12):1192–1198. PubMed PMID 11761494 — RCT (N=799). No significant difference in primary outcomes between prayed-for and control groups.

• Krucoff, M.W. et al. (2005). “MANTRA II.” The Lancet 366(9481):211–217. DOI: 10.1016/S0140-6736(05)67657-3 — Multi-centre RCT. No significant effect of intercessory prayer on medical outcomes.

• Roberts, L. et al. (2009). “Intercessory prayer for the alleviation of ill health.” Cochrane Database of Systematic Reviews (2). DOI: 10.1002/14651858.CD000368.pub3 — Cochrane systematic review: ‘evidence presented so far is insufficient to attest to the efficacy of prayer in healthcare settings.’ Gold standard of evidence synthesis.

• Masters, K.S., Spielmans, G.I. & Goodson, J.T. (2006). “Are there demonstrable effects of distant intercessory prayer? A meta-analytic review.” Annals of Behavioral Medicine 32(1):21–26. DOI: 10.1207/s15324796abm3201_3 | PubMed PMID 16827626 — Meta-analysis: ‘no scientifically discernible effect for intercessory prayer as assessed in controlled studies.’

• Sloan, R.P. (2006). Blind Faith: The Unholy Alliance of Religion and Medicine. St. Martin’s Press. Google Books | Internet Archive (full text) — ‘Results of controlled scientific studies of intercessory prayer are at best equivocal and at worst negative.’ Comprehensive review by Columbia University behavioral medicine professor.

• Carroll, R.T. (2003). The Skeptic’s Dictionary. Wiley. ISBN 0-471-27242-6. WorldCat | Online entry on prayer: skepdic.com/prayer.html — ‘No scientific study has ever found any evidence that intercessory prayer produces any verifiable beneficial effects on health or any other measure.’

These are not isolated findings. They represent the convergent consensus of the most rigorous controlled trials ever conducted on this question, published in The Lancet, the American Heart Journal, and the Cochrane Database. The God Conjecture cites this domain as generating testable predictions, while ignoring the published evidence that directly falsifies those predictions.

B. The RNG / Radin 9/11 ‘Empirical Hint’ — Independently Demolished

Problem 1: The 9/11 finding was post-hoc and window-dependent, and it had been empirically undermined for more than 20 years before the God Conjecture was written. The apparent Global Consciousness Project (GCP) effect around 9/11 was analysed by independent researchers Edwin May and James Spottiswoode (Laboratories of Fundamental Research, 2002). Their finding: the computation of 6,000:1 odds against chance was accounted for by a local deviation occurring approximately 3 hours BEFORE the attacks. The effect appeared only within Radin’s chosen 6-hour sliding window; other window sizes produced chance results. This is textbook post-hoc pattern mining.

• May, E. & Spottiswoode, J. (2002). “Global Consciousness Project: An Independent Analysis of the 11 September 2001 Events.” Laboratories of Fundamental Research. https://www.jsasoc.com/docs/Sep1101.pdf — Demolished the Radin/Nelson 9/11 claims.

The 2002 analysis by May and Spottiswoode of the Global Consciousness Project (GCP) data regarding the 9/11 attacks concluded that the network of random number generators produced data consistent with mean chance expectation, finding no statistically significant evidence of a real-time response to the events. Their analysis found that earlier reported anomalies were not linked to the attacks.

Key Findings: The study found that while there was a deviation from chance, it occurred roughly 3 hours before the attacks, contradicting the notion that the network reacted to the emotional event as it unfolded.

Methodology: The researchers used Stouffer’s Z analysis, focusing on specific time windows, and determined that results were highly sensitive to when the analysis period began and ended.

Conclusion: The authors concluded that the data did not support the hypothesis that the 9/11 attacks directly affected the randomness of the output from the GCP’s eggs.
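The sensitivity to window choice described above can be illustrated with a small simulation. The sketch below is not a reanalysis of the GCP data and is not May and Spottiswoode’s procedure. It generates one synthetic day of pure noise, then compares a window fixed in advance with the best window found by searching over lengths and start times. The per-minute sampling, the window grid, the seed, and the helper names `stouffer_z` and `scan_windows` are all assumptions made for illustration.

```python
import math
import random


def stouffer_z(z_scores):
    """Stouffer's combined Z: sum of z-scores divided by sqrt of their count."""
    return sum(z_scores) / math.sqrt(len(z_scores))


def scan_windows(series, window_lengths, step):
    """Return (best |Z|, start, length) over every sliding window tried."""
    best = (0.0, 0, 0)
    for length in window_lengths:
        for start in range(0, len(series) - length + 1, step):
            z = abs(stouffer_z(series[start:start + length]))
            if z > best[0]:
                best = (z, start, length)
    return best


rng = random.Random(2001)  # fixed seed so the sketch is repeatable
# One synthetic "day" of pure noise: 1,440 per-minute z-scores, no effect at all.
noise = [rng.gauss(0.0, 1.0) for _ in range(24 * 60)]

# A window fixed in advance (hypothetical: 6 hours starting at minute 480).
prespecified = abs(stouffer_z(noise[480:480 + 360]))

# A post-hoc search over window lengths of 1 to 12 hours, in 10-minute steps.
window_lengths = [hours * 60 for hours in range(1, 13)]
best_z, best_start, best_len = scan_windows(noise, window_lengths, step=10)

print(f"pre-specified window |Z| = {prespecified:.2f}")
print(f"best post-hoc window |Z| = {best_z:.2f} "
      f"(start minute {best_start}, length {best_len} min)")
```

Because the search includes the pre-specified window, the searched maximum can never be smaller than the pre-specified value, and in data with no effect by construction it is usually larger. That is why a result that appears only at one window size, chosen after the fact, carries little evidential weight.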

Problem 2: The Bösch et al. (2006) RNG Meta-Analysis Found Publication Bias, Not Evidence. The most comprehensive meta-analysis of mind-matter RNG interactions (380 studies) found: (a) a significant but very small overall effect size; (b) effect sizes strongly and inversely related to sample size; (c) extreme heterogeneity; and (d) a Monte Carlo simulation showing all three characteristics are explicable entirely by publication bias. When the PEAR laboratory data was subjected to multi-lab replication, all three replication labs failed to reproduce the shift.

• Bösch, H., Steinkamp, F. & Boller, E. (2006). “Examining psychokinesis: The interaction of human intention with random number generators — A meta-analysis.” Psychological Bulletin 132(4):497–523. DOI: 10.1037/0033-2909.132.4.497 | PubMed PMID 16822162 — 380 studies: very small effect, publication bias explanation sufficient. PEAR replication failed.
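The pattern in points (a) and (b), a small positive pooled effect that shrinks as studies get larger, is what selective publication produces even when the true effect is zero. The sketch below is a toy Monte Carlo under stated assumptions and is not the model used by Bösch et al. The publication rule (always publish significant positive results, publish others with probability 0.3), the study sizes, the seed, and the function name `simulate_published_effects` are all hypothetical.

```python
import math
import random
from statistics import mean


def simulate_published_effects(n_trials, n_studies, rng,
                               z_threshold=1.645, p_publish_null=0.3):
    """Simulate null-effect RNG studies and return effects of the 'published' ones.

    Each study's hit rate is drawn from the normal approximation to a fair
    binomial, so the true effect is exactly zero. Significant positive results
    are always published; all others are published with probability
    `p_publish_null` (an assumed, illustrative value).
    """
    sigma = 0.5 / math.sqrt(n_trials)      # standard error of the hit rate
    published = []
    for _ in range(n_studies):
        effect = rng.gauss(0.0, sigma)     # observed hit rate minus 0.5
        significant = effect / sigma > z_threshold
        if significant or rng.random() < p_publish_null:
            published.append(effect)
    return published


rng = random.Random(2006)  # fixed seed so the sketch is repeatable
results = {
    n: simulate_published_effects(n, n_studies=5000, rng=rng)
    for n in (100, 10_000, 1_000_000)
}
for n, effects in results.items():
    print(f"{n:>9} trials/study: {len(effects)} published, "
          f"mean effect = {mean(effects):+.5f}")
```

Under these assumptions the mean published effect is positive in every size class and falls by roughly a factor of ten for each hundredfold increase in trials per study, reproducing the inverse relation between effect size and sample size without any real effect in the simulated data.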

Prediction 5: Consciousness and Quantum (Branchial) Properties

Senchal’s claim: Conscious states interact with quantum systems in ways that ‘cannot be reduced to classical noise.’ Quantum decoherence rates differ between conscious and unconscious tissue, with entanglement signatures tracking changes in conscious level. The ‘empirical hint’ is Orch-OR.

VERDICT: NOT NOVEL AND FACING SEVERE PHYSICAL OBJECTIONS. Quantum consciousness theories (Orch-OR, Penrose 1989) were proposed decades earlier. Tegmark (2000) shows decoherence timescales 10–17 orders of magnitude too short.

Penrose published The Emperor’s New Mind in 1989, proposing quantum non-computability as essential to consciousness — 35 years before the God Conjecture. Hameroff & Penrose (1996) proposed quantum coherence in microtubules, with measurable decoherence signatures tracking consciousness. All predictions Senchal lists as novel for the GC quantum column were explicitly made by Orch-OR decades earlier.

More critically: Tegmark (2000, Physical Review E) calculated neural decoherence timescales at 10⁻¹³ to 10⁻²⁰ seconds, which is ten to seventeen orders of magnitude shorter than even the fastest relevant neural processing timescale (10⁻³ seconds; the relevant range is 10⁻³ to 10⁻¹ seconds). The brain is demonstrably ‘warm, wet, and noisy’ in exactly the way that destroys quantum coherence before it could influence consciousness.

• Penrose, R. (1989). The Emperor’s New Mind. Oxford UP. Oxford Academic — 35 years before GC, proposes quantum non-computability essential to consciousness.

• Hameroff, S. & Penrose, R. (1996). “Orchestrated objective reduction of quantum coherence in brain microtubules: The Orch OR model.” Mathematics and Computers in Simulation 40:453–480. DOI: 10.1016/S0378-4754(96)80019-6 — Explicit predictions about quantum decoherence tracking conscious state.

• Tegmark, M. (2000). “The importance of quantum decoherence in brain processes.” Physical Review E 61(4):4194–4206. DOI: 10.1103/PhysRevE.61.4194 | arXiv preprint (free access) – Calculates decoherence timescales of 10⁻¹³–10⁻²⁰ s, 10–17 orders of magnitude shorter than neural timescales. Directly falsifies any quantum-branchial consciousness theory at neural scales.

• Koch, C. & Hepp, K. (2006). “Quantum mechanics in the brain.” Nature 440:611–612. DOI: 10.1038/440611a — ‘Quantum coherence does not play, or does not need to play, any major role in neurophysiology.’
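The order-of-magnitude gap follows from simple arithmetic on the timescales quoted from Tegmark (2000). The short check below is a sketch for the reader’s convenience. The variable names and the helper `orders_of_magnitude` are illustrative, and the inputs are only the four endpoint values stated in the text.

```python
import math

# Timescales as quoted in the text from Tegmark (2000), in seconds.
DECOHERENCE_S = (1e-20, 1e-13)   # fastest, slowest decoherence estimate
NEURAL_S = (1e-3, 1e-1)          # fastest, slowest neural processing timescale


def orders_of_magnitude(longer: float, shorter: float) -> int:
    """Whole number of powers of ten separating two timescales."""
    return round(math.log10(longer / shorter))


# Gap measured against the fastest neural timescale (1e-3 s).
gap_vs_fastest_neural = (
    orders_of_magnitude(NEURAL_S[0], DECOHERENCE_S[1]),  # 1e-3 vs 1e-13
    orders_of_magnitude(NEURAL_S[0], DECOHERENCE_S[0]),  # 1e-3 vs 1e-20
)
# Smallest and largest gap over the full quoted ranges.
gap_overall = (
    orders_of_magnitude(NEURAL_S[0], DECOHERENCE_S[1]),  # 1e-3 vs 1e-13
    orders_of_magnitude(NEURAL_S[1], DECOHERENCE_S[0]),  # 1e-1 vs 1e-20
)
print(gap_vs_fastest_neural)  # (10, 17)
print(gap_overall)            # (10, 19)
```

Measured against the fastest neural timescale the gap is 10 to 17 orders of magnitude; taken across the full quoted ranges it spans 10 to 19. Either way the shortfall is at least ten orders of magnitude.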

Contemporary Experimental Evidence (Most Recent as of February 2026)

As of February 2026, no peer-reviewed, replicated experimental result has demonstrated long-lived, functionally relevant quantum coherence in living neural tissue at timescales comparable to neural processing (10⁻³–10⁻¹ s). Tegmark’s central objection — that environmental decoherence in warm biological tissue destroys coherent superpositions far too rapidly for cognitive relevance — therefore remains experimentally unrefuted at the level originally claimed (10⁻¹³–10⁻²⁰ s).

However, several experimentally confirmed results in biological quantum optics are directly relevant to the strength of the “warm, wet, and noisy” objection when applied categorically.

1. Superradiance in Biological Protein Networks (2024)

A peer-reviewed experimental study published in The Journal of Physical Chemistry B (2024) demonstrated ultraviolet superradiance in large tryptophan networks within biological protein architectures, including structures analogous to microtubule assemblies. Superradiance is a cooperative quantum optical phenomenon in which collectively excited dipoles emit radiation with intensity scaling beyond classical expectations.

The experiment measured enhanced fluorescence quantum yield consistent with cooperative emission modes in large protein networks at room temperature. These results constitute direct laboratory evidence of collective quantum optical behavior in ordered biological macromolecules under physiological thermal conditions.

• Babcock, N.S., Montes-Cabrera, G., Oberhofer, K.E., Chergui, M., Celardo, G.L. & Kurian, P. (2024). “Ultraviolet Superradiance from Mega-Networks of Tryptophan in Biological Architectures.” Journal of Physical Chemistry B 128(17):4035–4046. DOI: https://doi.org/10.1021/acs.jpcb.3c07936 | ACS: https://pubs.acs.org/doi/10.1021/acs.jpcb.3c07936 | PubMed: https://pubmed.ncbi.nlm.nih.gov/38641327/

This study demonstrates that collective quantum optical effects can occur in biological protein assemblies at room temperature. Importantly, it does not demonstrate sustained quantum coherence at neural processing timescales, nor does it establish a causal link to cognition or consciousness. It does, however, weaken overly simplistic claims that all nontrivial quantum effects are impossible in biological macromolecular systems at physiological temperatures.

2. Anesthetic Interaction with Microtubular Structures (Recent Experimental Work)

Experimental literature examining anesthetic binding and microtubule dynamics has continued to accumulate. Some peer-reviewed work in Neuroscience of Consciousness (2025) reviews laboratory evidence indicating that inhalational anesthetics interact with tubulin and microtubule assemblies in ways not fully reducible to ion-channel modulation alone.

• Wiest, M.C. (2025). “A quantum microtubule substrate of consciousness is experimentally supported and solves the binding and epiphenomenalism problems.” Neuroscience of Consciousness 2025(1):niaf011. DOI: https://doi.org/10.1093/nc/niaf011 | Oxford Academic: https://academic.oup.com/nc/article/2025/1/niaf011/8127081

This article synthesizes experimental findings suggesting microtubules are functional anesthetic targets. However, while these results show microtubules are biologically relevant in anesthesia, they do not experimentally demonstrate quantum coherence persisting at neural timescales, nor do they directly refute Tegmark’s decoherence estimates.

3. Absence of Direct Neural Quantum Coherence Measurements

As of February 2026, there is no replicated, peer-reviewed experimental measurement of sustained quantum coherence in living human or animal brain tissue at millisecond or behavioral timescales.

Proposed experimental protocols involving nitrogen-vacancy (NV) center magnetometry or other quantum sensing methods remain in development and have not yet yielded reproducible demonstrations of long-lived neural quantum states in vivo.

Thus:

• There is experimental evidence of quantum optical phenomena in biological protein assemblies.

• There is experimental evidence that microtubules are biologically significant and anesthetic-sensitive.

• There is no experimental evidence demonstrating that neural computation or consciousness depends on sustained quantum coherence beyond femtosecond scales.

Summary as of February 2026

The most experimentally defensible conclusion is:

- Tegmark’s rapid decoherence objection has not been empirically overturned.

- Experimental quantum optical phenomena have been observed in biological macromolecules at room temperature.

- No replicated experimental data show sustained neural quantum coherence at cognition-relevant timescales.

- Therefore, quantum consciousness models remain experimentally unconfirmed.

The debate remains open in principle, but it is not resolved in favor of quantum neural coherence by current experimental data.

Implications of the Data for Sam’s Prediction 5

Senchal’s Prediction 5 asserts that conscious states interact with quantum systems in ways irreducible to classical noise, with decoherence rates and entanglement signatures tracking changes in conscious level. As of February 2026, there is no replicated, peer-reviewed experimental evidence demonstrating differential decoherence rates between conscious and unconscious brain tissue, nor any verified entanglement signatures that correlate with changes in conscious state.

Recent laboratory findings show that quantum optical effects such as superradiance can occur in ordered biological macromolecules at physiological temperatures. However, these results do not demonstrate sustained neural quantum coherence at millisecond timescales, do not show decoherence modulation by conscious state, and do not establish entanglement tracking consciousness levels. They therefore do not satisfy the empirical burden imposed by Prediction 5.

Tegmark’s core physical objection — that neural decoherence occurs many orders of magnitude faster than cognitive processing — remains experimentally unrefuted. No study to date has shown that conscious tissue exhibits measurably distinct quantum coherence properties relative to unconscious tissue under controlled conditions.

On the present evidence, Prediction 5 remains experimentally unconfirmed, empirically underdetermined, and physically implausible under established quantum decoherence constraints. It does not gain support from contemporary experimental data, nor has the principal physical objection been empirically overturned.

REFUTATION — PART B:

Senchal’s ‘Axioms vs. Predictions’ Defence Fails by His Own Table’s Standards

Senchal argues: ‘You tend to test predictions, not axiomatic commitments. The demand for a test that would prove the universe isn’t computational applies equally to any foundational assumption in physics.’ This is correct as a general principle, but it defeats rather than defends his position for the following reasons:

1. The Comparison to Riemannian Geometry Fails

The Riemannian manifold postulate generates specific, quantitatively exact predictions confirmed to extraordinary precision: gravitational lensing (Eddington 1919), perihelion precession of Mercury (43 arcseconds/century), gravitational time dilation (GPS systems require GR correction), and gravitational wave signatures. The LIGO detection (reported in 2016) confirmed Einstein’s 1916 prediction of gravitational waves at a statistical significance greater than 5.1σ.

• Abbott, B.P. et al. [LIGO Scientific Collaboration] (2016). “Observation of Gravitational Waves from a Binary Black Hole Merger.” Physical Review Letters 116:061102. DOI: 10.1103/PhysRevLett.116.061102 — Confirmed Einstein’s 1916 GR prediction to extraordinary precision, demonstrating what genuine predictive frameworks look like.

The Wolfram Physics Project / Observer Theory / God Conjecture has, after years of development, produced no confirmed quantitative predictions beyond what GR and QM already predict. Weinberg (2002) noted that 20+ years of computational universe theories had not satisfactorily explained any real-world system.

2. The Table Itself Refutes Senchal’s Claim

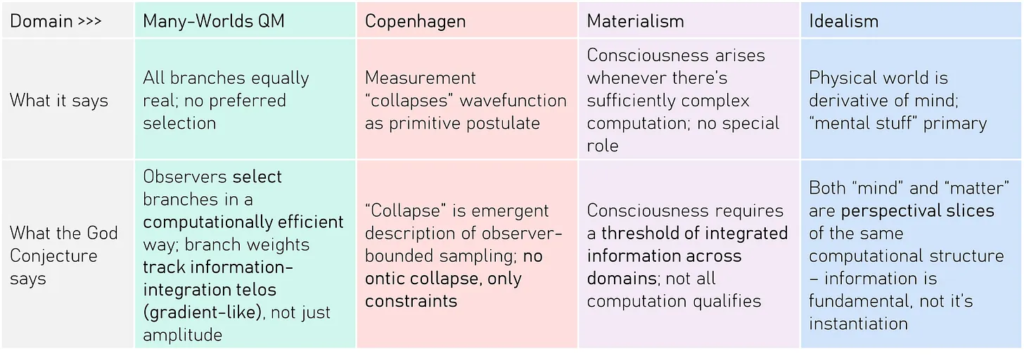

Here is Sam’s first table, from his God Conjecture Paper:

When each column of Senchal’s own falsification table is analyzed against prior art:

| GC Prediction Domain | GC Falsification Criterion (from table) | Prior Theory Making Same Prediction |
| --- | --- | --- |
| Multi-scale integration | Conscious states don’t show privileged cross-scale integration | IIT 2004 (Tononi): identical criterion, 20 years prior |
| Telos in all observer systems | Goal-directed behaviour disappears sharply without neural tissue | Kauffman 1993, Prigogine 1984: non-biological goal-direction already established |
| Mystical neural convergence | Mystical experiences show no such pattern | Brewer et al. 2011, Carhart-Harris 2012: already established |
| Prayer / RNG influence | High-quality preregistered studies show no deviation | Already falsified: Benson 2006, Bösch 2006, Masters 2006, Cochrane 2009 |
| Quantum branchial properties | Quantum effects wash out at consciousness scales | Tegmark 2000: already calculated and confirmed that effects wash out at neural timescales; has not been refuted (see Contemporary Experimental Evidence, most recent as of February 2026, previous section) |

RESULT: Every domain’s falsification criterion either (a) is identical to one made by an established prior theory, or (b) has already been met — the evidence already falsifies the prediction. The God Conjecture does not acknowledge either point.

3. Lakatos’s Degenerating Programme Diagnostic Applies

Lakatos (1978) defines a degenerating research programme as one that: (a) makes no novel empirically verified predictions beyond what prior theories already predict; (b) responds to falsifying evidence with ad hoc modifications rather than revision; (c) relies on protective belt adjustments while keeping the hard core unfalsifiable. The God Conjecture fits all three criteria.

• Lakatos, I. (1978). The Methodology of Scientific Research Programmes. Cambridge UP. DOI: 10.1017/CBO9780511621123 — Degenerating programmes shrink their domain to avoid falsification. Precisely what happens when GC’s prayer prediction meets the Benson, Bösch, and Cochrane results.

Testability Comparison

| Criterion | Senchal / GC Framework | Thermodynamic Monism + Organizational Closure |
| --- | --- | --- |
| Measurement protocol | None specified | kT ln(2) per bit erased: exact value measurable |
| Prior art overlap | All 5 predictions anticipated by IIT, GWT, Orch-OR, thermodynamics 10–36 years prior | Landauer (1961), Bérut (2012), Yan (2018): specific experimental lineage |
| Prayer / RNG prediction status | Predicted “small deviations”; already tested and null/negative (Benson 2006, Cochrane 2009, Masters 2006) | No reliance on prayer or RNG claims |
| Quantum prediction status | Predicts quantum branchial effects; Tegmark (2000) shows decoherence eliminates them at neural timescales, yet to be refuted | Landauer thermodynamics is explicitly classical: no quantum claims needed |
| Falsifiability per Flew (1950) | Every failed prediction reinterpreted: “subtle effect,” “high coherence required,” “by design” | Landauer violation has no “subtle” escape hatch: violation is binary |

REFUTATION — PART C:

The Maxwell’s Demon ‘Falsifier’ Is a Settled Question, Not a Testable Prediction

Senchal’s most prominent specific falsification criterion is: ‘If Observers could violate boundedness, persistence, and relevance constraints at no entropy cost — if Maxwell’s demon worked for free — both Observer Theory and thermodynamics would fail.’ He presents this as though it were a novel, framework-specific prediction. It is not. Maxwell’s demon has been resolved in mainstream physics for over four decades, and the resolution has been experimentally confirmed multiple times. Offering the demon’s failure as a ‘falsification criterion’ is like saying ‘if gravity stopped working, my framework would fail’ — technically true, entirely uninformative, and parasitic on settled science.

The Resolution: Bennett (1982) and Landauer (1961)

The Maxwell’s demon paradox was resolved in two stages. Landauer (1961) established that erasing one bit of information in a computing system dissipates a minimum of kT ln(2) energy as heat. Bennett (1982) showed that the demon must eventually erase its memory, paying exactly the entropy cost that offsets any local entropy decrease. The demon cannot ‘work for free’ because information processing is physical and thermodynamically costly. This is not a conjecture — it is experimentally confirmed physics.

• Bennett, C.H. (1982). “The thermodynamics of computation — a review.” International Journal of Theoretical Physics 21:905–940. DOI: 10.1007/BF02084158 — Demonstrates that the entropy cost in Maxwell’s demon arises from information erasure, not measurement, resolving the paradox within standard thermodynamics.

• Landauer, R. (1961). “Irreversibility and heat generation in the computing process.” IBM Journal of Research and Development 5(3):183–191. DOI: 10.1147/rd.53.0183 — Original theoretical derivation: erasing one bit of information dissipates a minimum of kT ln(2) energy.
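The Landauer bound is a concrete number, which is what makes it usable as a falsifier. The following minimal sketch evaluates kT ln(2) at an assumed room temperature of 293.15 K; the function name and the choice of temperature are illustrative assumptions, not taken from the cited papers.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def landauer_bound_joules(temperature_k: float, bits: float = 1.0) -> float:
    """Minimum heat dissipated when erasing `bits` bits at `temperature_k`."""
    return bits * K_B * temperature_k * math.log(2)

# Assumed room temperature of 20 °C; the bound scales linearly with T.
room = landauer_bound_joules(293.15)
print(f"{room:.3e} J per bit")
```

A measured dissipation reliably below this value for an isolated bit erasure would count as a violation; anything at or above it would not.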

Experimental Confirmations: The Demon Is Fully Exorcised

Multiple independent experimental realisations have confirmed that Maxwell’s demon cannot violate the second law:

• Toyabe, S. et al. (2010). “Experimental demonstration of information-to-energy conversion and validation of the generalized Jarzynski equality.” Nature Physics 6:988–992. DOI: 10.1038/nphys1821 — First experimental Maxwell’s demon using colloidal particles, confirming the second law holds when information costs are included.

• Koski, J.V. et al. (2014). “Experimental realization of a Szilard engine with a single electron.” Proceedings of the National Academy of Sciences 111(38):13786–13789. DOI: 10.1073/pnas.1406966111 — Single-electron Szilard engine: demonstrates information-to-energy conversion with entropy cost exactly as predicted by Landauer.

• Chida, K. et al. (2017). “Power generator driven by Maxwell’s demon.” Nature Communications 8:15301. DOI: 10.1038/ncomms15301 — Demonstrates that Maxwell’s demon can generate electrical power, but only by paying the full entropy cost in memory erasure — no free work.

• Kumar, A. et al. (2018). “Sorting ultracold atoms in a three-dimensional optical lattice in a realization of Maxwell’s demon.” Nature 561:83–87. DOI: 10.1038/s41586-018-0458-7 — Atomic-scale Maxwell’s demon sorting ultracold atoms: confirms second law compliance at quantum scales.

• Cottet, N. et al. (2017). “Observing a quantum Maxwell demon at work.” Proceedings of the National Academy of Sciences 114(29):7561–7564. DOI: 10.1073/pnas.1704827114 — Full quantum Maxwell’s demon using superconducting circuits: all thermodynamic quantities measured, second law confirmed.

The 2025 Quantum Proof: Universal Validity Even Under Quantum Loopholes

The most recent and comprehensive result comes from Minagawa et al. (2025), published in npj Quantum Information. They proved that while quantum mechanics does not inherently forbid local violations of the second law, any quantum process implementing a Maxwell’s demon can be realised without actually violating the law. The second law holds universally even in the quantum regime, under the most general possible measurement, feedback, and erasure protocols.

• Minagawa, S., Mohammady, M.H., Sakai, K., Kato, K. & Buscemi, F. (2025). “Universal validity of the second law of information thermodynamics.” npj Quantum Information 11:18. DOI: 10.1038/s41534-024-00922-w — Proves universal validity of the second law for quantum Maxwell’s demon under the most general protocols. Published February 2025.

• Manzano, G. et al. (2025). “A Friendly Guide to Exorcising Maxwell’s Demon.” PRX Quantum. DOI: 10.1103/phkv-wrsd — Comprehensive tutorial reviewing the complete resolution of Maxwell’s demon across classical, quantum, and experimental domains. Published August 2025.

Why This Destroys Senchal’s Falsification Claim

The logical problem is devastating: Senchal offers as a falsification criterion a scenario that physics has already demonstrated cannot occur. ‘If Maxwell’s demon worked for free’ is not a testable prediction of the God Conjecture; it is a well-established impossibility confirmed by the five independent experimental realisations cited above and a 2025 quantum proof. The framework is claiming credit for a prediction that thermodynamics made and confirmed 160 years ago (Clausius 1865), formalised 60 years ago (Landauer 1961), resolved 40 years ago (Bennett 1982), and experimentally verified repeatedly from 2010 to 2025.

This is the falsification equivalent of saying: ‘My theory predicts that perpetual motion machines are impossible. If one is built, my theory fails.’ Such a statement is technically true but contributes zero novel empirical content. It is a parasitic falsification criterion — it attaches itself to the overwhelming experimental success of thermodynamics and presents that success as evidence for a framework that contributed nothing to achieving it.

Sam Erroneously Claimed “Both Would Fail”: Let’s Compare

Now contrast this with what a robustly falsifiable alternative looks like. Thermodynamic Organizational Closure specifies seven independently falsifiable pillars, any one of which, if empirically violated, would break the framework. These include: that all persistent systems maintain organizational closure (Montévil-Mossio), that distinction-making has irreducible energetic cost (Landauer-Bérut), that identity reduces to invariance under perturbation rather than substance, that constraints nest as scale-invariant lattices, that downward causation operates through constraint reshaping rather than force overdetermination, that no single level achieves closure in isolation, and that admissibility propagates upward through constraint hierarchies.

Thermodynamic Monism goes further, generating novel predictions outside thermodynamics proper—including that consciousness arises from organizational closure at coupling positions rather than from any added ingredient, that Indigenous songline architectures should outperform Western memory techniques by predictable margins (confirmed: Reser 2021, DOI:10.1371/journal.pone.0251710), and that any framework claiming fundamental status for a cognitive primitive will exhibit a measurable efficiency gap relative to constraint-based alternatives. Each of these is risky in Popper’s sense: I have specified in advance what would falsify them, and the world could have said no but hasn’t.

Compare this to a framework whose architect, when asked what would break it, answers “both would fail,” conceding that his theory cannot fail independently of the physics it claims to extend. This is clearly a recapitulation of others’ work; there is nothing new here.

REFUTATION — PART D:

Formal Fallacy Analysis of Senchal’s Defence

Senchal’s defence of the framework’s falsifiability deploys several identifiable informal fallacies. Each is documented below with the specific passage that commits the fallacy.

Fallacy 1: False Equivalence (‘The universe is computational’ = ‘Spacetime is Riemannian’)

Senchal writes: ‘“The universe is computational” is no more metaphysical than “spacetime is a Riemannian manifold.”’ This is a textbook false equivalence. The Riemannian manifold postulate generates quantitatively exact, independently confirmed predictions: perihelion precession of Mercury (43 arcseconds/century, confirmed 1915), gravitational lensing (confirmed 1919), gravitational time dilation (confirmed daily by GPS systems requiring GR correction), and gravitational waves (LIGO 2016, detected at a statistical significance greater than 5.1σ). ‘The universe is computational’ has generated zero independently confirmed quantitative predictions. Wolfram himself acknowledged in 2021 that they are ‘still mostly at the stage of exploring the very rich structure of our models’ and are merely ‘on a path to being able to make direct experimental predictions, even if it’ll be challenging to find ones accessible to present-day experiments.’

An axiom that generates precise confirmed predictions is epistemically different from an axiom that generates none. Treating them as equivalent is not a ‘meta-point’ — it is a fallacy that obscures the distinction between productive and unproductive foundational commitments, which is precisely the distinction Lakatos (1978) codified.

Fallacy 2: Tu Quoque (‘Applies equally to any foundational assumption’)

Senchal writes: ‘The demand for a test that would “prove the universe isn’t computational” applies equally to any foundational assumption in physics, including Sweet Rationalism’s own.’ This is the tu quoque (‘you too’) fallacy: deflecting criticism by arguing the critic faces the same problem. But the criticism was never that axioms must be directly testable — it was that the predictions generated from those axioms must be testable, novel, and not already falsified. The Sweet Rationalism framework’s core falsifier — Landauer bound violation — specifies an exact value (kT ln(2) per bit erased), a measurement protocol (calorimetry on isolated bit-erasure), and an experimental lineage (Bérut et al. 2012, Yan et al. 2018). The God Conjecture’s falsifiers specify no values, no protocols, and no experimental lineage. The tu quoque obscures this asymmetry.

Fallacy 3: Equivocation on ‘Specific’

Senchal writes: ‘These aren’t gestures. They’re specific.’ This equivocates between two meanings of ‘specific’: (a) verbally detailed — the criteria are expressed in complete sentences with named concepts; and (b) operationally specific — the criteria specify a measurement protocol, a numerical threshold, and a method for distinguishing confirmation from refutation. Senchal’s criteria are (a) but not (b). ‘If the universe were fully deterministic with no computational branching, the framework fails’ is verbally detailed but operationally vacuous: no experiment is specified, no measurement value is given, no protocol exists that could produce the result ‘the universe has no computational branching.’ Popper (1963) explicitly requires operational specificity, not merely verbal detail.

Fallacy 4: Motte and Bailey on ‘Predictions vs. Axioms’

The motte-and-bailey structure (Shackel 2005) operates as follows. The bailey (the ambitious claim): ‘These aren’t gestures. They’re specific.’ — the framework has genuine, novel falsification criteria. The motte (the defensible retreat): ‘You tend to test predictions, not axiomatic commitments’ — all frameworks have untestable axioms, so the criticism is invalid. When pressed on whether the predictions are novel or testable, Senchal retreats from the claim about predictions to a defence of axioms. But the original criticism was about predictions, not axioms. The motte is correct but irrelevant; the bailey is what was actually claimed and what the evidence refutes.

• Shackel, N. (2005). “The vacuity of postmodernist methodology.” Metaphilosophy 36(3):295–320. DOI: 10.1111/j.1467-9973.2005.00370.x — Defines the motte-and-bailey doctrine: retreating from an ambitious claim to a trivially defensible one when challenged.

Fallacy 5: Appeal to Symmetry (‘Including Sweet Rationalism’s own’)

The closing line, ‘including Sweet Rationalism’s own’, implies that my framework faces the same falsifiability problems. This is demonstrably false by the evidence already documented. My framework specifies: (a) a falsifier with an exact numerical value (kT ln(2) ≈ 2.8×10⁻²¹ J at room temperature); (b) two independent experimental confirmations of the Landauer bound; (c) no reliance on prayer, RNG, or quantum-branchial claims; (d) a falsification criterion that is binary (Landauer violation or no violation) with no ‘subtle effect’ escape hatch. The appeal to symmetry fails because the symmetry does not exist.

REFUTATION — PART E:

Table 2 Analysis: GC vs. Many-Worlds / Copenhagen / Materialism / Idealism

Senchal’s second table claims the God Conjecture makes ‘distinct’ claims that differ from four established interpretive frameworks. Each column is examined below.

Many-Worlds QM: ‘Observers select branches in a computationally efficient way’

GC claims to differ from Many-Worlds by asserting that observers select branches weighted by ‘information-integration telos (gradient-like), not just amplitude.’ The problem: this is either (a) empirically indistinguishable from the Born rule (which already weights branches by amplitude squared, producing the observed statistics), or (b) a prediction that deviates from the Born rule, which has been confirmed to extraordinary precision in every quantum experiment ever conducted. If (a), the claim is not distinct. If (b), it is already falsified. No experiment is specified that could distinguish ‘telos-weighted selection’ from standard Born-rule statistics.
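The dichotomy can be made concrete with a toy calculation. The sketch below is illustrative only: `reweighted_probabilities` and its `telos_weights` argument are hypothetical stand-ins, since the framework specifies no weighting function. A uniform extra factor reproduces the Born rule exactly (case a), while any non-uniform factor changes the predicted branch frequencies (case b), which is precisely what existing experiments constrain.

```python
def born_probabilities(amplitudes):
    """Born rule: probability of each branch is |amplitude|^2, normalised."""
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    return [w / total for w in weights]

def reweighted_probabilities(amplitudes, telos_weights):
    """Hypothetical 'telos-weighted' rule: Born weight times an extra factor.

    `telos_weights` is an illustrative placeholder; no such function is
    specified in the God Conjecture paper.
    """
    weights = [abs(a) ** 2 * t for a, t in zip(amplitudes, telos_weights)]
    total = sum(weights)
    return [w / total for w in weights]

# Two-branch state with Born probabilities of roughly 0.36 and 0.64.
amps = [complex(0.6, 0.0), complex(0.0, 0.8)]

born = born_probabilities(amps)
case_a = reweighted_probabilities(amps, [1.0, 1.0])  # uniform extra factor
case_b = reweighted_probabilities(amps, [1.0, 1.5])  # non-uniform extra factor

max_dev_a = max(abs(p - q) for p, q in zip(born, case_a))
max_dev_b = max(abs(p - q) for p, q in zip(born, case_b))
print(max_dev_a, max_dev_b)
```

Case (a) is observationally empty and case (b) shifts outcome frequencies, so a claim of distinctness has to name the size of the shift it expects.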

Copenhagen: ‘Collapse is emergent description of observer-bounded sampling’

This is not distinct from decoherence theory. Zurek (2003) and the decoherence programme established that apparent ‘collapse’ is an emergent description arising from entanglement with the environment — precisely ‘observer-bounded sampling’ without ontic collapse. The claim that ‘no ontic collapse, only constraints’ is exactly what decoherence theory has stated since Zeh (1970) and Joos & Zeh (1985). GC adds Rulial vocabulary to an established position without adding empirical content.

• Zurek, W.H. (2003). “Decoherence, einselection, and the quantum origins of the classical.” Reviews of Modern Physics 75(3):715–775. DOI: 10.1103/RevModPhys.75.715 — Establishes decoherence as the mechanism for emergent classical reality from quantum substrates, without ontic collapse. 21 years before GC.

Materialism: ‘Consciousness requires a threshold of integrated information’

This is, again, exactly IIT (Tononi 2004): consciousness corresponds to integrated information above a threshold. The claim that ‘not all computation qualifies’ is IIT’s exclusion postulate. GC has relabelled IIT’s core claim in Rulial vocabulary. The theory it cites as its own ‘empirical hint’ for this column (IIT, September 2025) has itself been declared unscientific by a 2025 Nature Neuroscience commentary signed by over 100 researchers.

• IIT-Concerned, Klincewicz, M. et al. (2025). “What makes a theory of consciousness unscientific?” Nature Neuroscience 28(4):689–693. DOI: 10.1038/s41593-025-01881-x — Commentary by 100+ researchers arguing that IIT’s core claims are ‘untestable even in principle.’ The very theory GC cites as its empirical hint has been formally declared unscientific by the neuroscience community.

Idealism: ‘Both mind and matter are perspectival slices of the same computational structure’

This is dual-aspect monism (Spinoza 1677; Strawson 2006; Nagel 2012) with the word ‘computational’ substituted for ‘substance.’ The claim that ‘information is fundamental, not its instantiation’ is structural realism (Ladyman & Ross 2007) with the domain narrowed to computation. Neither dual-aspect monism nor structural realism was invented by GC, and the specific prediction that distinguishes GC from these existing positions is not stated. Without such a prediction, the column is philosophical relabelling, not novel science.

Summary: No Column in Table 2 Contains a Novel Distinguishing Prediction

Every column in Senchal’s second table either: (a) restates an existing interpretation in Rulial vocabulary (Copenhagen → decoherence; Materialism → IIT; Idealism → dual-aspect monism); or (b) implies a deviation from experimentally confirmed physics (Many-Worlds → deviation from Born rule) without specifying a measurement that could detect it. The table demonstrates taxonomic relabelling, not novel prediction. Per Lakatos (1978), a research programme must predict ‘novel facts that would be inconceivable without the theory’ — facts predicted by no rival programme. Table 2 contains no such facts.

REFUTATION — PART F:

The ‘Telos in ALL Observer Systems’ Prediction: Levin’s Sorting Algorithms and Xenobots as Empirical Hints

Senchal’s Table 1 lists a prediction domain called ‘Telos in ALL Observer Systems,’ which claims: ‘If Telos = Information Integration Seeking Behaviour, goal-direction should be seen in non-life systems.’ The test design proposes constructing ‘a series of systems: cell clusters → single cells → chemical reaction-diffusion media’ and measuring ‘optimisation behaviour vs information capacity.’ The listed ‘Empirical Hint’ for this prediction is ‘Levin’s Sorting Algo’s / Xenobots.’

This section demonstrates that: (1) the sorting algorithm paper does not support the claimed prediction; (2) the xenobot evidence contradicts Platonic telos; (3) the prediction itself is not operationally specific enough to be a prediction in any scientific sense; and (4) the ‘empirical hints’ function as appeals to authority rather than independent confirmations.

The Sorting Algorithm Paper: What It Actually Shows

The paper cited is Zhang, Goldstein & Levin (2024), “Classical sorting algorithms as a model of morphogenesis,” published in Adaptive Behavior 33:25–54. The paper makes each array element execute its own comparison logic (‘cell-view sorting’) rather than using a global controller, and shows that these ‘self-sorting’ arrays are more robust to errors than traditional implementations.

What the paper does not show: It does not demonstrate ‘telos’ in non-life systems. It demonstrates that when you distribute a deterministic algorithm’s logic to individual elements, the system gains robustness to perturbation. This is a well-documented property of distributed computing, formalised in self-stabilising systems by Dijkstra (1974) and extensively studied in fault-tolerant distributed algorithms (Lynch 1996). The paper itself acknowledges that all observed behaviours are deterministic consequences of the algorithm’s comparison and swap operations.

• Dijkstra, E.W. (1974). “Self-stabilizing systems in spite of distributed control.” Communications of the ACM 17(11):643–644. DOI: 10.1145/361179.361202 — Established 50 years ago that distributed systems with local-only rules self-correct to stable states. The ‘unexpected competencies’ of self-sorting arrays are predicted by this foundational result.

• Zhang, T., Goldstein, A. & Levin, M. (2024). “Classical sorting algorithms as a model of morphogenesis.” Adaptive Behavior 33(1):25–54. DOI: 10.1177/10597123241269740 — The ‘empirical hint’ paper itself. Demonstrates robustness of distributed sorting, not telos in non-life systems.
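The idea of ‘cell-view’ sorting can be illustrated with a few lines of code. This is a simplified sketch written for this article, not the implementation used by Zhang, Goldstein & Levin; the function name and the fixed left-to-right turn order are assumptions. Each position acts only on a comparison with its right-hand neighbour, and the outcome is fully determined by the input.

```python
def cell_view_bubble_sort(values):
    """Sort by letting each position act on purely local information.

    Each 'cell' looks only at its right-hand neighbour and swaps if it is
    larger. There is no global controller beyond the loop that gives each
    cell a turn, and every step is deterministic.
    """
    cells = list(values)
    swaps = 0
    changed = True
    while changed:
        changed = False
        for i in range(len(cells) - 1):
            if cells[i] > cells[i + 1]:  # local comparison only
                cells[i], cells[i + 1] = cells[i + 1], cells[i]
                swaps += 1
                changed = True
    return cells, swaps

data = [5, 2, 9, 1, 5, 6]
result_1, swaps_1 = cell_view_bubble_sort(data)
result_2, swaps_2 = cell_view_bubble_sort(data)
print(result_1, swaps_1)
```

Running the function twice on the same input gives the same result and the same number of swaps, which is what one expects from a deterministic local rule and requires no appeal to goal-seeking.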

Bubble Sort ‘Clustering’: Algorithm Stability, Not Telos

In public presentations, Levin has described bubble sort as exhibiting ‘clustering’ and ‘free compute’ that operates ‘between the lines’ of the algorithm. These claims have been thoroughly debunked by the computer science community. What Levin describes as mysterious ‘clustering’ is algorithm stability — a well-documented property described in every undergraduate algorithms textbook since Knuth (1973). A stable sorting algorithm preserves the relative order of elements with equal keys. When equal elements end up adjacent, that is not ‘intrinsic motivation’ operating ‘between the lines’ — it is the deterministic consequence of the comparison operator returning false for equal elements, causing no swap.
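Stability is easy to demonstrate directly. In the minimal sketch below (names are illustrative), records carry a sort key and a label; because the comparison is a strict inequality, records with equal keys are never swapped and so keep their original relative order.

```python
def bubble_sort_by_key(records):
    """Bubble sort on the first field using a strict '>' comparison."""
    items = list(records)
    for end in range(len(items) - 1, 0, -1):
        for i in range(end):
            # Strict inequality: equal keys compare False, so no swap occurs
            # and records with equal keys keep their original relative order.
            if items[i][0] > items[i + 1][0]:
                items[i], items[i + 1] = items[i + 1], items[i]
    return items

# (key, label) pairs; labels record the original order of equal keys.
records = [(2, "a"), (1, "b"), (2, "c"), (1, "d"), (2, "e")]
sorted_records = bubble_sort_by_key(records)
print(sorted_records)
# → [(1, 'b'), (1, 'd'), (2, 'a'), (2, 'c'), (2, 'e')]
```

Equal-key records end up adjacent and in their input order, which is the ‘clustering’ effect, produced entirely by the comparison operator.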

Modern toolchains and hardware also perform aggressive optimisations: compilers apply loop unrolling, instruction reordering, and SIMD vectorisation, while processors add branch prediction and caching (Brown et al. 2020; Georgiou et al. 2018). If any behaviour in a sorting algorithm appears unexpected, the first diagnostic question is ‘What optimisations is the compiler performing?’, not ‘Is consciousness emerging in silicon?’

• Knuth, D.E. (1973). The Art of Computer Programming, Vol. 3: Sorting and Searching. Addison-Wesley. WorldCat OCLC: 729934304 — Devotes an entire section to algorithm stability. Published 51 years before Levin’s ‘discovery.’

• Cormen, T.H., Leiserson, C.E., Rivest, R.L. & Stein, C. (1990). Introduction to Algorithms. MIT Press. WorldCat OCLC: 22674911 — Chapter 2 explains sorting stability. Required reading for every CS undergraduate.

Xenobots and Planarian Evidence: Durant 2017 Falsifies Platonic Telos

The second ‘empirical hint’ is xenobots — synthetic organisms constructed from frog (Xenopus laevis) cells that exhibit novel behaviours including locomotion and kinematic replication (Kriegman et al. 2020; Kriegman et al. 2021). These results demonstrate remarkable cellular plasticity — but plasticity is precisely what contradicts the Platonic telos interpretation that Senchal’s prediction depends on.

The decisive empirical evidence comes from Levin’s own laboratory. Durant et al. (2017) demonstrated that by briefly disrupting gap junction connectivity in planarian flatworms, researchers could permanently alter their body plan to produce two-headed organisms. Critically, these altered morphologies remain permanent through subsequent regeneration cycles. The two-headed planarians do not revert to the canonical one-headed form. As the authors state: ‘Species-specific axial pattern can be overridden by briefly changing the connectivity of a physiological network.’

Why this falsifies Platonic telos: If Platonic forms existed as pre-existing ideals that organisms ‘access’ via telos, these altered worms should gradually converge back toward the one-headed canonical form over multiple regeneration cycles. Instead, they maintain the altered configuration permanently. This demonstrates that: (a) form emerges from thermodynamic constraint satisfaction — bioelectric gradients creating stable attractor basins, not convergence on external ideals; (b) there is no external ideal the organism is ‘trying to reach’; (c) the ‘goal’ is simply thermodynamic stability in whatever basin the system currently occupies.
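The phrase ‘stable attractor basins’ can be illustrated with a toy model. The sketch below is an assumption-laden illustration, not a model of planarian bioelectricity: it uses a one-dimensional double-well system, and the functions `step` and `run`, the step size, and the perturbation strength are all hypothetical choices.

```python
def step(x, dt=0.01, push=0.0):
    # Gradient descent on the double-well potential V(x) = x**4/4 - x**2/2,
    # i.e. dx/dt = x - x**3 + push. Stable states sit at x = -1 and x = +1.
    return x + dt * (x - x**3 + push)


def run(x, steps, push=0.0):
    # Integrate the toy dynamics forward with simple Euler steps.
    for _ in range(steps):
        x = step(x, push=push)
    return x


x_before = run(-0.9, 2000)               # settles into the basin at -1
x_pushed = run(x_before, 300, push=1.0)  # brief external perturbation
x_after = run(x_pushed, 5000)            # perturbation removed

print(x_before, x_pushed, x_after)
```

After the brief push is removed, the state settles at +1 and does not drift back to -1. In this toy system the final state depends on history, and no ‘canonical’ target is needed to explain where the system comes to rest.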

• Durant, F., Morokuma, J., Fields, C., Williams, K., Adams, D.S. & Levin, M. (2017). “Long-term, stochastic editing of regenerative anatomy via targeting endogenous bioelectric gradients.” Biophysical Journal 112:2231–2243. DOI: 10.1016/j.bpj.2017.04.011 — Two-headed planarians remain permanently altered. No convergence to canonical form. Demonstrates path-dependent attractor dynamics, not Platonic convergence.

• Kriegman, S., Blackiston, D., Levin, M. & Bongard, J. (2020). “A scalable pipeline for designing reconfigurable organisms.” Proceedings of the National Academy of Sciences 117(4):1853–1859. DOI: 10.1073/pnas.1910837117 — Original xenobot paper. Demonstrates cellular plasticity without invoking Platonic telos.

• Kriegman, S., Blackiston, D., Levin, M. & Bongard, J. (2021). “Kinematic self-replication in reconfigurable organisms.” Proceedings of the National Academy of Sciences 118(49):e2112672118. DOI: 10.1073/pnas.2112672118 — Xenobot kinematic replication. Novel self-reproduction strategy emerges from cellular plasticity, not from accessing Platonic forms.

Levin’s Own Response Confirms Unfalsifiability

When confronted with Durant 2017 as evidence against Platonic convergence, Levin responded: ‘My view is not that standard animals are the full extent of Platonic patterns, so 2-headed planaria are not a problem. But even if we did have only convergence toward pre-existing Platonic forms, how do you know the 2-headed form is not a manifestation of a pre-existing pattern? We haven’t mapped out the space, so it’s way too early to say anything like that.’

This response is a textbook example of ad hoc immunisation (Popper 1963): any observed morphology, no matter how contingent, path-dependent, or historically specific, can always be retroactively declared a member of an uncharted Platonic space. The empirical findings no longer constrain the theory; instead, the theory expands to accommodate every possible outcome. In Lakatos’s (1978) terms, a research programme is degenerating when it merely accommodates known facts through ad hoc adjustments rather than predicting novel ones. Platonic morphospace has become an unfalsifiable reservoir into which all results are poured after the fact, while the actual causal and predictive work is carried by attractor dynamics, bioelectric control, and thermodynamic constraint satisfaction that Levin’s own lab has already demonstrated.

The ‘Empirical Hints’ Column Is an Appeal to Authority, Not Independent Confirmation

Across all five prediction domains in Senchal’s Table 1, the ‘Empirical Hints’ column functions identically: it names a researcher or theory (IIT, Levin, Radin, Orch-OR, DMN research) without providing the logical chain connecting the hint to the specific prediction. This is the appeal to authority fallacy (argumentum ad verecundiam): the cited work is assumed to support the prediction because it comes from a respected source, not because the evidence actually entails the claimed conclusion. Specifically:

‘IIT (September 2025)’: IIT was declared unscientific by a 2025 Nature Neuroscience commentary (Klincewicz et al. 2025). Citing IIT as an ‘empirical hint’ for GC predictions treats IIT’s axioms as evidence rather than as a theory with its own falsifiability problems.

‘Levin’s Sorting Algo’s / Xenobots’: As demonstrated above, the sorting algorithm paper shows distributed system robustness (a 50-year-old computer science result), and xenobot/planarian evidence demonstrates attractor dynamics that contradict Platonic telos. The hint contradicts the prediction it is meant to support.

‘DMN suppression / whole brain integration increase’: Established by Brewer et al. (2011) 13 years before GC, using standard neuroimaging. This is prior art, not a GC prediction.

‘Radin’s meta-analysis’: Cites work on random number generators whose small reported effect Bösch et al. (2006) attributed to publication bias. Citing a refuted effect as an ‘empirical hint’ is not merely an appeal to authority; it is an appeal to a refuted authority.

‘Orch-OR’: Refuted by Tegmark (2000), who calculated that decoherence in the brain is 10^13 to 10^17 times (13 to 17 orders of magnitude) too fast for the proposed mechanism. Citing a falsified theory as an empirical hint is not evidence; it is a liability.

None of the five ‘empirical hints’ provide independent confirmation of GC predictions. Two (Radin/RNG and Orch-OR) are already falsified. One (IIT) has been declared unscientific. One (Levin) contradicts the prediction it is meant to support. One (DMN) is prior art. The ‘Empirical Hints’ column is not a record of evidence; it is a bibliography of borrowed credibility.
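How publication bias alone can manufacture a small pooled effect is easy to sketch. The simulation below is a hypothetical illustration, not a reconstruction of the analysis in Bösch et al. (2006): the study size, the number of studies, and the selection rule in `is_published` are all assumptions.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is reproducible


def simulate_study(n_trials=100):
    # Each trial is a fair coin flip, so the true effect is exactly zero.
    hits = sum(random.random() < 0.5 for _ in range(n_trials))
    return hits / n_trials - 0.5  # observed deviation from chance


def is_published(effect, n_trials=100, p_publish_null=0.2):
    # Assumed selection rule: 'significant' positive results are always
    # published; all other results are published only 20% of the time.
    z = effect / (0.5 / n_trials ** 0.5)
    return z > 1.645 or random.random() < p_publish_null


all_effects = [simulate_study() for _ in range(5000)]
published = [e for e in all_effects if is_published(e)]

mean_all = statistics.mean(all_effects)
mean_published = statistics.mean(published)
print(f"all studies: {mean_all:+.4f}  published only: {mean_published:+.4f}")
```

Under these assumptions the full set of studies averages close to zero, while the published subset shows a small positive mean even though no real effect exists. A meta-analysis that pools only published studies would inherit that spurious effect.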

REFUTATION — PART G:

The Self-Refuting Falsifier: What Senchal Accidentally Concedes, and the Escape Hatches That Do Not Exist

The Maxwell’s Demon Admission: A Framework That Can Only Be Falsified by Falsifying Thermodynamics Has No Novel Content

Senchal offers the following as a falsification criterion for Observer Theory: ‘If observers could violate boundedness, persistence, and relevance constraints at no entropy cost — if Maxwell’s demon worked for free — both observer theory and thermodynamics would fail.’ This statement, intended as evidence of the framework’s falsifiability, is in fact a concession that Observer Theory generates no novel empirical content whatsoever. The concession operates on four levels.

First: identical falsification conditions. The only falsifier Senchal names for Observer Theory, a Maxwell’s demon that works for free, is also a falsifier of thermodynamics. As stated, the two theories therefore share the same falsification condition. But a theory whose falsification condition is identical to that of an already-established theory adds nothing testable to what the established theory already provides. The entire empirical weight is carried by thermodynamics; Observer Theory is along for the ride. This is not a feature of a novel scientific framework. It is the definition of an empirically parasitic one.

Second: the three ‘observer constraints’ are thermodynamic constraints rebranded. By equating the violation of ‘boundedness, persistence, and relevance’ with the violation of thermodynamics, Senchal is conceding that these three properties are not independent theoretical contributions. They are thermodynamic constraints expressed in a different vocabulary. Boundedness is finite energy and finite information channel capacity (Shannon 1948). Persistence is the thermodynamic cost of maintaining a far-from-equilibrium dissipative structure (Prigogine & Stengers 1984). Relevance is selection pressure constrained by free-energy budgets (Friston 2010). None of these require a novel ontological category called an ‘observer.’ All of them were established before the God Conjecture existed.

• Shannon, C.E. (1948). “A mathematical theory of communication.” Bell System Technical Journal 27(3):379–423. DOI: 10.1002/j.1538-7305.1948.tb01338.x — Established finite channel capacity (boundedness) 76 years before Observer Theory.

• Friston, K. (2010). “The free-energy principle: a unified brain theory?” Nature Reviews Neuroscience 11(2):127–138. DOI: 10.1038/nrn2787 — Relevance as free-energy minimisation under thermodynamic constraints, 14 years before Observer Theory.

Third: independent testability is impossible. If the only way to falsify Observer Theory is to falsify thermodynamics, then there exists no experiment that could falsify Observer Theory without simultaneously falsifying thermodynamics. But thermodynamics is among the most experimentally confirmed theories in all of physics. Eddington (1928) stated: ‘If your theory is found to be against the second law of thermodynamics I can give you no hope; there is nothing for it but to collapse in deepest humiliation.’ A theory that can only be falsified when the second law is falsified is, for all practical purposes, unfalsifiable. This is not a strength; it is an admission.
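The structure of this argument can be stated compactly. The notation below is introduced here for illustration and does not appear in Senchal’s paper: F(T) denotes the set of possible observations that would falsify theory T, OT is Observer Theory, TD is thermodynamics, and d is the observation of a Maxwell’s demon working at no entropy cost.

```latex
% Independent testability of OT relative to TD requires at least one
% observation that falsifies OT while leaving TD intact:
\[
  F(\mathrm{OT}) \setminus F(\mathrm{TD}) \neq \varnothing .
\]
% Senchal's criterion supplies a single falsifier, shared by both theories:
\[
  d \in F(\mathrm{OT}) \cap F(\mathrm{TD}) .
\]
% If d is the only falsifier on offer, then
\[
  F(\mathrm{OT}) = \{d\} \subseteq F(\mathrm{TD})
  \quad\Longrightarrow\quad
  F(\mathrm{OT}) \setminus F(\mathrm{TD}) = \varnothing .
\]
```

On this reading, the framework would become independently testable only if it named some observation outside F(TD), and the quoted criterion names none.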

Fourth: Senchal explicitly states the quiet part. The phrasing ‘both observer theory and thermodynamics would fail’ [emphasis added] is the critical admission. The word ‘both’ reveals that Observer Theory has been constructed so that it cannot fail alone. It can only fail as a package deal with the most confirmed theory in physics. A framework that has insured itself against independent falsification has not demonstrated testability. It has demonstrated the opposite: it has revealed, in its architect’s own words, that it contains no independently falsifiable content. Per Lakatos (1978): a research programme that adds no novel empirical content to its protective belt of established auxiliary hypotheses is degenerating. Senchal’s own falsification criterion is the clearest evidence in this entire document that the framework generates zero novel predictions.

A question for the reader: If you were a graduate student asked to design an experiment that could falsify Observer Theory without simultaneously falsifying thermodynamics, what would that experiment look like? If you cannot think of one, consider what that tells you about the framework’s empirical content. If you can, consider whether it matches anything Senchal has proposed.

Anticipated Objection 1: ‘Telos Just Means Optimisation’

The prediction ‘Telos = Information Integration Seeking Behaviour’ is vulnerable to a definitional retreat: if challenged on Platonic convergence, the defender redefines ‘telos’ to mean ‘any system that optimises anything.’ Under this redefinition, the sorting algorithm paper trivially confirms the prediction because sorting is optimisation by definition: the algorithm moves from a disordered state to an ordered one by minimising inversions. But this makes the prediction vacuously true of every physical system. Every river finding its lowest-energy path ‘optimises.’ Every chemical reaction proceeding toward equilibrium ‘optimises.’ Every thermostat ‘optimises.’ A prediction confirmed by everything is falsified by nothing. The Second Law already states that isolated systems evolve toward maximum entropy and that systems held at constant temperature evolve toward minimum free energy, and Jaynes (1957) showed that equilibrium statistical mechanics follows from maximising entropy under constraints. If ‘telos’ means ‘optimisation,’ then Observer Theory has rediscovered the Second Law and called it a prediction.

• Jaynes, E.T. (1957). “Information theory and statistical mechanics.” Physical Review 106(4):620–630. DOI: 10.1103/PhysRev.106.620 — Maximum entropy principle: every physical system ‘optimises’ under constraints. Published 67 years before the ‘Telos in ALL Observer Systems’ prediction.
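The claim that sorting ‘minimises inversions’ by construction can be checked in a few lines. This is a minimal sketch with hypothetical function names and an arbitrary input list; it is not code from the sorting paper.

```python
def count_inversions(seq):
    # Number of pairs (i, j) with i < j and seq[i] > seq[j].
    return sum(
        1
        for i in range(len(seq))
        for j in range(i + 1, len(seq))
        if seq[i] > seq[j]
    )


def bubble_sort_trace(seq):
    """Bubble sort that records the inversion count after every swap."""
    out = list(seq)
    trace = [count_inversions(out)]
    swapped = True
    while swapped:
        swapped = False
        for i in range(len(out) - 1):
            if out[i] > out[i + 1]:
                out[i], out[i + 1] = out[i + 1], out[i]
                trace.append(count_inversions(out))
                swapped = True
    return out, trace


sorted_seq, trace = bubble_sort_trace([5, 2, 4, 1, 3])
print(sorted_seq, trace)
```

Every adjacent swap of an out-of-order pair removes exactly one inversion, so the count falls monotonically to zero. The ‘goal-directed’ descent is a direct consequence of the swap rule, which is why calling it telos adds no predictive content.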

The retreat therefore produces a dilemma from which there is no escape. If ‘telos’ means convergence on Platonic forms, Durant 2017 falsifies it. If ‘telos’ means any optimisation, the Second Law already covers it and Observer Theory adds nothing. There is no third definition that is both novel and empirically testable.

A question for the reader: Can you construct a definition of ‘telos’ that (a) is not reducible to Jaynes’s maximum entropy principle, (b) is not falsified by Durant 2017’s permanent two-headed planarians, and (c) generates a prediction that thermodynamics alone does not already make? If such a definition exists, it has not appeared in any published version of the God Conjecture.

Anticipated Objection 2: ‘Empirical Hints Are Motivations, Not Confirmations’

A likely defence is that the ‘Empirical Hints’ column in Table 1 was never intended as confirmatory evidence — merely as ‘motivations’ for future investigation. This reframing, if accepted, produces a consequence its proponent may not intend: it requires conceding that Table 1 contains zero empirical support for any of its five predictions. The framework has generated no confirmatory evidence whatsoever.

The defence therefore impales itself on a dilemma. Either the hints are confirmatory (in which case the refutations in Part F apply: IIT has been declared unscientific, Radin/RNG is refuted, Orch-OR is falsified, Levin’s data contradict the prediction, and DMN is prior art) or the hints are not confirmatory (in which case the framework is empirically empty). There is no intermediate position that preserves both the framework’s credibility and the hints’ modest status. An honest framing would present Table 1 as ‘five predictions with zero empirical support as of 2026,’ which is a materially different claim from what the table’s layout implies.