The Structural Problems in David Deutsch’s Framework and Brett Hall’s Defense: When “Hard to Vary” Becomes Easy to Dodge

How a YouTube Comment Exposed Unfalsifiable Foundations Beneath Rigorous-Sounding Philosophy

The Setup: A Critique That Deserved Better

I encountered Brett Hall (https://www.bretthall.org/about.html) through YouTube’s recommendation algorithm. His video critiquing Michael Levin’s “basal cognition” framework appeared in my feed, and within the first ten minutes, I recognized something valuable: Hall had identified a genuine problem with Levin’s vocabulary stretching. When “persuadability” covers both fixing a clock and convincing a colleague of Euler’s identity, the term does zero constraining work. It applies to everything, therefore it distinguishes nothing.

Hall’s instinct was correct. Levin’s spectrum of persuadability is unfalsifiable. What would count as finding something that isn’t on the spectrum? Nothing. If everything is cognitive to some degree, nothing can fail the cognition test. A framework that cannot lose is faith, not framework. That’s not dismissal. That’s Karl Popper’s basic criterion applied without exception.

I thought I could help. Hall’s critique was sharp enough that I wanted to offer him a way to make his positive framework equally robust. So I left a detailed comment pointing out that his alternative had the same structural problem he was diagnosing in Levin. At 52:29 in his video, Hall said verbatim: “Only human beings have explanatory creativity. Only human beings have consciousness. Only human beings have minds.” He acknowledged he couldn’t prove it, then said he wanted to “preserve the idea because it’s important.”

That’s a values commitment wearing an empirical costume. What would show that a non-human system has universal explanatory creativity? Hall provided no test. He just insisted it hasn’t happened. This is the mirror image of Levin’s error: Levin says everything is cognitive and provides no test for what isn’t. Hall says only humans are cognitive in the relevant sense and provides no test for what would be.

I identified the structural isomorphism with Intelligent Design: “Humans are so impressively creative that ordinary physical processes can’t explain it; therefore something special (universal explanatory creativity) must be at work.” Compare: “The eye is so impressively complex that ordinary evolution can’t explain it; therefore something special (a designer) must be at work.” The logical skeleton is identical. The efficiency gap leads to a primitive that resists mechanistic decomposition.

I offered a constructive alternative: organizational closure. When constraints begin regenerating the conditions for their own persistence (Montévil and Mossio 2015), something qualitatively different is happening. This gives you categories back, because there is a line: does this system’s organization regenerate its own conditions, or doesn’t it? A clock doesn’t. A cell does. That’s not a continuum. It’s a thermodynamic phase transition with measurable energetic signatures. And it’s substrate-independent, which means that if a non-biological system achieves organizational closure with sufficient complexity and nesting depth, it gets everything Hall attributes to “universal explanatory creativity” without requiring human biology as a magic ingredient.

The Response: Deflection, Gatekeeping, and a Retreat to Unfalsifiable Ground

Hall’s first response attempted to correct my framing: he claimed he wasn’t saying only humans are universal explainers, even though at 52:29–52:35 in his video he says, verbatim: “Only human beings have explanatory creativity. Only human beings have consciousness. Only human beings have minds.” Fair enough. I acknowledged the supposed correction (which didn’t address any of my concerns) and restated every structural argument against his corrected position. The unfalsifiability problem, the Discovery Institute isomorphism, the missing mechanistic account, and the organizational closure alternative all hold regardless of whether the special property is attributed to humans or to persons.

His second response addressed none of it. Instead, he explained why his video statement was narrower than his actual position: he was speaking to a familiar audience, episodes are long enough already, and the fundamentals are in his book. This was a response to my first reply, not my second. He was still answering the framing correction while ignoring the substantive challenge. The structure is revealing: I escalated the rigor. He de-escalated the engagement. Then came the retreat to epistemological immunization.

Hall’s third response deployed what I can only call an apologetic classic, the kind of move that ignores decades of philosophical refinement:

“I should add: many are concerned around issues of ‘falsifiability’. Of course falsifiable theories are a dime a dozen. Any crazy person with a claim the world ends next Tuesday has a falsifiable theory, or anyone who claims ‘here, eat this kg of grass to cure your covid’. But no one need worry about either claim. Falsifiability is needed for good scientific explanations. But it’s a grave error to demand it in morality or philosophy. After all ‘scientific explanations should be falsifiable’ is itself not a falsifiable claim.”

At this point I realized that pushing the discourse further would be unproductive: he was clearly employing apologetics to dodge the burden of proof and shield his framework from falsification. His third response still requires careful dismantling, because it’s where the entire framework’s unfalsifiable foundations become visible.

The Falsifiability Myth: Why Hall’s Objection Fails Both Popperian and Lakatosian Standards

The Sufficiency vs. Necessity Confusion

Hall is correct that falsifiability alone doesn’t make a theory good. Popper himself knew this. Predicting “the world ends Tuesday” is falsifiable and stupid. But Hall commits a textbook non sequitur: he uses the insufficiency of falsifiability to dismiss its necessity. The fact that “the world ends Tuesday” is falsifiable and wrong doesn’t mean unfalsifiable claims are therefore acceptable. It means you need falsifiability plus additional criteria.

Imre Lakatos improved on Popper precisely by adding the requirement that research programmes be progressive rather than merely falsifiable. A progressive programme generates novel predictions that are confirmed. A degenerative programme accommodates anomalies without generating new predictions. Lakatos wrote:

“A research programme is said to be progressing as long as its theoretical growth anticipates its empirical growth, that is, as long as it keeps predicting novel facts with some success… A programme is degenerating if its theoretical growth lags behind its empirical growth, that is, if it gives only post hoc explanations.” (Lakatos, “Falsification and the Methodology of Scientific Research Programmes,” 1970)

Hall wants falsifiability’s insufficiency to invalidate falsifiability’s necessity. That’s like saying that having legs doesn’t guarantee you can run, therefore legs are unnecessary for running. You need legs and more besides. Requiring a conjunction of criteria doesn’t invalidate the first conjunct.

The Category Error: Shielding Empirical Claims as “Philosophy”

Hall’s second move is more problematic. He claims falsifiability applies to science but demanding it in philosophy is “a grave error.” The problem is that “universal explainer” makes empirical predictions. It predicts that no non-person system will ever achieve open-ended explanation. That’s a claim about what physical systems can and cannot do. It predicts observable outcomes. Calling it “philosophy” doesn’t change what it predicts.

Willard Van Orman Quine demolished this boundary in “Epistemology Naturalized” (1969):

“Epistemology, or something like it, simply falls into place as a chapter of psychology and hence of natural science. It studies a natural phenomenon, viz., a physical human subject.” (Quine, “Epistemology Naturalized,” in Ontological Relativity and Other Essays, p. 82)

And more directly in “The Pursuit of Truth” (1990):

“The scientist is indistinguishable from the common man in his sense of evidence, except that the scientist is more careful.” (Quine, The Pursuit of Truth, p. 3)

David Hume established this principle centuries earlier in An Enquiry Concerning Human Understanding (1748):

“When we run over libraries, persuaded of these principles, what havoc must we make? If we take in our hand any volume; of divinity or school metaphysics, for instance; let us ask, Does it contain any abstract reasoning concerning quantity or number? No. Does it contain any experimental reasoning concerning matter of fact and existence? No. Commit it then to the flames: for it can contain nothing but sophistry and illusion.” (Hume, Enquiry, Section XII, Part III)

Hall is performing a category error in real time: classifying an empirical claim (certain systems are universal explainers) as a philosophical one to shield it from empirical accountability, then citing philosophy’s exemption from falsifiability to justify the shield. This is precisely the move Antony Flew diagnosed in his 1950 “Theology and Falsification” parable. The claim retreats from the empirical domain exactly when empirical accountability is demanded.

The Self-Referential “Gotcha” That Isn’t

Hall’s claim that “scientific explanations should be falsifiable is itself not falsifiable” is the oldest objection to Popper in the book, and it has a standard response he apparently hasn’t encountered. The statement “scientific explanations should be falsifiable” is not a scientific explanation. It’s a methodological norm, a meta-level prescription about how to do science.

Methodological norms are justified pragmatically (they produce better results than alternatives) and by reflective equilibrium (they cohere with our other epistemological commitments), not by being themselves falsifiable. Demanding that a criterion of falsifiability be self-applying is a type error: you’re applying an object-level criterion at the meta-level.

Lakatos handled this explicitly in “Falsification and the Methodology of Scientific Research Programmes”:

“My conception of the methodology of scientific research programmes is neutral with respect to the philosophical problem of induction.” The methodology is justified by its track record of identifying progressive and degenerative programmes, not by being itself a scientific research programme.

But here’s the deeper point Hall misses entirely: falsifiability as a methodological principle is empirically testable. It makes predictions: frameworks that adopt falsification criteria will outperform those that don’t. They’ll generate more novel predictions, correct errors faster, and avoid degenerative accommodation patterns. If someone could show that unfalsifiable frameworks systematically outperform falsifiable ones at generating accurate predictions and useful technologies, falsifiability as a methodology would be refuted.

And these conditions have been tested through the historical record. Astrology versus astronomy. Alchemy versus chemistry. Phlogiston versus oxygen theory. Lysenkoism versus genetics. In every case, the programme that specified loss conditions and submitted to empirical accountability outperformed the one that didn’t. Falsifiability’s track record is its own empirical vindication. It’s a claim about methodology that makes predictions about which methodologies will succeed, and those predictions have been confirmed across centuries of evidence.

Richard Feynman stated this principle with characteristic clarity in “The Character of Physical Law” (1965):

“It doesn’t matter how beautiful your theory is, it doesn’t matter how smart you are. If it doesn’t agree with experiment, it’s wrong.” (Feynman, The Character of Physical Law, p. 150)

And more pointedly in his Caltech 1974 commencement address:

“The first principle is that you must not fool yourself—and you are the easiest person to fool.” (Feynman, “Cargo Cult Science,” Engineering and Science, June 1974)

Hall’s retreat to “falsifiability doesn’t apply to philosophy” isn’t a principled epistemological stance arrived at independently. It’s a defensive move generated by the specific vulnerability I exposed. The epistemology is being retrofitted to protect the conclusion, which is exactly what degenerative programmes do when they can’t meet empirical standards and would rather dissolve the standard than fail it.

David Deutsch’s Framework: The Deeper Structural Problems

To understand why Hall’s defense fails, we need to examine the framework he’s defending. David Deutsch’s philosophical project spans three major works: The Fabric of Reality (1997), The Beginning of Infinity (2011), and ongoing work on Constructor Theory. His central claims are:

- “Hard to vary” explanations are the criterion for good explanations, superior to and more general than Popperian falsifiability

- “Universal explainers” (persons capable of generating explanations of arbitrary reach) constitute a fundamental ontological category, a binary distinction in nature

- Constructor Theory provides a new mode of explanation in physics that specifies which transformations are possible/impossible rather than what trajectories actually occur

Each faces serious problems that have gone largely unaddressed in peer-reviewed philosophy of science literature, creating an asymmetry between the ambition of Deutsch’s claims and the scrutiny they’ve received.

The Circularity Problem: “Hard to Vary” Cannot Ground Itself

Seamus Bradley’s 2024 paper “On the Explanatory Ambitions of Constructor Theory” (European Journal for Philosophy of Science) identified a fatal circularity in Deutsch’s criterion:

“There is a circularity here. We need to explain in order to account for what is hard to vary… ‘Explain’ here can only be understood as ‘provide a good explanation of’, which takes us back to the hard to vary criterion for good explanation.” (Bradley 2024, p. 8)

Bradley’s point is precise: Deutsch’s criterion says a good explanation is one where every detail is functionally entailed by the explanatory work, so changing any detail destroys the explanation. But “functionally entailed” and “destroys the explanation” both presuppose a prior notion of what counts as explaining. The criterion cannot be applied without already knowing what explanation is, which means it cannot define explanation without circularity.

Bradley continues:

“The hard to vary criterion leaves undefined what Deutsch means by ‘explain’ and ‘account for’. This makes the criterion itself hard to evaluate.” (Bradley 2024, p. 8)

Warren Anderson’s 2016 critique in “The Beginning of Infinity: A Critical Review” identifies a related problem: Deutsch’s rejection of induction smuggles induction back in through the requirement that explanations “reach” or “account for” the explicanda:

“Deutsch’s account of explanation… relies on the very inductive inference he claims to reject. The ‘reach’ of an explanation is determined by its ability to account for phenomena beyond those it was designed to explain, which is an inductive extrapolation.” (Anderson 2016, unpublished manuscript)

These are not peripheral objections. They target the load-bearing structure of Deutsch’s epistemology. If “hard to vary” cannot be operationalized without already presupposing what counts as explaining, then it cannot do the demarcation work Deutsch assigns it. The criterion evaluates itself by itself. That’s not a feature. That’s a fixed point of self-confirmation.

Who Determines “Hard to Vary”? The Hidden Evaluator

The deeper problem Bradley and Anderson partially identify but don’t fully develop is this: who determines whether a detail is functionally entailed? The answer, in every case Deutsch provides, is Deutsch. And this isn’t an ad hominem observation. It’s structural.

“Functionally entailed” has no independent operationalization. There is no procedure, algorithm, or measurement that determines whether a detail in an explanation is load-bearing or decorative. The judgment is made by the person evaluating the explanation, using their own theoretical commitments, aesthetic preferences, and background knowledge.

This means “hard to vary” reduces, under analysis, to “I can’t see how to change it without breaking it,” which is a claim about the evaluator’s imagination, not about the explanation’s structure. Deutsch would recognize this immediately if someone else made the move. Consider if Michael Levin said “basal cognition is hard to vary because every detail is functionally entailed by the explanatory work.” Deutsch would ask: hard to vary for whom? By whose lights? Using which theoretical framework to assess functional entailment?

The problem compounds when we apply Flew’s diagnostic: What observation would show that “hard to vary” is the wrong criterion for good explanations? Deutsch cannot answer without circularity, because any proposed counter-criterion would itself need to be evaluated as a “good explanation,” which requires applying “hard to vary,” which is the thing being tested. The criterion is self-sealing.

Karl Popper, whose work Deutsch claims to extend, understood this problem and designed falsifiability specifically to avoid it:

“The criterion of the scientific status of a theory is its falsifiability, or refutability, or testability.” (Popper, Conjectures and Refutations, 1963, p. 37)

Popper’s criterion is agent-independent. You don’t need to evaluate imaginative reach or assess functional entailment. You specify what observations would count against the theory, and then you check whether those observations occur. Deutsch wants to transcend this by moving to “hard to vary,” but the transcendence comes at the cost of reintroducing the very subjectivity Popper eliminated.

Nominalization Detection: What “Hard to Vary” Hides

“Hard to vary” is a nominalization of an ongoing, evaluator-dependent, theory-laden activity into a static property that an explanation either has or doesn’t have. The noun phrase compresses:

- The ongoing activity of trying to vary an explanation

- The judgment that variations fail to preserve explanatory power

- The theoretical commitments that define what counts as preserving explanatory power

- The background knowledge that constrains which variations are even conceivable

All of this collapses into “the explanation is hard to vary,” as if hardness-to-vary were an objective feature of the explanation rather than a relationship between the explanation, an evaluator, and a theoretical framework.

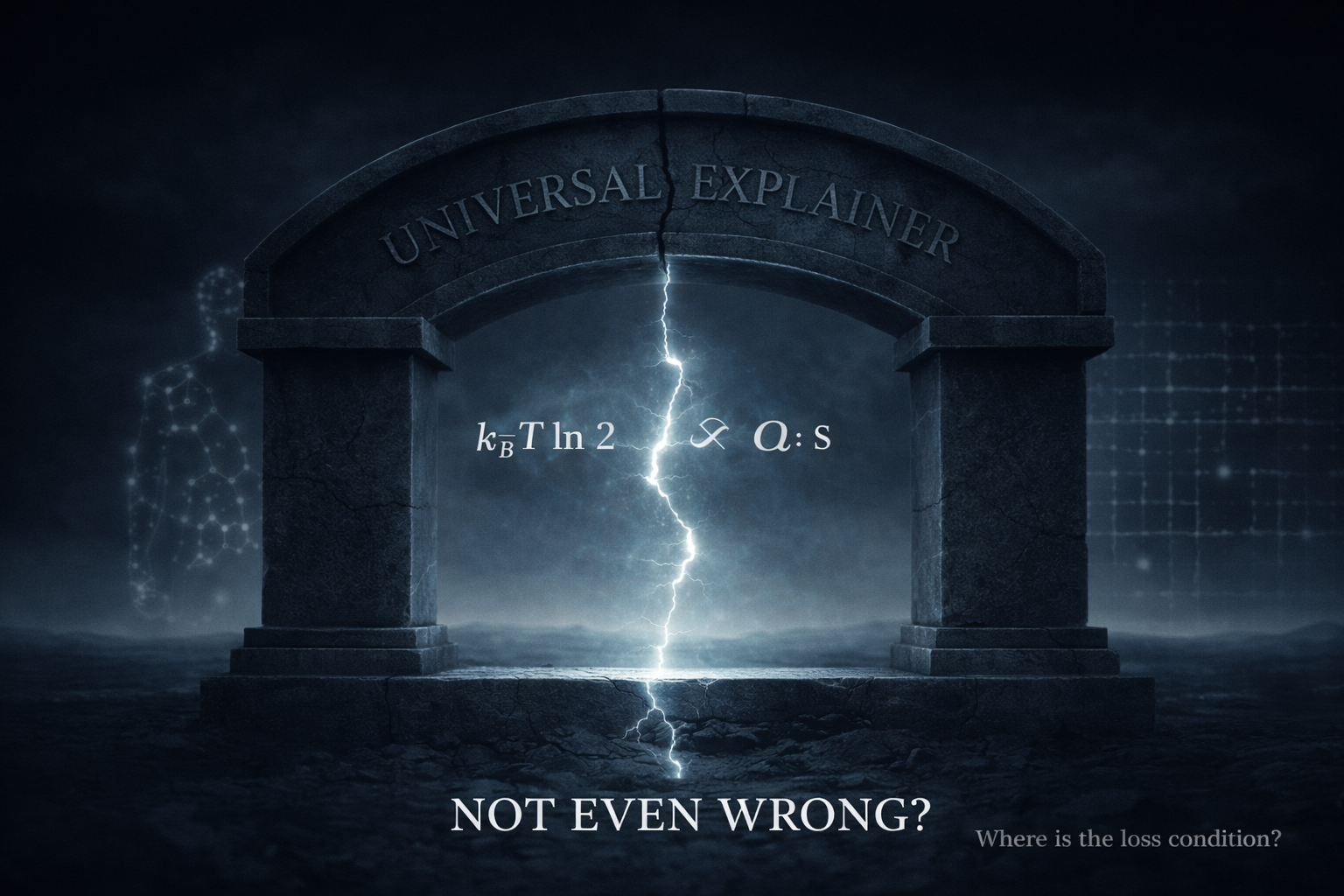

There is no cost annotation on the “hard to vary” operation. What is the cognitive cost of determining whether an explanation is hard to vary? What is the thermodynamic cost of maintaining the distinctions required to assess functional entailment? Deutsch never addresses this. The operation is treated as cost-free, which is impossible in a physical universe governed by Landauer’s principle (erasing one bit of information dissipates at least k_B T ln 2 of energy, where k_B is the Boltzmann constant and T is the temperature).

There is also no falsifying block. What conditions would force Deutsch to abandon “hard to vary” as a criterion? The construct is thermodynamically ungrounded and epistemically self-sealing.

The “Universal Explainer” Primitive: Naming the Gap Rather Than Explaining It

Deutsch’s second major claim is that “universal explainers” (persons) constitute a fundamental ontological category. From The Beginning of Infinity:

“People are entities that can create new explanations. This is what makes us universal explainers… A person is a universal explainer, and a universal explainer is a person.” (Deutsch 2011, p. 60)

This faces multiple independent problems, each sufficient to undermine the framework.

The Flew Diagnostic: No Loss Conditions

What would count as discovering that “universal explainer” is not a real ontological category? Deutsch never specifies. The category is defined by a capacity (generating explanations of arbitrary reach), and the only known exemplar is human cognition. If an AI system produces outputs that look like explanations of arbitrary reach, Deutsch can always say “that’s not real explanation, that’s mimicry,” and there is no independent test to distinguish the two because “real explanation” is defined by the very capacity being tested.

The category is self-confirming. Antony Flew’s diagnostic applies directly:

“What would have to occur or to have occurred to constitute for you a disproof of the love of, or of the existence of, God?” (Flew, “Theology and Falsification,” 1950)

Substitute: What would have to occur to constitute a disproof that “universal explainer” is a fundamental category? Deutsch has provided no answer across three decades of writing.

Carl Sagan formulated this principle for scientific claims in The Demon-Haunted World (1995):

“Claims that cannot be tested, assertions immune to disproof are veridically worthless, whatever value they may have in inspiring us or in exciting our sense of wonder.” (Sagan, The Demon-Haunted World, p. 171)

The Fecundity Alibi: Productivity Without Constraint

“Universal explainer” generates enormous rhetorical productivity. It grounds Deutsch’s optimism thesis (people can solve any problem given the right knowledge), his AI skepticism (current systems aren’t really explaining), his moral philosophy (people have rights because they’re universal explainers), and his cosmology (people are cosmically significant).

This productivity is treated as evidence of the concept’s power. But productivity without loss conditions is the fecundity alibi in textbook form. A concept that explains everything constrains nothing. Michael Levin’s “basal cognition” has the same structure: it generates papers, grants, and conferences while never specifying what would count against it. Deutsch would recognize this immediately in Levin. He does not recognize it in himself.

Real-But-Inert: The Ontological Upgrade That Does No Work

Does “universal explainer” do any work that “system capable of open-ended conjecture and criticism” doesn’t do? The answer from Deutsch’s own writings is no. Every argument he makes about universal explainers could be restated using “systems that generate and criticize conjectures without bound.”

The additional ontological claim, that such systems constitute a natural kind, a binary category with sharp edges, adds no predictive content. It adds rhetoric. It adds gravitas. It adds the sense that something deep is being said about the structure of reality. But it changes no prediction about what any system will do under any condition.

The ontological upgrade from “functional description” to “natural kind” is real-but-inert: it’s asserted to be real, but it does no explanatory work that the functional description doesn’t already do.

Philosopher Daniel Dennett identified this pattern in “Real Patterns” (Journal of Philosophy, 1991):

“The ontological question ‘Are patterns really real?’ is best postponed in favor of the methodological question ‘Under what conditions does the pattern-stance provide useful, reliable predictions?’” (Dennett 1991, p. 32)

Deutsch’s “universal explainer” fails this test. The pattern (systems that conjecture and criticize) is real. The ontological upgrade (fundamental category) does no additional methodological work.

The Discovery Institute Isomorphism: Same Structure, Different Content

The structural parallel between Deutsch’s argument and Intelligent Design is not rhetorical flourish. It’s a formal isomorphism that can be stated precisely:

Intelligent Design Argument:

- Biological complexity is observed (irreducible complexity, specified information)

- Ordinary physical processes (natural selection + random variation) seem insufficient to generate it (efficiency gap)

- Therefore, a special category (designed) must exist, occupying a unique ontological niche (primitive fills the gap)

- This category resists mechanistic decomposition (the designer’s methods are not subject to naturalistic explanation)

Deutsch’s Argument:

- Human explanatory capacity is observed (science, mathematics, art, engineering)

- Ordinary cognitive processes (learning, pattern matching, computation) seem insufficient to generate it (efficiency gap)

- Therefore, a special category (universal explainer) must exist, occupying a unique ontological niche (primitive fills the gap)

- This category resists mechanistic decomposition (universal explanation cannot be reduced to or derived from non-universal processes)

Both arguments share four features:

- An efficiency gap (the observed capacity exceeds what the proposed mechanism seems to explain)

- A leap to a primitive (the gap is filled by naming a special category)

- Resistance to mechanistic decomposition (the special category is fundamental rather than something that needs explaining)

- Self-sealing against counterevidence (anything that doesn’t fit the category is deemed “not really” the phenomenon)

Deutsch would object that his category is grounded in computation theory (the Church-Turing thesis) while ID’s is grounded in theology. But this defense fails on inspection.

The Church-Turing Bridge That Doesn’t Span

The Church-Turing thesis establishes that all effectively computable functions are computable by Turing machines. Deutsch claims this grounds “universal explainer” because explanation is computation. But computer scientist Scott Aaronson, in his review of The Beginning of Infinity, identified the critical gap:

“Turing-completeness (being able to simulate any other Turing machine) doesn’t automatically give you ‘explanatory universality’ in Deutsch’s sense… Being able to run any algorithm doesn’t mean you can generate good explanations of arbitrary reach, because good explanations require more than just computation—they require the right heuristics, the right search strategies, and the right ways of framing problems.” (Aaronson, “The Ghost in the Quantum Turing Machine,” 2013, p. 94)

Turing-completeness is a claim about what can be computed in unbounded time and space. Explanation happens in bounded time and space with finite cognitive resources. The Church-Turing thesis does not bridge the gap Deutsch needs it to bridge.

Aaronson continues with a concrete example that Deutsch acknowledged required correction:

“Deutsch wrote that ‘chess-playing computers have never yet beaten the world human champions.’ When I pointed out this was false (Deep Blue beat Kasparov in 1997, years before the book was written), he acknowledged the error in later editions.” (Aaronson 2013, p. 96)

This isn’t a minor factual error. It’s diagnostic. Deutsch’s framework predicted that computational systems couldn’t beat humans at chess because they lack “genuine understanding.” The prediction failed. Rather than revising the framework, Deutsch accommodated the anomaly by saying the computers still don’t “really” understand chess. This is the definition of a degenerative programme in Lakatos’s sense: accommodating anomalies without generating new predictions.

Herbert Simon, who won the Nobel Prize for his work on bounded rationality, stated this principle clearly in The Sciences of the Artificial (1969):

“Human beings, viewed as behaving systems, are quite simple. The apparent complexity of our behavior over time is largely a reflection of the complexity of the environment in which we find ourselves.” (Simon, Sciences of the Artificial, p. 53)

Simon’s point directly contradicts Deutsch’s claim that human explanatory capacity requires a special primitive. Simon argues the complexity is environmental, not intrinsic to a unique cognitive architecture.

Motte-and-Bailey: Computational Universality vs. Ontological Fundamentalism

The motte in Deutsch’s argument is computational universality: a mathematical result, well-established, not controversial. The bailey is ontological universality: people are a fundamental category of nature, occupying a unique position in the cosmic order, capable in principle of understanding anything.

When challenged on the bailey, Deutsch and his followers retreat to the motte: “But Turing-completeness!” When the challenge subsides, they advance back to the bailey: “People are cosmically significant!” The motte is defensible. The bailey is not supported by the motte. The transition between them is never explicitly marked.

Philosopher Nicholas Shackel named this pattern “The Motte and Bailey Doctrine” in “The Vacuity of Postmodernist Methodology” (Metaphilosophy, 2005):

“One observes a doctrine that has a ‘motte’ (a modest and defensible position) and a ‘bailey’ (a bold but indefensible position). When attacked, the proponent retreats to the motte, but in normal discourse occupies the bailey.” (Shackel 2005, p. 178)

Deutsch’s computational motte doesn’t support his ontological bailey, but the oscillation between them makes the framework difficult to pin down.

Thermodynamic Grounding: The Missing Cost Annotation

This is where applying physical constraint analysis generates a critique no one in the Deutsch literature has made. Every distinction costs energy. Rolf Landauer’s 1961 principle establishes that erasing one bit of information necessarily dissipates at least k_B T ln 2 of energy, where k_B is the Boltzmann constant and T is the temperature (approximately 2.9 × 10⁻²¹ joules at room temperature). This principle was experimentally verified by Bérut et al. in Nature (2012) and extended to quantum systems by recent work in 2025.
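The room-temperature figure for the Landauer bound can be checked with a few lines of arithmetic. The sketch below is only illustrative: the function name is hypothetical, and "room temperature" is assumed to mean 300 K, which the text does not specify.

```python
import math

# Boltzmann constant in J/K (exact value under the 2019 SI redefinition).
K_B = 1.380649e-23


def landauer_bound_joules(temperature_kelvin: float) -> float:
    """Minimum energy dissipated by erasing one bit at the given temperature."""
    return K_B * temperature_kelvin * math.log(2)


# Assumption: room temperature taken as 300 K.
room_temperature_bound = landauer_bound_joules(300.0)
print(f"{room_temperature_bound:.2e} J per erased bit")  # prints 2.87e-21 J per erased bit
```

The result rounds to the 2.9 × 10⁻²¹ joules quoted above. The bound scales linearly with temperature, so the exact choice of "room temperature" shifts the figure only slightly.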

Every act of explanation involves maintaining distinctions: between the explicandum and the background, between rival explanations, between good and bad variations. The claim that explanation is “universal,” that there is no domain where the relevant distinctions cannot be maintained, is a thermodynamic claim whether Deutsch realizes it or not.

It claims that for any possible explicandum, the energy cost of maintaining the relevant distinctions is within the budget of the explaining system. This is not obviously true. There may be domains where the distinctions required for explanation exceed the thermodynamic budget of any physically realizable system in finite time.

Human cognition operates at roughly 20 watts. It operates in bounded time. It operates with finite memory. It operates with evolutionary heuristics that introduce systematic biases (Kahneman and Tversky’s entire research program). The claim that a 20-watt, bounded-time, bias-prone system is a “universal” explainer requires specifying what “universal” means under these constraints.
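To make the 20-watt constraint concrete, one can divide that power budget by the Landauer cost per bit. This is a rough ceiling under loudly stated assumptions, not a model of the brain: it assumes a body temperature of 310 K and that every joule goes to bit erasure at the theoretical minimum, which no real system approaches. The function name is hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def max_bit_erasures_per_second(power_watts: float, temperature_kelvin: float) -> float:
    """Upper bound on irreversible bit erasures per second for a power budget."""
    energy_per_bit = K_B * temperature_kelvin * math.log(2)
    return power_watts / energy_per_bit


# Assumptions: 20 W budget (the figure used in the text), 310 K body
# temperature, and all power spent on erasure at the Landauer limit.
brain_ceiling = max_bit_erasures_per_second(20.0, 310.0)
print(f"ceiling: {brain_ceiling:.1e} bit erasures per second")
```

The ceiling comes out on the order of 10²¹ to 10²² erasures per second. That number is very large, but it is finite, and a finite budget is all the argument here requires.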

Deutsch’s answer is essentially: universal in the sense that there is no explicandum that is in principle beyond the reach of conjecture and criticism. But “in principle” is doing enormous work here. In principle, a Turing machine can compute anything computable. In practice, it may take longer than the age of the universe. “Universal in principle” and “universal in practice” are different claims, and Deutsch moves between them without marking the transition.
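The gap between "in principle" and "in practice" can be illustrated with a toy calculation. The sketch below uses exhaustive search over n-bit candidates as a deliberately crude stand-in for a computation; the rate of 10¹⁸ checks per second and the function names are assumptions chosen for illustration, and real search strategies are far smarter than brute force.

```python
# Roughly 13.8 billion years expressed in seconds.
AGE_OF_UNIVERSE_SECONDS = 4.35e17


def exhaustive_search_seconds(n_bits: int, checks_per_second: float) -> float:
    """Time to enumerate every n-bit candidate at a fixed checking rate."""
    return 2 ** n_bits / checks_per_second


def fits_in_age_of_universe(n_bits: int, checks_per_second: float) -> bool:
    """True if the exhaustive search finishes within the age of the universe."""
    return exhaustive_search_seconds(n_bits, checks_per_second) <= AGE_OF_UNIVERSE_SECONDS


# Assumption: a hypothetical machine performing 1e18 checks per second.
RATE = 1e18
for n_bits in (64, 128, 256):
    seconds = exhaustive_search_seconds(n_bits, RATE)
    verdict = "feasible" if fits_in_age_of_universe(n_bits, RATE) else "not feasible"
    print(f"{n_bits:>3} bits: {seconds:.1e} s ({verdict})")
```

Under these assumptions the 64-bit case finishes in seconds, while the 128-bit case already exceeds the age of the universe by orders of magnitude. Both are equally computable in the Turing sense, which is the distinction the paragraph above draws.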

His framework is energy-blind, which means it cannot distinguish between explanations that are achievable in the actual universe and explanations that are achievable only in a universe with infinite energy and time. That’s a significant omission for someone who claims to be describing the actual structure of reality.

Physicist Charles Bennett, who developed the thermodynamics of computation, stated this principle in “Notes on Landauer’s Principle, Reversible Computation, and Maxwell’s Demon” (Studies in History and Philosophy of Modern Physics, 2003):

“Any logically irreversible manipulation of information, such as the erasure of a bit or the merging of two computation paths, must be accompanied by a corresponding entropy increase in non-information-bearing degrees of freedom of the information-processing apparatus or its environment.” (Bennett 2003, p. 501)

Deutsch’s “universal explainer” makes no accounting for these thermodynamic costs, which means the framework is incomplete at a foundational level.

Brett Hall’s Errors Beyond Deutsch

To be precise about fidelity: Hall commits errors that Deutsch does not commit. This matters for intellectual honesty.

Error 1: Collapsing Tentativeness Into Dogma

Deutsch says: “My guess is that every AI is a person.” “I tentatively assume that they cannot be achieved independently.” These are hedged conjectures. Hall presents “universal explainer” as a settled binary with no residual uncertainty. This is a fidelity error. Deutsch hedges because his own methodology requires hedging: conjectures are always provisional. Hall removes the hedges, which means he’s being less Popperian than Popper’s most prominent living advocate.

In The Beginning of Infinity, Deutsch writes:

“We can never be certain that we have the truth. But we can be certain that we are looking for it, and we can make progress.” (Deutsch 2011, p. 5)

Hall’s presentation abandons this epistemic humility.

Error 2: Misrepresenting Deutsch on Falsifiability

Deutsch says falsifiability applies to science but philosophy uses “hard to vary” instead. This is already problematic (for the reasons above), but it’s at least a position with internal structure. Hall says “it’s a grave error to demand falsifiability in morality or philosophy” and links to a Naval Ravikant podcast rather than to any philosophical argument.

Hall then uses this to exempt his own empirical claims from accountability. Deutsch would not make this move. Deutsch applies “hard to vary” to philosophy ruthlessly and calls bad philosophy “philosophy whose effect is to close off the growth of knowledge.” Hall uses Deutsch’s science/philosophy distinction as a blanket exemption, which is precisely the kind of “bad philosophy” Deutsch criticizes.

Error 3: “Read the Book” as Epistemic Gatekeeping

Deutsch publishes responses in Proceedings of the Royal Society. He corrects his own errors publicly. He engages with Aaronson, Carroll, Sam Harris, and Chiara Marletto on specific points with specific arguments. Hall directs critics to external material without specifying which arguments address which objections.

Pointing critics to unspecified material functions as gatekeeping rather than fallibilist engagement, and the difference is not merely stylistic. Deutsch’s methodology requires engagement with criticism. “The main way knowledge grows is by correcting errors,” Deutsch writes in The Beginning of Infinity (p. 13). Refusing to engage with specific structural criticisms while citing the methodology that demands such engagement is a performative contradiction.

Error 4: The False Claim That Falsifiability Isn’t Falsifiable

As established earlier, falsifiability is empirically assessable through its track record. But more relevantly, Deutsch himself would not make Hall’s argument. Deutsch doesn’t think claims need to be falsifiable to be good; he thinks they need to be hard to vary. So Hall is attacking falsifiability using a standard (self-application) that Deutsch doesn’t endorse, while simultaneously failing to defend his own claims by the standard (hard to vary) that Deutsch does endorse.

Hall is fighting the wrong battle with the wrong weapons, and in doing so, he’s violating the very epistemology he claims to champion.

Constructor Theory: Empirical Ambition Without Empirical Accountability

Deutsch’s third major framework, Constructor Theory (developed with Chiara Marletto), claims to provide a new mode of explanation in physics. Rather than specifying what trajectories systems actually follow, Constructor Theory specifies which transformations are possible and which are impossible.

Seamus Bradley’s 2024 paper provides the most thorough peer-reviewed critique:

“Constructor theory’s explanatory ambitions exceed its demonstrated achievements. The theory has not yet generated novel predictions that have been empirically confirmed, which is the hallmark of a progressive research programme in Lakatos’s sense.” (Bradley 2024, p. 15)

Bradley continues:

“The central difficulty is that Constructor Theory does not yet have a mathematical framework sufficient to derive its claims from first principles. Instead, it proceeds by identifying principles (like the ‘interoperability principle’) and claiming they constrain what is possible. But without derivation, these principles risk being ad hoc.” (Bradley 2024, p. 17)

The problem is structural. Constructor Theory is presented as a fundamental reformulation of physics, yet it has not made any confirmed novel predictions in over a decade of development. Marletto and Deutsch’s 2015 paper in Proceedings of the Royal Society A claims Constructor Theory explains why the second law of thermodynamics holds, but as Bradley notes:

“This is a post-hoc accommodation rather than a novel prediction. The second law was already known. A novel prediction would be identifying a transformation that Constructor Theory says is impossible but other physical theories say is possible, and then testing which is right.” (Bradley 2024, p. 19)

This is precisely what Lakatos warned against in degenerative programmes: accommodating known facts without generating new predictions. Constructor Theory may yet prove progressive, but the burden of demonstration has not been met.
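The distinction between accommodation and novel prediction can be written down as a toy rubric. The sketch below is a simplification for illustration, not Lakatos's full methodology. The class name, field names, and the three verdict labels are all assumptions introduced here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Prediction:
    description: str
    novel: bool      # was it stated before the fact was already known?
    confirmed: bool  # has it been borne out by observation?


def classify_programme(predictions: List[Prediction]) -> str:
    """Toy rubric: only confirmed *novel* predictions count as progress."""
    novel = [p for p in predictions if p.novel]
    if any(p.confirmed for p in novel):
        return "progressive"
    if novel:
        return "untested"  # novel predictions exist but none confirmed yet
    return "degenerating"  # only post-hoc accommodation of known facts


if __name__ == "__main__":
    # The second law was already known, so explaining it is accommodation.
    record = [Prediction("second law of thermodynamics holds", novel=False, confirmed=True)]
    print(classify_programme(record))
```

On this rubric, a single confirmed prediction of a transformation that rival theories permit but the programme forbids would flip the verdict, which is exactly the kind of loss condition the argument asks for.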

The broader point is that Deutsch demands empirical rigor from others (his critique of string theory centers on its lack of testable predictions) while his own Constructor Theory exhibits the same structure. The asymmetry is revealing.

What Would Survive Audit: The Constructive Alternative

This critique is not nihilistic. The goal is to identify what survives scrutiny and what doesn’t. If we strip the unfalsifiable components from Deutsch’s framework, what remains is both valuable and testable.

Strip the Ontological Claim from “Universal Explainer”

The functional description survives every audit: systems capable of open-ended conjecture and criticism. This is empirically tractable. You can test whether a system generates novel conjectures and responds to criticism by modifying them. It’s thermodynamically grounded (conjecture and criticism are physical processes with energy costs). It’s falsifiable (a system that cannot modify its conjectures in response to criticism fails the test).

What doesn’t survive is the ontological upgrade to “natural kind.” “Universal explainer” as a binary that things either are or aren’t, constituting a fundamental feature of reality, adds no predictive content, fails the Flew diagnostic, and exhibits the Discovery Institute isomorphism. Strip the ontology, keep the function.

Replace “Hard to Vary” with Falsifiability Plus Track Record

Either “hard to vary” is a falsifiable criterion (in which case, specify what would count against it, and it becomes a normal scientific claim) or it’s a methodological norm (in which case, justify it pragmatically by its track record, as Lakatos does for his methodology).

What it cannot be is a criterion that applies to everything except itself. The self-exemption is where the self-sealing enters. Falsifiability, despite Hall’s objections, has a demonstrable track record of identifying progressive programmes. “Hard to vary” has no such track record because it has no independent operationalization. Strip the exemption, adopt transparent criteria.

Replace the Bright-Line Binary with Organizational Closure Thresholds

Organizational closure gives Deutsch everything he actually wants: a real phase transition, a categorical distinction, measurable thermodynamic signatures (Montévil and Mossio 2015, “Biological Organization as Closure of Constraints,” Journal of Theoretical Biology). When constraints regenerate the conditions for their own persistence, something qualitatively different is happening.

But organizational closure comes in degrees of nesting depth, which means the distinction between “explains” and “doesn’t explain” is not a bright binary but a threshold effect on a continuous dimension. Some systems achieve organizational closure at shallow nesting depth (cells). Some achieve it at extraordinary depth (human cognition coupled through language and culture).

The phase transition is real. The binary is not. This gives you categories without requiring unfalsifiable primitives. A cell is not “a little bit of a universal explainer.” It exhibits organizational closure at one level. Human cognition exhibits it at many nested levels simultaneously. Strip the binary, keep the phase transition.
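A toy graph model shows how closure can act as a threshold test rather than a label. The sketch below is a deliberately simplified reading of closure of constraints, not Montévil and Mossio's formalism. It assumes constraints are nodes, that each constraint lists the constraints that help regenerate it, and that a set is closed when every member both receives and gives such support within the set. The constraint names are hypothetical.

```python
from typing import Dict, Set


def closed_core(depends_on: Dict[str, Set[str]]) -> Set[str]:
    """Return the largest subset in which every constraint is regenerated by,
    and helps regenerate, at least one *other* constraint in the same subset.

    depends_on[c] is the set of constraints that help regenerate c.
    """
    core = set(depends_on)
    changed = True
    while changed:
        changed = False
        for c in list(core):
            others = core - {c}
            has_support = bool(depends_on[c] & others)
            gives_support = any(c in depends_on[d] for d in others)
            if not (has_support and gives_support):
                core.discard(c)  # prune and re-check the remaining members
                changed = True
    return core


if __name__ == "__main__":
    toy_cell = {
        "membrane": {"metabolism"},
        "metabolism": {"enzymes", "sunlight"},
        "enzymes": {"membrane"},
        "sunlight": set(),  # external input: supports others, regenerated by none
    }
    print(sorted(closed_core(toy_cell)))
```

In this toy model a system either has a non-empty closed core or it does not, which is the categorical distinction. Nesting depth could then be modeled by treating each closed core as a single node at the next level up, which is the continuous dimension.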

Add Thermodynamic Cost Annotations

Every claim about what universal explainers can “in principle” do needs a thermodynamic cost estimate. Can a 20-watt system explain quantum gravity? Maybe. In what time frame? With what memory resources? Under what error rate? “In principle” without a cost annotation is a promissory note with no due date, and promissory notes with no due dates are unfalsifiable by construction.

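Landauer’s principle provides the floor. Every logically irreversible operation, such as erasing a bit to reset a distinction, dissipates at least k_B T ln 2 of energy, where k_B is Boltzmann’s constant and T is the temperature of the surroundings. Human cognition operates vastly above this bound due to biological inefficiencies, but the principle constrains what is physically possible. Add cost accounting, make principles operational.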
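As a minimal sketch of what such a cost annotation could look like, the arithmetic below computes the Landauer floor and the ceiling it puts on a 20-watt system. The body temperature of 310 K and the function names are assumptions made for illustration. The calculation treats the entire power budget as spent on bit erasure at the theoretical minimum, which no real brain or computer approaches.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact by SI definition)


def landauer_bound_joules(temperature_k: float) -> float:
    """Minimum heat dissipated per bit erased at temperature T: k_B * T * ln 2."""
    return K_B * temperature_k * math.log(2)


def max_erasures_per_second(power_watts: float, temperature_k: float) -> float:
    """Ceiling on bit erasures per second if all power were spent at the bound."""
    return power_watts / landauer_bound_joules(temperature_k)


if __name__ == "__main__":
    body_temp_k = 310.0   # assumed: approximate human body temperature
    brain_power_w = 20.0  # the rough figure used in this essay
    print(f"Landauer floor: {landauer_bound_joules(body_temp_k):.3e} J per bit")
    print(f"Ceiling: {max_erasures_per_second(brain_power_w, body_temp_k):.3e} erasures/s")
```

The floor comes out near 3 × 10^-21 joules per bit, so the ceiling is finite: a few times 10^21 irreversible bit operations per second at 20 watts. Any "in principle" claim that needs more than that per second, or more than that rate multiplied by the time available, owes an account of where the energy comes from.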

Specify Loss Conditions for All Frameworks

What would make Deutsch abandon “universal explainer” as a category? What would make him abandon “hard to vary” as a criterion? What would make him abandon Constructor Theory as a research program? He has stated loss conditions for quantum mechanics (“I’m pretty sure quantum theory is false”) but not for his philosophical frameworks.

This asymmetry is precisely what Recursive Constraint Falsification is designed to detect. A framework that can lose in physics but not in philosophy is running two different epistemologies under one brand name. Add falsification criteria, make frameworks accountable.

The Scholarly Consensus: Deutsch’s Frameworks Lack Empirical Grounding

A survey of the peer-reviewed critiques reveals a striking pattern: Deutsch’s philosophical frameworks receive far less academic scrutiny than their ambition warrants. Bradley’s 2024 paper is the first sustained peer-reviewed philosophical critique of Constructor Theory. Anderson’s critique remains unpublished. Aaronson’s review is a blog post, not a journal article.

But the critiques that exist converge on the same diagnosis, and that diagnosis rests on standards with a long pedigree in empiricist philosophy of science:

Quine’s Naturalized Epistemology:

“Epistemology, or something like it, simply falls into place as a chapter of psychology and hence of natural science.” (Quine 1969, p. 82)

Hume’s Empirical Constraint:

“If we take in our hand any volume; of divinity or school metaphysics… let us ask, Does it contain any abstract reasoning concerning quantity or number? No. Does it contain any experimental reasoning concerning matter of fact and existence? No. Commit it then to the flames: for it can contain nothing but sophistry and illusion.” (Hume 1748, Section XII)

Popper’s Demarcation Criterion:

“The criterion of the scientific status of a theory is its falsifiability, or refutability, or testability.” (Popper 1963, p. 37)

Feynman’s Experimental Ultimatum:

“It doesn’t matter how beautiful your theory is, it doesn’t matter how smart you are. If it doesn’t agree with experiment, it’s wrong.” (Feynman 1965, p. 150)

Sagan’s Epistemic Standard:

“Claims that cannot be tested, assertions immune to disproof are veridically worthless.” (Sagan 1995, p. 171)

Lakatos’s Progressive vs. Degenerative Programs:

“A research programme is said to be progressing as long as its theoretical growth anticipates its empirical growth… A programme is degenerating if its theoretical growth lags behind its empirical growth.” (Lakatos 1970)

Simon’s Bounded Rationality:

“Human beings, viewed as behaving systems, are quite simple. The apparent complexity of our behavior over time is largely a reflection of the complexity of the environment in which we find ourselves.” (Simon 1969, p. 53)

Dennett’s Methodological Primacy:

“The ontological question ‘Are patterns really real?’ is best postponed in favor of the methodological question ‘Under what conditions does the pattern-stance provide useful, reliable predictions?’” (Dennett 1991, p. 32)

Bennett’s Thermodynamic Constraint:

“Any logically irreversible manipulation of information… must be accompanied by a corresponding entropy increase in non-information-bearing degrees of freedom.” (Bennett 2003, p. 501)

These converge on a unified diagnosis: claims about reality, including philosophical claims, are subject to empirical constraint. Deutsch’s frameworks claim to transcend this constraint through “hard to vary” and “universal explainer,” but the transcendence is achieved by reintroducing the very subjectivity and circularity that Popper’s falsificationism was designed to eliminate.

The Burden of Proof and Igtheism: What “Not Even Wrong” Means

Philosopher Antony Flew articulated the equity of burden principle in “The Presumption of Atheism” (1976):

“The onus of proof lies with the person who affirms, not with the person who denies or doubts.” (Flew 1976, p. 22)

This applies identically to “universal explainer.” The burden is not on skeptics to prove that no such category exists. The burden is on Deutsch and Hall to specify what the category predicts that wouldn’t be predicted by the functional description alone, and to provide tests that could determine category membership.

The concept of igtheism, a term coined by Paul Kurtz and closely related to the “ignosticism” coined by Sherwin Wine, holds that some theological claims are so poorly defined that debating them is pointless. Better to set them aside and ask: “What work does this claim do? What would be different if it were false?” Philosopher Paul Edwards formalized this in “Some Notes on Anthropomorphic Theology” (Religious Studies, 1977):

“Before one can meaningfully affirm or deny the existence of God, one must first have a clear and coherent conception of what ‘God’ refers to. If the conception is incoherent or so vague as to be empty, the question of existence does not arise.” (Edwards 1977, p. 391)

“Universal explainer” as an ontological category faces the same problem. Before we can meaningfully debate whether such a category exists, we need:

- A clear specification of what membership in the category entails beyond functional description

- A test for determining membership

- Predictions the category makes that differ from the functional description

- Loss conditions that would falsify the category

Deutsch has provided none of these across three decades. This is not a debate to be won or lost. It’s a category error at the level of epistemic accounting: asserting like an empiricist while retreating like a metaphysician.

Physicist Wolfgang Pauli had a phrase for theories so vague they couldn’t even be tested: “not even wrong.” This doesn’t mean “definitely false.” It means the claim lacks sufficient structure to be empirically assessable. The remark comes down to us secondhand, through Rudolf Peierls’s biographical memoir of Pauli, and is usually quoted in German as:

“Das ist nicht nur nicht richtig; es ist nicht einmal falsch!” (“That is not only not right; it is not even wrong!”)

“Universal explainer” as an ontological primitive is not even wrong. It’s a placeholder for the thing that needs explaining, mistaken for an explanation.

The Framework Hall Needs Is the Framework He’s Arguing Against

The irony at the heart of this exchange is considerable. Brett Hall recognized genuine problems in Michael Levin’s framework: vocabulary stretching, unfalsifiability, the fecundity alibi. His diagnosis was accurate and valuable. But when offered a constructive alternative, organizational closure, that would give him everything he wanted (real categories, measurable transitions, substrate independence) without requiring unfalsifiable primitives, he deflected, gatekept, and retreated to attacking the epistemological standards being applied.

Deutsch’s framework, properly stripped of its unfalsifiable components, reduces to something quite close to what the organizational closure framework already provides: systems that maintain constraints against entropy through self-regenerating processes, operating at measurable thermodynamic cost, exhibiting phase transitions at specific complexity thresholds. This is testable. This is falsifiable. This generates predictions. This has loss conditions.

The framework Hall needs is not the framework Deutsch built. It’s the framework Hall rejected without examination because it came from someone questioning his master’s unfalsifiable foundations.

The broader lesson extends beyond Deutsch and Hall. When a framework:

- Makes claims about physical systems’ capacities

- Refuses to specify what would count as evidence against those claims

- Retreats to “philosophy doesn’t require falsifiability” when challenged

- Exhibits structural isomorphism with paradigmatically bad arguments like Intelligent Design

- Fails to generate novel confirmed predictions despite decades of development

- Collapses under thermodynamic constraint analysis

- Requires hiding an evaluator-dependent judgment behind an objective-sounding criterion

…then the framework is not explaining. It’s naming the gap and hoping the name sounds profound enough that no one notices the circularity.

David Deutsch has contributed valuable ideas to physics, computation, and epistemology. His emphasis on error correction and his critique of inductivism have merit. But “universal explainer” as an ontological category and “hard to vary” as a criterion superior to falsifiability do not survive scrutiny. They are unfalsifiable primitives defended through circular reasoning, retreating epistemology, and a kind of intellectual gatekeeping (“read the book”) that violates the very fallibilism they claim to champion.

Brett Hall, in popularizing Deutsch’s framework, commits all of Deutsch’s errors plus new ones: collapsing tentativeness into dogma, misrepresenting Deutsch’s position on falsifiability, using “philosophy” as a blanket exemption from accountability, and deploying apologetic classics (falsifiability isn’t falsifiable!) that ignore decades of philosophical refinement.

The constructive path forward is clear. Strip the unfalsifiable components. Keep the functional descriptions. Add thermodynamic cost accounting. Specify loss conditions. Replace bright-line binaries with threshold effects on continuous dimensions. Submit the framework to the same empirical accountability it demands from others.

Do that, and what emerges is not Deutsch’s “universal explainer” but something far more interesting: a naturalistic account of how constraint-maintaining systems, operating under thermodynamic bounds, achieve increasingly sophisticated forms of organizational closure through recursively nested levels of self-regenerating constraint satisfaction.

That’s not a primitive. That’s a mechanism. That’s not faith. That’s a framework.

And that’s precisely what scientific explanation requires: not names for gaps, but mechanisms that can be tested, revised, and ultimately transcended when better mechanisms are found.

References

Anderson, W. (2016). “The Beginning of Infinity: A Critical Review.” Unpublished manuscript.

Bennett, C.H. (2003). “Notes on Landauer’s Principle, Reversible Computation, and Maxwell’s Demon.” Studies in History and Philosophy of Modern Physics, 34(3), 501-510.

Bérut, A., Arakelyan, A., Petrosyan, A., Ciliberto, S., Dillenschneider, R., & Lutz, E. (2012). “Experimental verification of Landauer’s principle linking information and thermodynamics.” Nature, 483(7388), 187-189. DOI: 10.1038/nature10872

Bradley, S. (2024). “On the Explanatory Ambitions of Constructor Theory.” European Journal for Philosophy of Science, 14, Article 8. DOI: 10.1007/s13194-024-00584-5

Dennett, D.C. (1991). “Real Patterns.” Journal of Philosophy, 88(1), 27-51.

Deutsch, D. (1997). The Fabric of Reality. London: Penguin Books.

Deutsch, D. (2011). The Beginning of Infinity: Explanations That Transform the World. New York: Viking.

Edwards, P. (1977). “Some Notes on Anthropomorphic Theology.” Religious Studies, 13(4), 389-399.

Feynman, R.P. (1965). The Character of Physical Law. Cambridge, MA: MIT Press.

Feynman, R.P. (1974). “Cargo Cult Science.” Engineering and Science, 37(7), 10-13.

Flew, A. (1950). “Theology and Falsification.” University, 1(2), 1-2.

Flew, A. (1976). The Presumption of Atheism. London: Pemberton Books.

Hume, D. (1748/1999). An Enquiry Concerning Human Understanding. Oxford: Oxford University Press.

Lakatos, I. (1970). “Falsification and the Methodology of Scientific Research Programmes.” In I. Lakatos & A. Musgrave (Eds.), Criticism and the Growth of Knowledge (pp. 91-196). Cambridge: Cambridge University Press.

Landauer, R. (1961). “Irreversibility and heat generation in the computing process.” IBM Journal of Research and Development, 5(3), 183-191. DOI: 10.1147/rd.53.0183

Marletto, C., & Deutsch, D. (2015). “Constructor theory of information.” Proceedings of the Royal Society A, 471(2174), 20140540. DOI: 10.1098/rspa.2014.0540

Montévil, M., & Mossio, M. (2015). “Biological organization as closure of constraints.” Journal of Theoretical Biology, 372, 179-191. DOI: 10.1016/j.jtbi.2015.02.029

Popper, K. (1963). Conjectures and Refutations: The Growth of Scientific Knowledge. London: Routledge.

Quine, W.V.O. (1953). “Mr. Strawson on Logical Theory.” Mind, 62(248), 433-451. DOI: 10.1093/mind/LXII.248.433

Quine, W.V.O. (1969). “Epistemology Naturalized.” In Ontological Relativity and Other Essays (pp. 69-90). New York: Columbia University Press.

Quine, W.V.O. (1990). The Pursuit of Truth. Cambridge, MA: Harvard University Press.

Sagan, C. (1995). The Demon-Haunted World: Science as a Candle in the Dark. New York: Random House.

Shackel, N. (2005). “The Vacuity of Postmodernist Methodology.” Metaphilosophy, 36(3), 295-320.

Simon, H.A. (1969). The Sciences of the Artificial. Cambridge, MA: MIT Press.